Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#4414

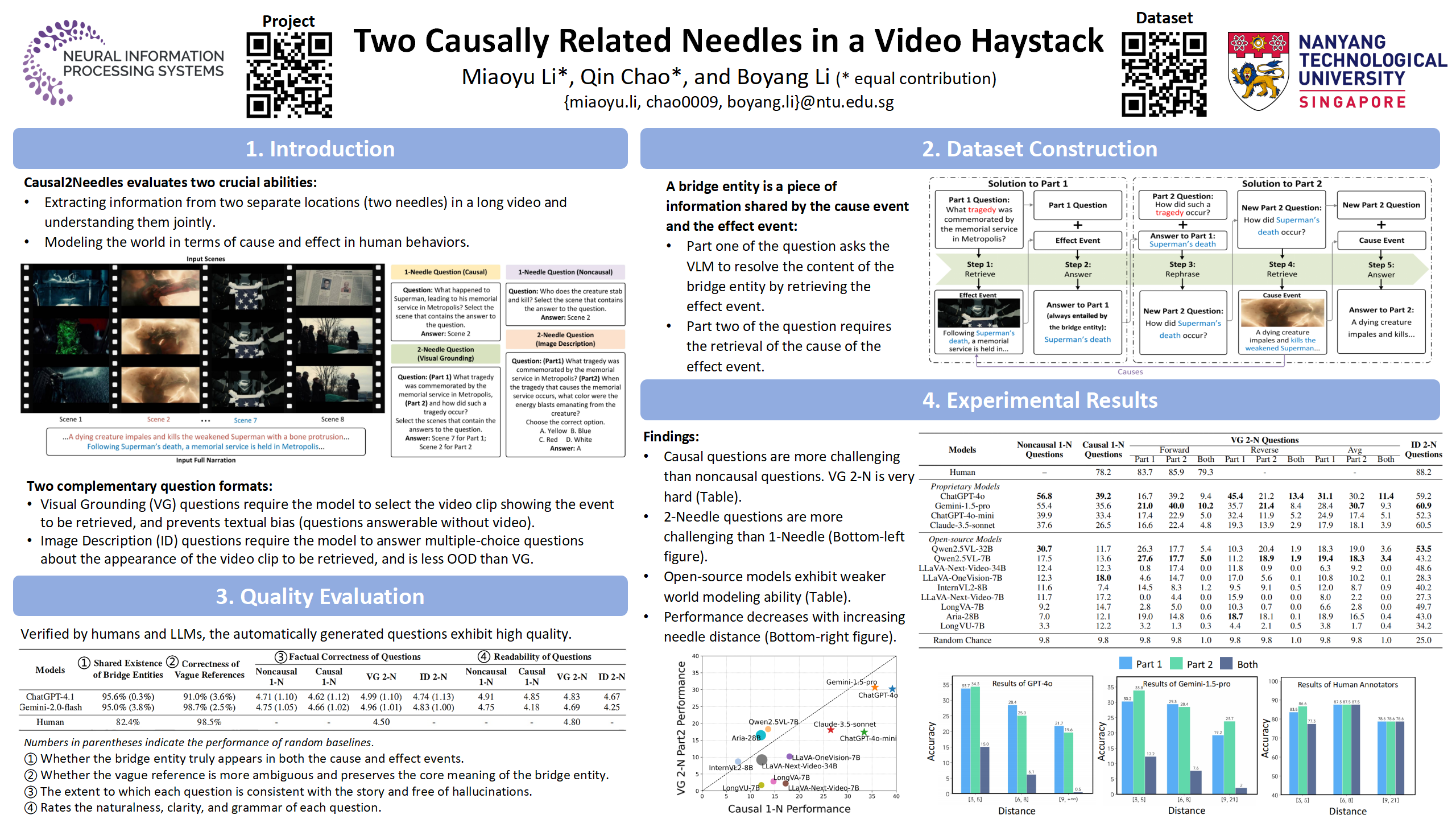

Two Causally Related Needles in a Video Haystack

Abstract

Properly evaluating the ability of Video-Language Models (VLMs) to understand long videos remains a challenge. We propose a long-context video understanding benchmark, Causal2Needles, that assesses two crucial abilities insufficiently addressed by existing benchmarks:

- extracting information from two separate locations (two needles) in a long video and understanding them jointly,

- modeling the world in terms of cause and effect in human behaviors.

Causal2Needles evaluates these abilities using noncausal one-needle, causal one-needle, and causal two-needle questions. The most complex question type, the causal two-needle question, requires extracting information from both the cause event and the effect event in a long video and its associated narration text.

To prevent textual bias, we introduce two complementary question formats: locating the video clip that contains the answer, and verbally describing a visual detail from that clip.

Our experiments reveal that models excelling on existing benchmarks struggle with causal two-needle questions, and that model performance is negatively correlated with the distance between the two needles. These findings highlight critical limitations in current VLMs.
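
As an illustration of the kind of analysis behind the distance finding, the following is a minimal sketch of how a correlation between needle distance and answer correctness could be computed. It is not the benchmark's evaluation code: the `pearson` helper, the record layout, and the toy values are all hypothetical and chosen only to show the calculation.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical toy records, NOT results from the benchmark:
# (distance between the two needles, 1 if the model answered correctly else 0)
toy_records = [(1, 1), (2, 1), (3, 1), (5, 0), (8, 1), (13, 0), (21, 0), (34, 0)]

distances = [d for d, _ in toy_records]
correct = [c for _, c in toy_records]

# With a binary outcome this is the point-biserial correlation.
r = pearson(distances, correct)
print(f"correlation between needle distance and correctness: {r:.3f}")
```

A negative value of `r` on real per-question results would correspond to the trend described above, where accuracy drops as the two needles move further apart.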