Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#110

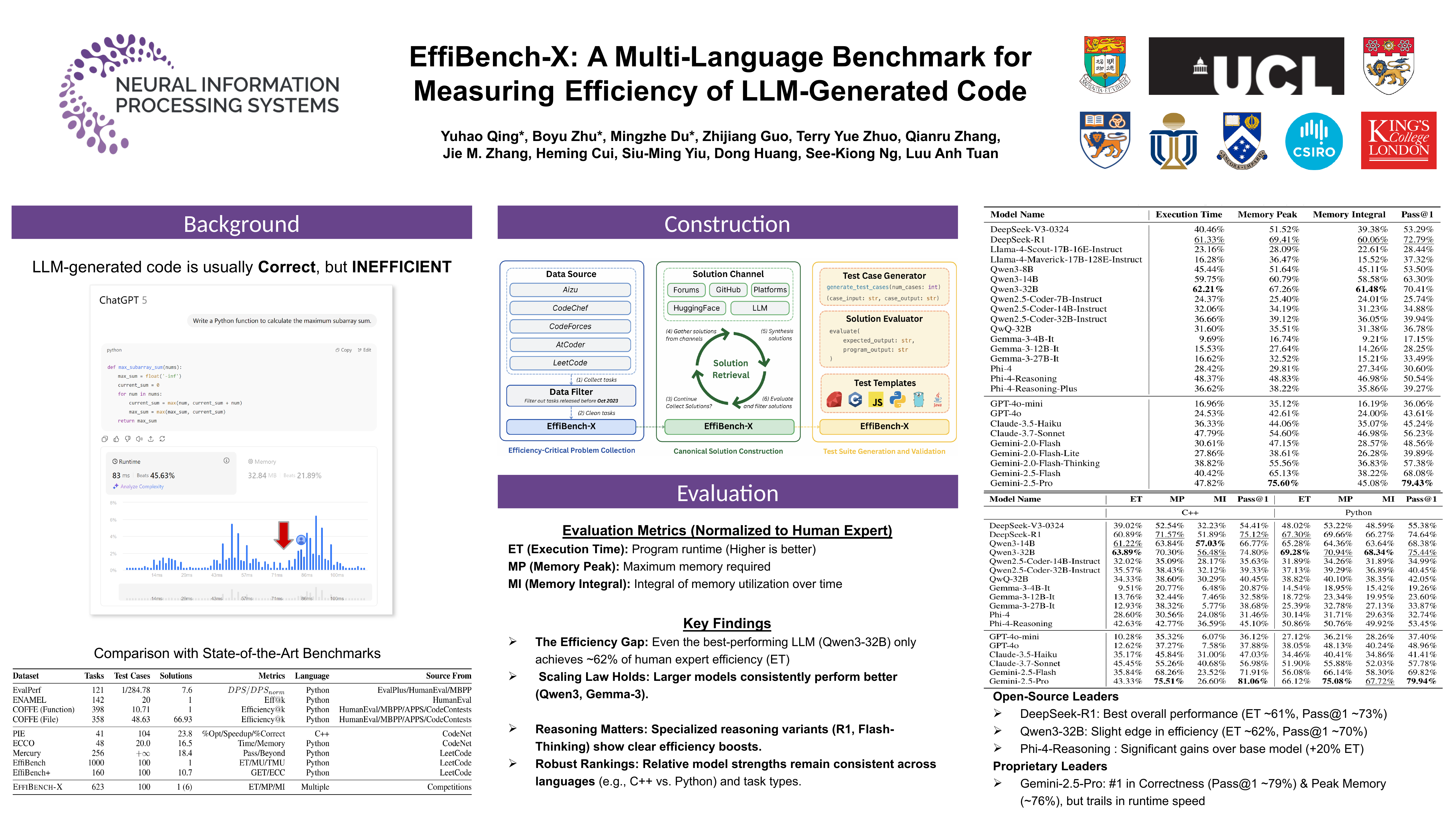

EffiBench-X: A Multi-Language Benchmark for Measuring Efficiency of LLM-Generated Code

Yuhao QING, Boyu Zhu, Mingzhe Du, Zhijiang Guo, Terry Yue Zhuo, Qianru Zhang, Jie M. Zhang, Heming Cui, Siu Ming Yiu, Dong HUANG, See-Kiong Ng, Anh Tuan Luu

Abstract

Existing code generation benchmarks primarily evaluate functional correctness, with limited attention to code efficiency, and they are often restricted to a single language such as Python.

To address this gap, we introduce EffiBench-X, the first large-scale multi-language benchmark specifically designed for robust efficiency evaluation of LLM-generated code. EffiBench-X supports Python, C++, Java, JavaScript, Ruby, and Go, and comprises competitive programming tasks paired with human-expert solutions as efficiency baselines.

Evaluating state-of-the-art LLMs on EffiBench-X reveals that while models frequently generate functionally correct code, they consistently underperform human experts in efficiency. Even the most efficient LLM-generated solutions (e.g., Qwen3-32B) achieve only around 62% of human efficiency on average, with significant language-specific variation: models tend to perform better in Python, Ruby, and JavaScript than in Java, C++, and Go (e.g., DeepSeek-R1’s Python code is markedly more efficient than its Java code).

These findings highlight the need for research into optimization-oriented methods to improve the efficiency of LLM-generated code across diverse languages. The dataset and evaluation infrastructure are publicly available at https://github.com/EffiBench/EffiBench-X.git and https://huggingface.co/datasets/EffiBench/effibench-x.