Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#3912

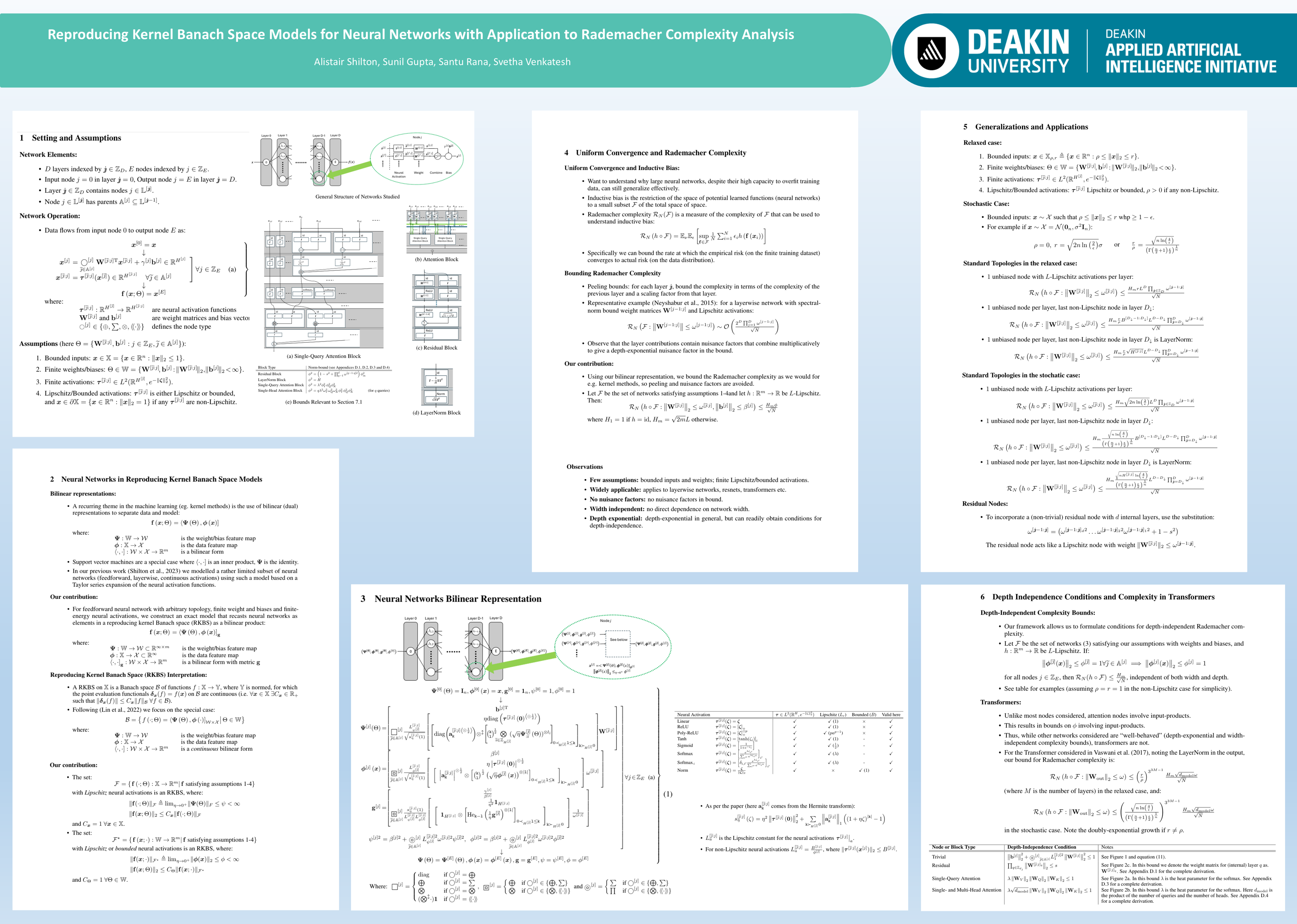

Reproducing Kernel Banach Space Models for Neural Networks with Application to Rademacher Complexity Analysis

Abstract

This paper explores the use of Hermite-transform-based reproducing kernel Banach space methods to construct exact (unapproximated) models of feedforward neural networks of arbitrary width, depth, and topology, including ResNet and Transformer networks. The construction assumes only a feedforward topology, finite-energy activations, and finite (spectral-)norm weights and biases.

Using this model, two straightforward but surprisingly tight bounds on Rademacher complexity are derived, namely:

- a general bound that is width-independent and scales exponentially with depth; and

- a width- and depth-independent bound for networks with appropriately constrained (below threshold) weights and biases.
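
For orientation, the quantity being bounded can be written in its standard textbook form. This is a sketch of the usual definition of empirical Rademacher complexity, not a formula taken from the paper: the symbols $\mathcal{F}$ (the class of network functions), $S$ (a sample of $n$ inputs), and $\sigma_i$ (Rademacher variables) are assumptions introduced here, and the paper's own normalization may differ.

```latex
% Standard empirical Rademacher complexity of a function class F
% on a sample S = {x_1, ..., x_n}; notation assumed, not from the paper.
\[
  \hat{\mathcal{R}}_S(\mathcal{F})
  = \mathbb{E}_{\sigma}\!\left[
      \sup_{f \in \mathcal{F}} \frac{1}{n} \sum_{i=1}^{n} \sigma_i \, f(x_i)
    \right],
  \qquad
  \sigma_1, \dots, \sigma_n \ \text{i.i.d. uniform on } \{-1, +1\}.
\]
```

Read against this definition, a width-independent bound is one in which the right-hand side does not grow with the number of units per layer, and a depth-independent bound is one that likewise does not grow with the number of layers.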