Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#3517

Mind the Quote: Enabling Quotation-Aware Dialogue in LLMs via Plug-and-Play Modules

Abstract

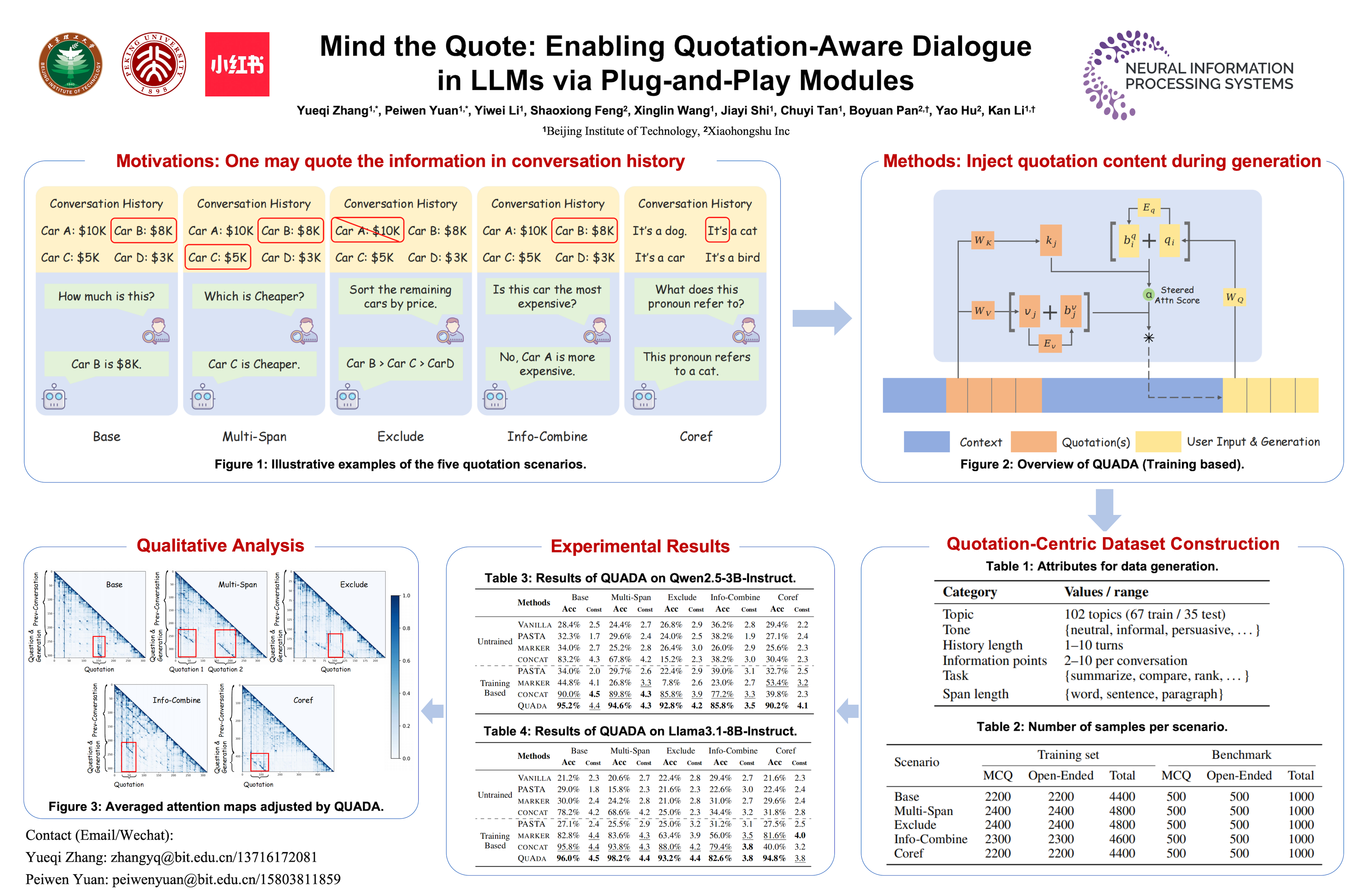

Human–AI conversation frequently relies on quoting earlier text—“check it with the formula I just highlighted”—yet today’s large language models (LLMs) lack an explicit mechanism for locating and exploiting such spans.

We formalize the challenge as span-conditioned generation, decomposing each turn into the dialogue history, a set of token-offset quotation spans, and an intent utterance. Building on this abstraction, we introduce a quotation-centric data pipeline that automatically synthesizes task-specific dialogues, verifies answer correctness through multi-stage consistency checks, and yields both a heterogeneous training corpus and the first benchmark covering five representative scenarios.
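The span-conditioned formulation can be made concrete with a small data structure for one turn. The following is a minimal Python sketch. The class name `QuotedTurn`, the half-open `[start, end)` offset convention, and whitespace tokenization are illustrative assumptions, not details from the paper. A real pipeline would take offsets from the model's own tokenizer.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical convention: half-open token offsets [start, end) into the history.
Span = Tuple[int, int]


@dataclass
class QuotedTurn:
    """Hypothetical container for one span-conditioned turn."""

    history: List[str]  # tokenized dialogue history (whitespace tokens here, by assumption)
    spans: List[Span]   # quoted regions, as token offsets into `history`
    intent: str         # the user's intent utterance

    def __post_init__(self) -> None:
        # Reject spans that are empty or fall outside the history.
        for start, end in self.spans:
            if not (0 <= start < end <= len(self.history)):
                raise ValueError(f"span ({start}, {end}) is out of range")

    def quote_mask(self) -> List[int]:
        """Return 1 for history tokens inside any quoted span, else 0."""
        mask = [0] * len(self.history)
        for start, end in self.spans:
            for i in range(start, end):
                mask[i] = 1
        return mask

    def quoted_text(self) -> List[str]:
        """Recover the surface text of each quoted span."""
        return [" ".join(self.history[s:e]) for s, e in self.spans]


if __name__ == "__main__":
    turn = QuotedTurn(
        history="the area is pi r squared and the volume is".split(),
        spans=[(3, 6)],
        intent="check it with the formula I just highlighted",
    )
    print(turn.quoted_text())
    print(turn.quote_mask())
```

Storing quotes as offsets rather than copied text keeps the dialogue history intact, and the derived mask is the kind of per-token signal an attention-level method could consume.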

To meet the benchmark’s zero-overhead and parameter-efficiency requirements, we propose QuAda, a lightweight training-based method that attaches two bottleneck projections to every attention head, dynamically amplifying or suppressing attention to quoted spans at inference time while leaving the prompt unchanged and updating less than 2.8% of backbone weights. Experiments across multiple models show that QuAda works in all five scenarios and generalizes to unseen topics, offering an effective, plug-and-play solution for quotation-aware dialogue.
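The abstract does not give QuAda's exact equations. What follows is therefore only a hypothetical single-head sketch of the general idea: a frozen attention head whose logits at quoted positions are shifted by a query-dependent scalar. We assume the two bottleneck projections are a down-projection `w_down` (d → r) and an up-projection `w_up` (r → 1). The ReLU, the tanh bound, and `max_bias` are our own choices. A positive output amplifies attention to the quoted span and a negative one suppresses it, while the keys (the prompt) are left unchanged.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]
Matrix = Sequence[Sequence[float]]


def matvec(w: Matrix, v: Vector) -> List[float]:
    """Multiply a (rows x d) matrix by a length-d vector."""
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def softmax(xs: Vector) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def quote_bias(query: Vector, w_down: Matrix, w_up: Matrix, max_bias: float) -> float:
    """Hypothetical bottleneck d -> r (ReLU) -> 1, bounded to [-max_bias, max_bias]."""
    hidden = [max(0.0, h) for h in matvec(w_down, query)]
    return max_bias * math.tanh(matvec(w_up, hidden)[0])


def quote_aware_attention(
    query: Vector,
    keys: Sequence[Vector],
    quote_mask: Sequence[int],
    w_down: Matrix,
    w_up: Matrix,
    max_bias: float = 4.0,
) -> List[float]:
    """Scaled dot-product attention weights plus a learned bias on quoted keys."""
    scale = 1.0 / math.sqrt(len(query))
    bias = quote_bias(query, w_down, w_up, max_bias)
    logits = [
        scale * sum(q * k for q, k in zip(query, key)) + bias * m
        for key, m in zip(keys, quote_mask)
    ]
    return softmax(logits)


if __name__ == "__main__":
    query = [1.0, 0.0]
    keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    mask = [0, 1, 0]  # only position 1 lies inside a quoted span
    w_down = [[1.0, 0.0]]  # r = 1 for illustration
    print("base:", quote_aware_attention(query, keys, [0, 0, 0], w_down, [[2.0]]))
    print("amplify:", quote_aware_attention(query, keys, mask, w_down, [[2.0]]))
    print("suppress:", quote_aware_attention(query, keys, mask, w_down, [[-2.0]]))
```

In this sketch only `w_down` and `w_up` would be trained. That adds r·(d + 1) parameters per head, which stays small relative to a frozen backbone when r is small.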