Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#2410

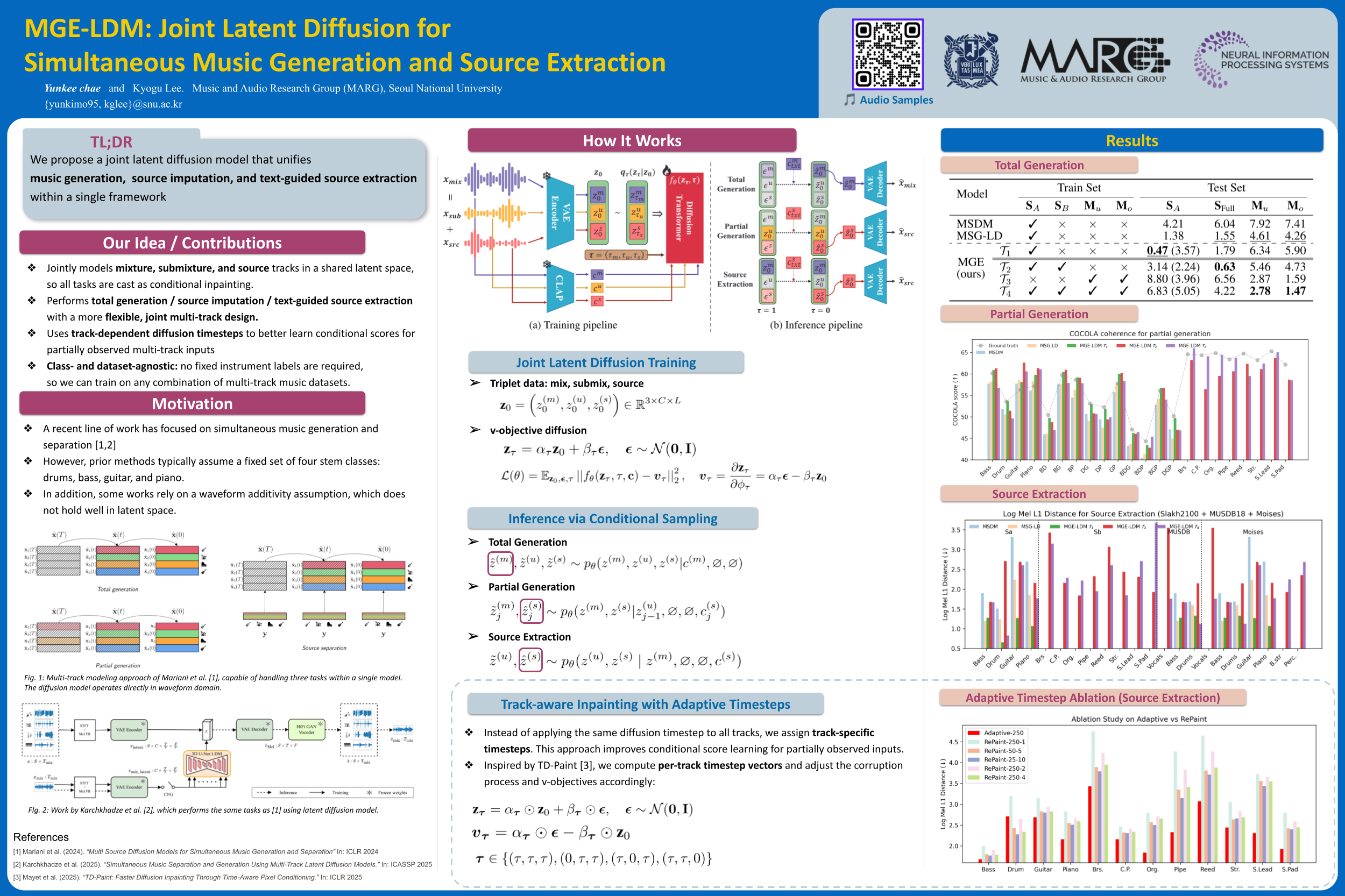

MGE-LDM: Joint Latent Diffusion for Simultaneous Music Generation and Source Extraction

Abstract

We present MGE-LDM, a unified latent diffusion framework for simultaneous music generation, source imputation, and query-driven source separation. Unlike prior approaches constrained to fixed instrument classes, MGE-LDM learns a joint distribution over full mixtures, submixtures, and individual stems within a single compact latent diffusion model.

At inference, MGE-LDM enables:

- complete mixture generation,

- partial generation (i.e., source imputation), and

- text-conditioned extraction of arbitrary sources.

By formulating both separation and imputation as conditional inpainting tasks in the latent space, our approach supports flexible, class-agnostic manipulation of arbitrary instrument sources. Notably, MGE-LDM can be trained jointly across heterogeneous multi-track datasets (e.g., Slakh2100, MUSDB18, MoisesDB) without relying on predefined instrument categories.
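To make the inpainting view concrete, the following is a minimal sketch, not the authors' implementation. It assumes the joint latent is a stack of three slots (mixture, submixture, source), that each task fixes some slots and samples the rest, and that sampling uses simple replacement-based inpainting with a linear noise schedule. The names `TRACKS`, `task_mask`, `inpaint`, and `toy_denoiser` are hypothetical, the toy denoiser stands in for a trained network, and text conditioning is omitted.

```python
import numpy as np

# Assumed ordering of the three latent slots in the joint model.
TRACKS = ("mixture", "submixture", "source")

def task_mask(task):
    """Boolean vector over TRACKS: True = slot is given, False = slot is sampled."""
    given = {
        "generate": (),               # nothing given: sample all slots jointly
        "impute": ("submixture",),    # partial mix given: sample the missing source
        "extract": ("mixture",),      # mixture given: sample the queried source
    }[task]
    return np.array([name in given for name in TRACKS])

def toy_denoiser(x, t):
    """Hypothetical stand-in for a trained network that predicts clean latents."""
    return 0.5 * x

def inpaint(denoise_fn, known, mask, steps=50, seed=0):
    """Replacement-based latent inpainting under x_t = (1 - t) * x0 + t * eps."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(known.shape)
    m = mask[:, None, None]  # broadcast over (track, channel, time)
    ts = np.linspace(1.0, 0.0, steps + 1)
    for t, t_next in zip(ts[:-1], ts[1:]):
        # Overwrite the given slots with a copy of the known latent noised to level t.
        noise = rng.standard_normal(known.shape)
        x = np.where(m, (1 - t) * known + t * noise, x)
        # Estimate the clean latents, recover the implied noise, step to t_next.
        x0_hat = denoise_fn(x, t)
        eps_hat = (x - (1 - t) * x0_hat) / t
        x = (1 - t_next) * x0_hat + t_next * eps_hat
    # Given slots are returned unchanged; only the masked-out slots are generated.
    return np.where(m, known, x)

# Usage: "extract" keeps the mixture slot fixed and samples the other two.
known = np.zeros((3, 4, 8))
known[0] = 1.0
latents = inpaint(toy_denoiser, known, task_mask("extract"))
```

In this sketch the three tasks differ only in the mask, which is why a single model can serve all of them: switching from generation to imputation or extraction changes which slots are clamped, not the sampler.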