Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#3908 Spotlight

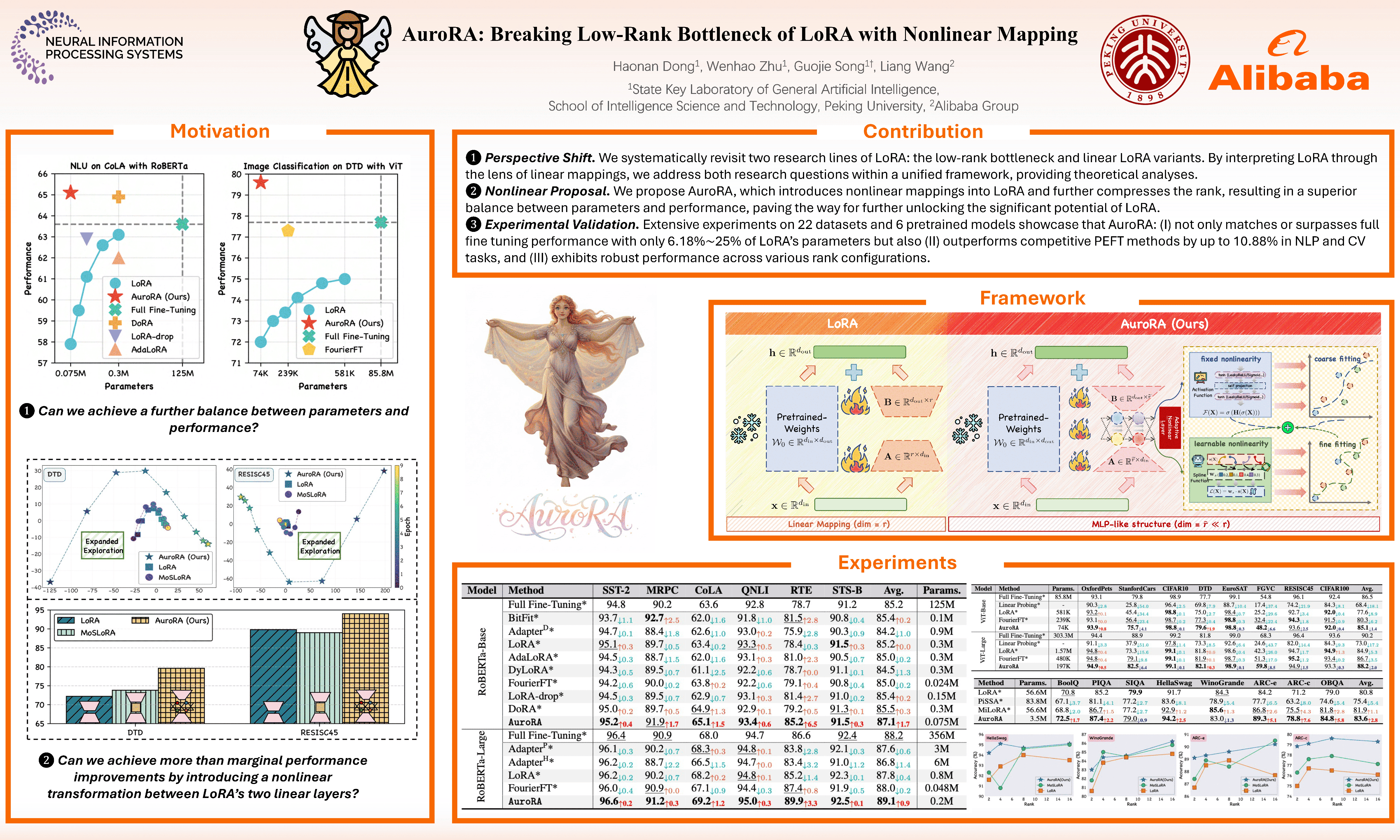

AuroRA: Breaking Low-Rank Bottleneck of LoRA with Nonlinear Mapping

Abstract

Low-Rank Adaptation (LoRA) is a widely adopted parameter-efficient fine-tuning (PEFT) method validated across NLP and CV domains. However, LoRA faces an inherent low-rank bottleneck: narrowing its performance gap with full fine-tuning requires increasing the rank of its parameter matrix, resulting in significant parameter overhead. Recent linear LoRA variants have attempted to enhance expressiveness by introducing additional linear mappings; however, their composition remains inherently linear and fails to fundamentally improve LoRA’s representational capacity.
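To make the bottleneck concrete: LoRA's update ΔW = B·A, with B of shape d×r and A of shape r×k, has rank at most r. Inserting an extra r×r linear map M between them, as the linear variants do, still yields a product of rank at most r. The minimal sketch below checks this with exact rational Gaussian elimination on small random integer matrices. The sizes, seed, and helper names (`matmul`, `rank`, `rand_matrix`) are illustrative assumptions, not taken from the paper.

```python
from fractions import Fraction
import random


def matmul(X, Y):
    """Plain matrix product of nested lists: (n x t) @ (t x m) -> (n x m)."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def rank(M):
    """Exact matrix rank via Gauss-Jordan elimination over rationals."""
    rows = [[Fraction(v) for v in row] for row in M]
    n_rows, n_cols = len(rows), len(rows[0])
    pivots = 0
    for c in range(n_cols):
        if pivots == n_rows:
            break
        pivot = next((i for i in range(pivots, n_rows) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[pivots], rows[pivot] = rows[pivot], rows[pivots]
        for i in range(n_rows):
            if i != pivots and rows[i][c] != 0:
                f = rows[i][c] / rows[pivots][c]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[pivots])]
        pivots += 1
    return pivots


def rand_matrix(n, m, rng):
    """Hypothetical helper: small random integer matrix."""
    return [[rng.randint(-3, 3) for _ in range(m)] for _ in range(n)]


rng = random.Random(0)
d, k, r = 8, 6, 2                      # output dim, input dim, LoRA rank
B = rand_matrix(d, r, rng)             # up-projection
A = rand_matrix(r, k, rng)             # down-projection
M = rand_matrix(r, r, rng)             # extra linear map of a "linear variant"

delta_lora = matmul(B, A)                       # LoRA update B @ A
delta_linear_variant = matmul(matmul(B, M), A)  # B @ M @ A, still linear

print("rank(B@A):", rank(delta_lora), "rank(B@M@A):", rank(delta_linear_variant))
print("LoRA params:", r * (d + k), "full update params:", d * k)
```

Any extra linear factor only rescales or mixes the r bottleneck channels, so reaching a higher-rank target ΔW requires a larger r and therefore more parameters (r·(d+k) grows linearly in r).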

To address this limitation, we propose AuroRA, which inserts an Adaptive Nonlinear Layer (ANL) between two linear projectors to capture both fixed and learnable nonlinearities. The resulting MLP-like structure keeps a compressed rank yet can approximate diverse target functions flexibly and precisely, with theoretical guarantees of lower approximation errors and bounded gradients.
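The structure described above, a nonlinear layer sandwiched between a down-projection A and an up-projection B, can be sketched as ΔW(x) = B·ANL(A·x). The minimal forward-pass sketch below assumes, per bottleneck channel j, ANL(z) = tanh(z) + Σ c_j,m·exp(-(z - μ_m)²): `tanh` stands in for the fixed nonlinearity and the Gaussian basis with trainable coefficients `coef` stands in for the learnable one. The class name, basis choice, initialization, and parameter count are hypothetical and are not the paper's exact ANL. The check at the end shows the key difference from linear variants: a linear adapter satisfies f(2x) = 2·f(x), while the nonlinear one does not.

```python
import math
import random


class NonlinearLowRankAdapter:
    """Hypothetical sketch: delta(x) = B @ anl(A @ x) with a rank-r bottleneck."""

    def __init__(self, d_in, d_out, r, n_basis=4, seed=0):
        rng = random.Random(seed)
        # Down-projection A (r x d_in).
        self.A = [[rng.gauss(0, 1 / math.sqrt(d_in)) for _ in range(d_in)] for _ in range(r)]
        # Up-projection B (d_out x r). LoRA usually zero-initializes B; small random
        # values are used here only so the demo output is non-zero.
        self.B = [[rng.gauss(0, 0.1) for _ in range(r)] for _ in range(d_out)]
        # Fixed basis centers and learnable per-channel coefficients (assumed design).
        self.centers = [-1.5 + 3.0 * i / (n_basis - 1) for i in range(n_basis)]
        self.coef = [[rng.gauss(0, 0.1) for _ in range(n_basis)] for _ in range(r)]

    def anl(self, z, j):
        """Assumed ANL for channel j: fixed tanh plus a learnable Gaussian-basis term."""
        fixed = math.tanh(z)
        learnable = sum(c * math.exp(-(z - mu) ** 2)
                        for c, mu in zip(self.coef[j], self.centers))
        return fixed + learnable

    def _project(self, x):
        return [sum(a * xi for a, xi in zip(row, x)) for row in self.A]

    def _expand(self, h):
        return [sum(b * hj for b, hj in zip(row, h)) for row in self.B]

    def __call__(self, x):
        z = self._project(x)
        return self._expand([self.anl(zj, j) for j, zj in enumerate(z)])

    def linear(self, x):
        """Plain LoRA path B @ (A @ x) with the same A and B, for comparison."""
        return self._expand(self._project(x))

    def num_params(self):
        r, d_in, d_out = len(self.A), len(self.A[0]), len(self.B)
        return r * d_in + d_out * r + sum(len(c) for c in self.coef)


adapter = NonlinearLowRankAdapter(d_in=8, d_out=8, r=2)
x = [0.5, -1.0, 0.25, 0.0, 1.0, -0.5, 0.75, -0.25]
x2 = [2 * v for v in x]

# Homogeneity check: zero for a linear map, non-zero for the nonlinear adapter.
gap_nonlinear = max(abs(a - 2 * b) for a, b in zip(adapter(x2), adapter(x)))
gap_linear = max(abs(a - 2 * b) for a, b in zip(adapter.linear(x2), adapter.linear(x)))
print("nonlinear gap:", gap_nonlinear, "linear gap:", gap_linear)
print("trainable params:", adapter.num_params())
```

In this toy configuration the nonlinear layer adds only r·n_basis coefficients on top of LoRA's r·(d_in + d_out) projection weights; how the actual ANL is parameterized and trained is specified in the paper, not here.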

Extensive experiments on 22 datasets and 6 pretrained models demonstrate that AuroRA:

- (I) matches or surpasses full fine-tuning performance with only of LoRA’s parameters;

- (II) outperforms state-of-the-art PEFT methods by up to in both NLP and CV tasks; and

- (III) exhibits robust performance across various rank configurations.