Poster Session 5 · Friday, December 5, 2025 11:00 AM → 2:00 PM

#2902

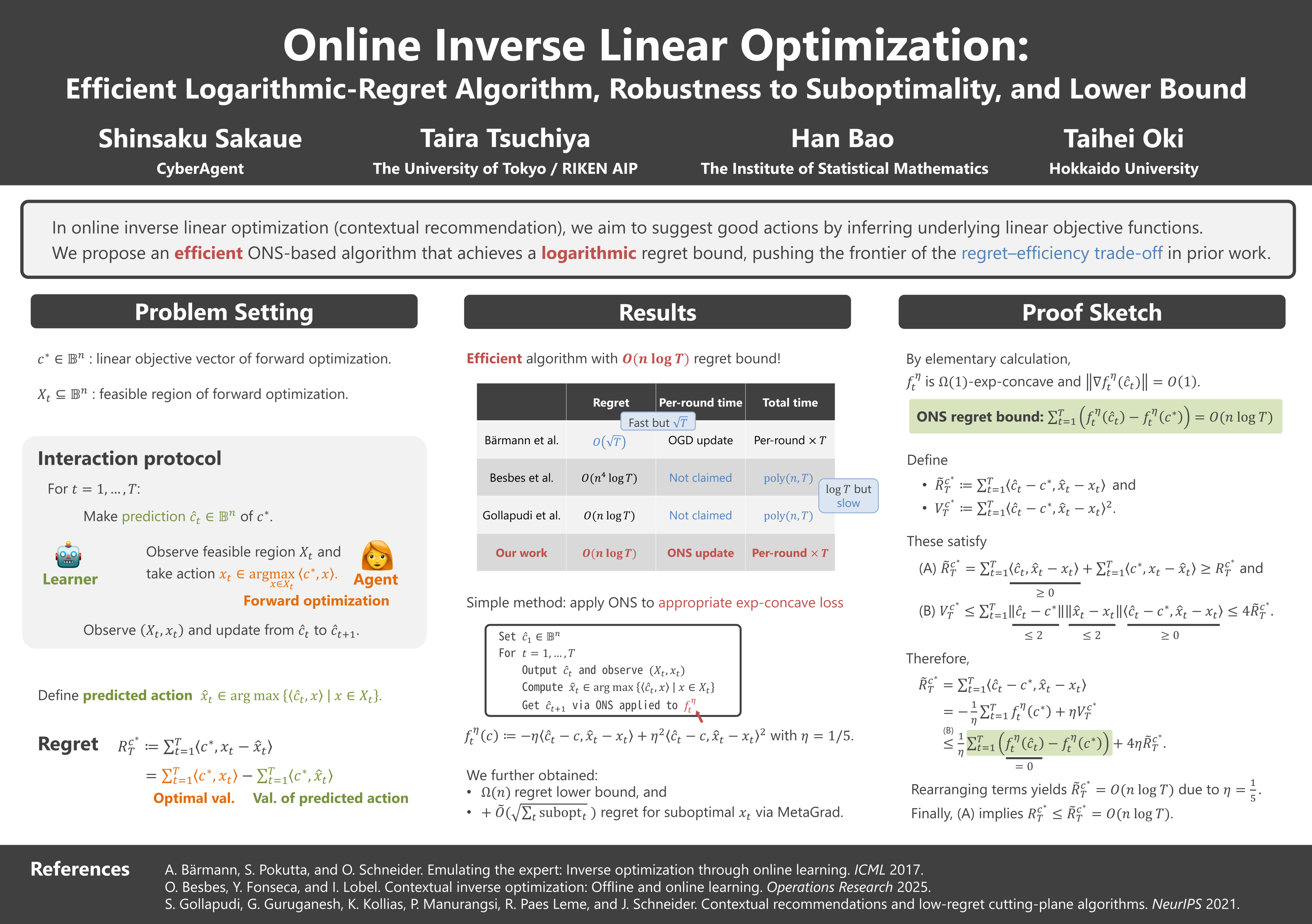

Online Inverse Linear Optimization: Efficient Logarithmic-Regret Algorithm, Robustness to Suboptimality, and Lower Bound

Abstract

In online inverse linear optimization, a learner observes time-varying sets of feasible actions and an agent's optimal actions, selected by solving linear optimization over the feasible actions. The learner sequentially makes predictions of the agent's true linear objective function, and their quality is measured by the regret, the cumulative gap between optimal objective values and those achieved by following the learner's predictions. A seminal work by Bärmann et al. (2017) obtained a regret bound of , where is the time horizon. Subsequently, the regret bound has been improved to by Besbes et al. (2021, 2025) and to by Gollapudi et al. (2021), where is the dimension of the ambient space of objective vectors.

However, these logarithmic-regret methods are highly inefficient when is large, as they need to maintain regions specified by constraints, which represent possible locations of the true objective vector. In this paper, we present the first logarithmic-regret method whose per-round complexity is independent of ; indeed, it achieves the best-known bound of . Our method is strikingly simple: it applies the online Newton step (ONS) to appropriate exp-concave loss functions.

Moreover, for the case where the agent's actions are possibly suboptimal, we establish a regret bound of , where is the cumulative suboptimality of the agent's actions. This bound is achieved by using MetaGrad, which runs ONS with different learning rates in parallel. We also present a lower bound of , showing that the bound is tight up to an factor.