Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#908 Spotlight

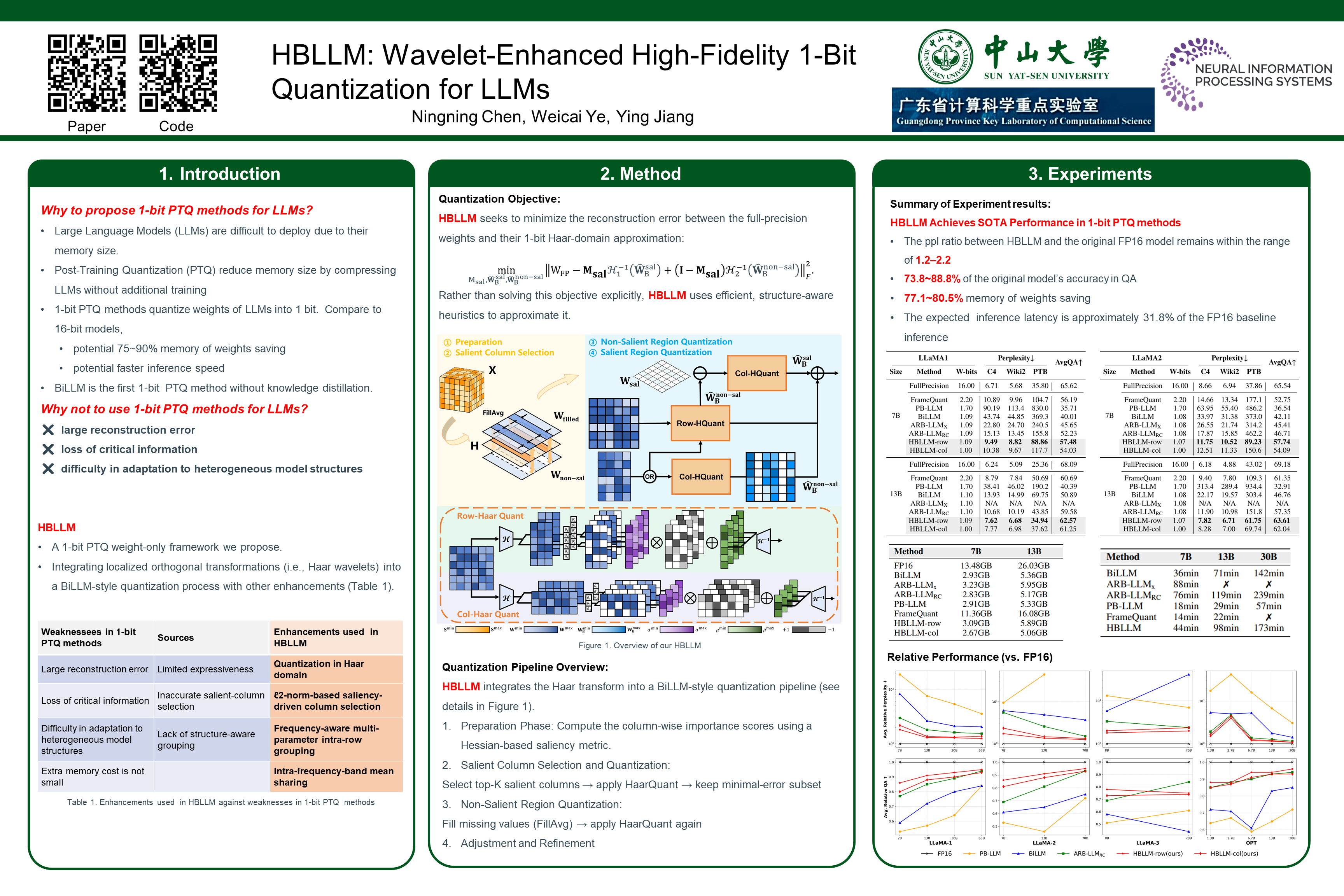

HBLLM: Wavelet-Enhanced High-Fidelity 1-Bit Quantization for LLMs

Abstract

We introduce HBLLM, a wavelet-enhanced high-fidelity 1-bit post-training quantization method for Large Language Models (LLMs). By using Haar wavelet transforms to increase expressive capacity through frequency decomposition, HBLLM significantly improves quantization fidelity while keeping overhead minimal.
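To make the idea of combining a Haar transform with 1-bit quantization concrete, here is a minimal NumPy sketch. It is not the HBLLM implementation: the function names, the single-level transform, and the choice of one scale per frequency band are all illustrative assumptions. It decomposes a weight row into low- and high-frequency Haar coefficients, binarizes each band to a sign code with its own scale, and inverts the transform.

```python
import numpy as np

def haar_forward(row):
    """One-level orthonormal Haar transform of an even-length 1-D array."""
    even, odd = row[0::2], row[1::2]
    low = (even + odd) / np.sqrt(2.0)   # local averages (low frequency)
    high = (even - odd) / np.sqrt(2.0)  # local differences (high frequency)
    return low, high

def haar_inverse(low, high):
    """Inverse of haar_forward; interleaves the reconstructed samples."""
    out = np.empty(low.size * 2, dtype=float)
    out[0::2] = (low + high) / np.sqrt(2.0)
    out[1::2] = (low - high) / np.sqrt(2.0)
    return out

def binarize(band):
    """1-bit code: sign(band) times a single scale.

    The mean absolute value is the least-squares optimal scale
    for a fixed sign pattern.
    """
    alpha = np.mean(np.abs(band))
    signs = np.where(band >= 0, 1.0, -1.0)
    return alpha * signs

def quantize_row_haar(row):
    """Hypothetical pipeline: transform, binarize each band, invert."""
    low, high = haar_forward(row)
    return haar_inverse(binarize(low), binarize(high))

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.normal(size=16)
    print("plain 1-bit error:", np.linalg.norm(w - binarize(w)))
    print("Haar 1-bit error: ", np.linalg.norm(w - quantize_row_haar(w)))
```

Because each band carries its own scale, the reconstructed row can take more than two distinct values even though every stored coefficient is a single sign bit, which is one way a frequency decomposition can add expressive capacity at small cost.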

This approach features two innovative structure-aware grouping strategies:

- frequency-aware multi-parameter intra-row grouping

- norm-based saliency-driven column selection.
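The two strategies listed above can be illustrated with a short NumPy sketch. Everything here is an assumption for illustration rather than the authors' code: the text does not say which norm is used, so an L2 column norm stands in for the saliency score, and "multi-parameter" grouping is approximated by giving each group of values within a row its own offset and scale.

```python
import numpy as np

def select_salient_columns(weight, k):
    """Return the sorted indices of the k columns with the largest norm.

    Assumption: saliency is the L2 norm of each column.
    """
    norms = np.linalg.norm(weight, axis=0)
    top_k = np.argsort(norms)[-k:]
    return np.sort(top_k)

def binarize_group(values):
    """Two-parameter 1-bit code for one group: offset plus signed scale."""
    mu = np.mean(values)
    centered = values - mu
    alpha = np.mean(np.abs(centered))
    signs = np.where(centered >= 0, 1.0, -1.0)
    return mu + alpha * signs

def binarize_row_in_groups(row, n_groups):
    """Hypothetical intra-row grouping: split a row into contiguous
    groups and binarize each with its own (mu, alpha) pair."""
    parts = np.array_split(row, n_groups)
    return np.concatenate([binarize_group(p) for p in parts])
```

In a scheme of this kind, the selected salient columns would typically be treated separately from the rest (for example, quantized with extra parameters), while the remaining entries are grouped within each row.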

Experiments conducted on the OPT and LLaMA models demonstrate that HBLLM achieves state-of-the-art performance in 1-bit quantization, attaining a perplexity of on LLaMA-B with an average weight storage of only bits.

Code is available at https://github.com/Yeyke/HBLLM.