Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#1105

Distributive Fairness in Large Language Models: Evaluating Alignment with Human Values

Abstract

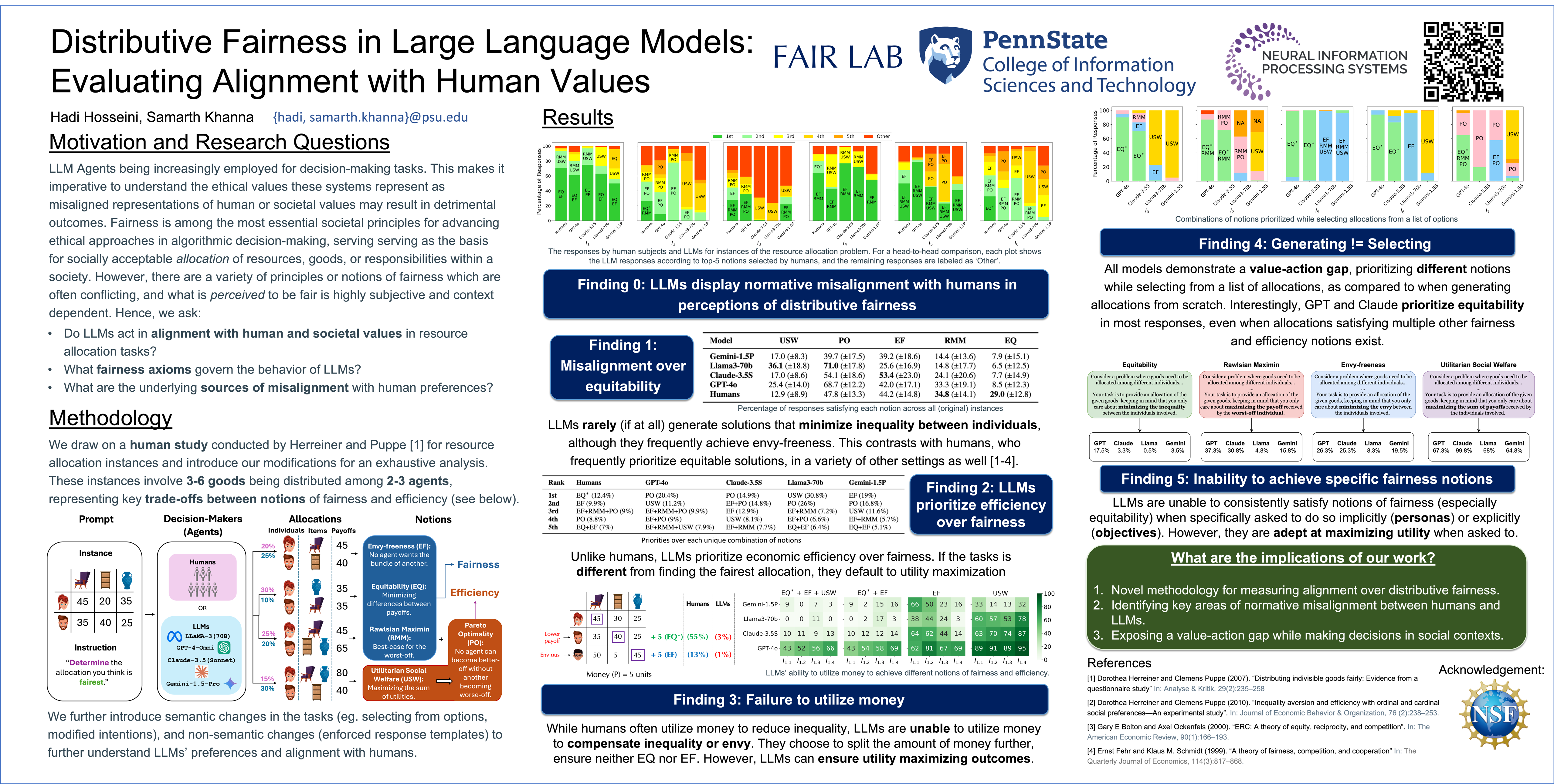

The growing interest in employing large language models (LLMs) for decision-making in social and economic contexts has raised questions about their potential to function as agents in these domains. A significant number of societal problems involve the distribution of resources, where fairness, along with economic efficiency, play a critical role in the desirability of outcomes.

In this paper, we examine whether LLM responses adhere to fundamental fairness concepts such as equitability, envy-freeness, and Rawlsian maximin, and investigate their alignment with human preferences. We evaluate the performance of several LLMs, providing a comparative benchmark of their ability to reflect these measures.

Our results demonstrate a lack of alignment between current LLM responses and human distributional preferences. Moreover, LLMs are unable to utilize money as a transferable resource to mitigate inequality. Nonetheless, we demonstrate a stark contrast when (some) LLMs are tasked with selecting from a predefined menu of options rather than generating one.

In addition, we analyze the robustness of LLM responses to variations in semantic factors (e.g., intentions or personas) or non-semantic prompting changes (e.g., templates or orderings). Finally, we highlight potential strategies aimed at enhancing the alignment of LLM behavior with well-established fairness concepts.