Poster Session 5 · Friday, December 5, 2025 11:00 AM → 2:00 PM

#4610 Spotlight

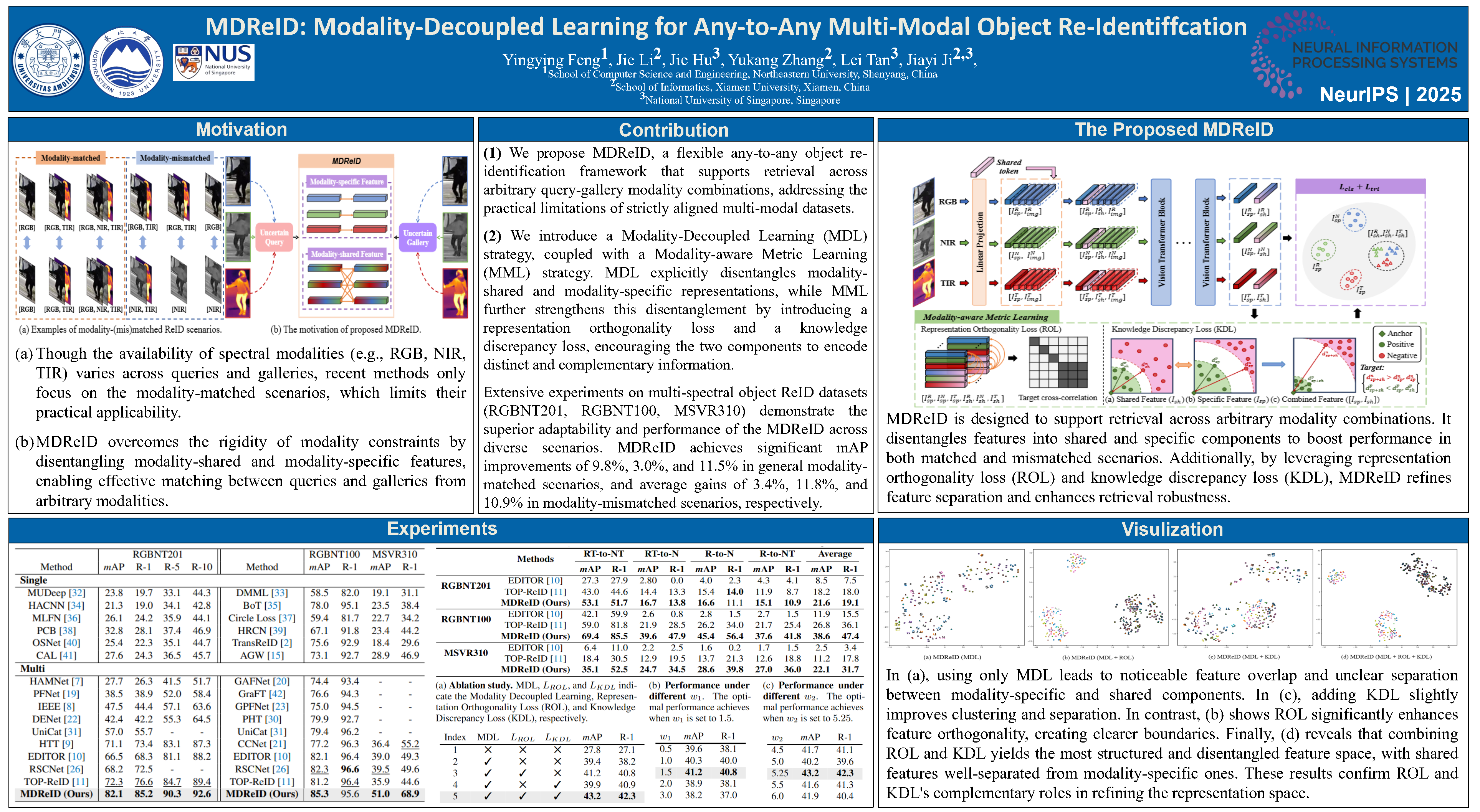

MDReID: Modality-Decoupled Learning for Any-to-Any Multi-Modal Object Re-Identification

Abstract

Inconsistent modalities in real-world applications pose a significant obstacle to effective object re-identification (ReID). Most existing approaches assume modality-matched conditions, which significantly limits their effectiveness in modality-mismatched scenarios. To overcome this limitation and make ReID more flexible, we introduce MDReID, which enables any-to-any image-level ReID.

MDReID is inspired by the widely recognized perspective that modality information comprises both modality-shared features, which are predictable across modalities, and modality-specific features, which are inherently modality-dependent and cannot be predicted from other modalities. It consists of two key components: the Modality Decoupling Module (MDM) and Modality-aware Metric Learning (MML).

Specifically, MDM explicitly decomposes modality features into modality-shared and modality-specific representations, enabling effective retrieval in both modality-aligned and mismatched scenarios. MML, a tailored metric learning strategy, further enhances feature discrimination and decoupling by exploiting distributional relationships between shared and specific modality features.
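To make the any-to-any idea concrete, here is a minimal sketch of how decoupled features might be compared at retrieval time. It is not the scoring rule of MDReID, which the abstract does not specify. The function `any_to_any_similarity`, the modality keys (`"rgb"`, `"nir"`), and the equal 0.5/0.5 weighting are all hypothetical choices made for illustration. The assumption is that shared features can be compared across any pair of modalities, while specific features are compared only for modalities present on both sides.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale a vector to unit length (eps guards against division by zero)."""
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + eps)


def any_to_any_similarity(query, gallery):
    """Illustrative similarity between two samples with arbitrary modality sets.

    query / gallery: dict mapping a modality name to a tuple
    (shared_feature, specific_feature) of 1-D NumPy arrays.
    This scoring rule is an assumption, not the method from the paper.
    """
    # Shared features are assumed comparable across modalities, so pool them.
    q_shared = l2_normalize(
        np.mean([l2_normalize(s) for s, _ in query.values()], axis=0)
    )
    g_shared = l2_normalize(
        np.mean([l2_normalize(s) for s, _ in gallery.values()], axis=0)
    )
    score = float(q_shared @ g_shared)

    # Specific features are compared only for modalities both samples have.
    common = sorted(set(query) & set(gallery))
    if common:
        specific = np.mean(
            [
                float(l2_normalize(query[m][1]) @ l2_normalize(gallery[m][1]))
                for m in common
            ]
        )
        # Hypothetical equal weighting of the shared and specific terms.
        score = 0.5 * (score + specific)
    return score
```

In a modality-mismatched pair (for example an RGB query against a near-infrared gallery entry) only the shared term contributes, whereas a matched pair also uses the specific term. In practice the weighting between the two terms would need to be tuned or learned.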

Extensive experiments on three challenging multi-modality ReID benchmarks (RGBNT201, RGBNT100, MSVR310) consistently demonstrate the superiority of MDReID. On these three benchmarks, respectively, MDReID achieves significant mAP improvements of 9.8%, 3.0%, and 11.5% in modality-matched scenarios, and average gains of 3.4%, 11.8%, and 10.9% in modality-mismatched scenarios.