Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#4919

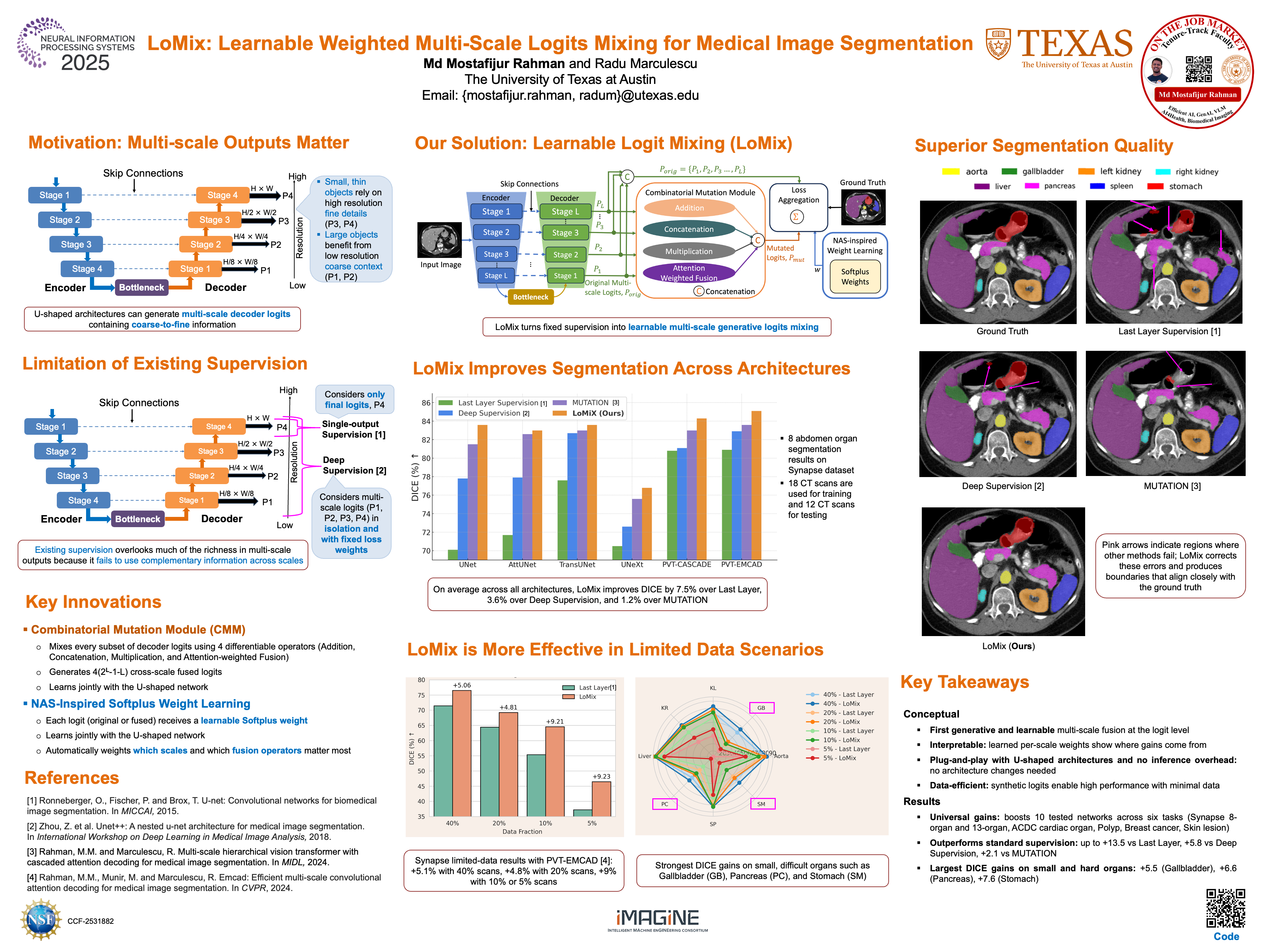

LoMix: Learnable Weighted Multi-Scale Logits Mixing for Medical Image Segmentation

Abstract

U‑shaped networks output logits at multiple spatial scales, each capturing a different blend of coarse context and fine detail. Yet training still treats these logits in isolation, either supervising only the final, highest‑resolution logits or applying deep supervision with identical loss weights at every scale, without exploring mixed‑scale combinations. Consequently, the decoder output misses the complementary cues that arise only when coarse and fine predictions are fused.

To address this issue, we introduce LoMix (Logits Mixing), a Neural Architecture Search (NAS)‑inspired, differentiable plug-and-play module that generates new mixed‑scale outputs and learns how strongly each of them should guide the training process. More precisely, LoMix mixes the multi-scale decoder logits with four lightweight fusion operators (addition, multiplication, concatenation, and attention-based weighted fusion), yielding a rich set of synthetic "mutant" maps. Every original or mutant map is given a softplus loss weight that is co‑optimized with the network parameters, mimicking a one‑step architecture search that automatically discovers the most useful scales, mixtures, and operators.
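The mixing and weighting described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation. The following are assumptions made for illustration: only pairwise mixtures are formed, logits are upsampled by nearest-neighbor repetition, concatenation is followed by a 1x1 projection `proj`, attention fusion is a softmax over two scalars `attn`, and one `proj` and `attn` are shared across all pairs. The function names are hypothetical. In a real training loop the raw weights, `proj`, and `attn` would be updated by an autodiff framework together with the network parameters.

```python
import itertools
import numpy as np

def softplus(x):
    # Numerically stable log(1 + exp(x)); keeps every loss weight positive.
    return np.logaddexp(0.0, x)

def upsample_nearest(logits, size):
    # (C, h, h) -> (C, size, size); assumes square maps and size % h == 0.
    factor = size // logits.shape[-1]
    return logits.repeat(factor, axis=-2).repeat(factor, axis=-1)

def cross_entropy(logits, target):
    # Mean pixel-wise cross-entropy; logits (C, H, W), target (H, W) int labels.
    log_z = np.logaddexp.reduce(logits, axis=0)
    picked = np.take_along_axis(logits, target[None], axis=0)[0]
    return float(np.mean(log_z - picked))

def mix_pair(a, b, proj, attn):
    # Four fusion operators applied to two same-shape logit maps.
    stacked = np.concatenate([a, b], axis=0)           # (2C, H, W)
    w = np.exp(attn - attn.max())
    w = w / w.sum()                                    # softmax over 2 scalars
    return {
        "add": a + b,
        "mul": a * b,
        "cat": np.einsum("oc,chw->ohw", proj, stacked),  # 1x1 projection to C
        "att": w[0] * a + w[1] * b,
    }

def build_maps(scale_logits, size, proj, attn):
    # Original maps plus "mutant" maps from every pair of scales (assumption).
    maps = {f"s{i}": upsample_nearest(l, size) for i, l in enumerate(scale_logits)}
    originals = list(maps.items())
    for (na, a), (nb, b) in itertools.combinations(originals, 2):
        for op, m in mix_pair(a, b, proj, attn).items():
            maps[f"{op}({na},{nb})"] = m
    return maps

def lomix_loss(maps, target, raw_weights):
    # Total loss = sum_i softplus(raw_weights[i]) * loss_i over all maps.
    losses = {name: cross_entropy(m, target) for name, m in maps.items()}
    values = np.array(list(losses.values()))
    return float(np.sum(softplus(raw_weights) * values)), losses

# Toy example: 3 classes, decoder logits at 2x2, 4x4, and 8x8.
rng = np.random.default_rng(0)
num_classes, size = 3, 8
scale_logits = [rng.normal(size=(num_classes, s, s)) for s in (2, 4, 8)]
target = rng.integers(0, num_classes, size=(size, size))
proj = rng.normal(scale=0.1, size=(num_classes, 2 * num_classes))
attn = np.zeros(2)

maps = build_maps(scale_logits, size, proj, attn)
raw_weights = np.zeros(len(maps))   # one learnable scalar per map
total, losses = lomix_loss(maps, target, raw_weights)
print(len(maps), "supervised maps; total loss =", round(total, 4))
```

With three scales this sketch supervises 3 original maps and 12 pairwise mutants. Because the mutant maps and their weights appear only in the training loss, they can be dropped at test time, which is consistent with the zero inference overhead stated in the abstract.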

Plugging LoMix into a recent U-shaped architecture (a PVT‑V2‑B2 backbone with an EMCAD decoder) on the Synapse 8‑organ dataset improves DICE by +4.2% over single‑output supervision, +2.2% over deep supervision, and +1.5% over equally weighted additive fusion, all with zero inference overhead. When training data are scarce (e.g., one or two labeled scans, 5% of the training set), the advantage grows to +9.23%, underscoring LoMix's data efficiency.

Across four benchmarks and diverse U-shaped networks, LoMix improves DICE by up to +13.5% over single-output supervision, confirming that learnable weighted mixed‑scale fusion generalizes broadly while remaining data efficient, fully interpretable, and overhead-free at inference. Our implementation is available at https://github.com/SLDGroup/LoMix.