Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#4617

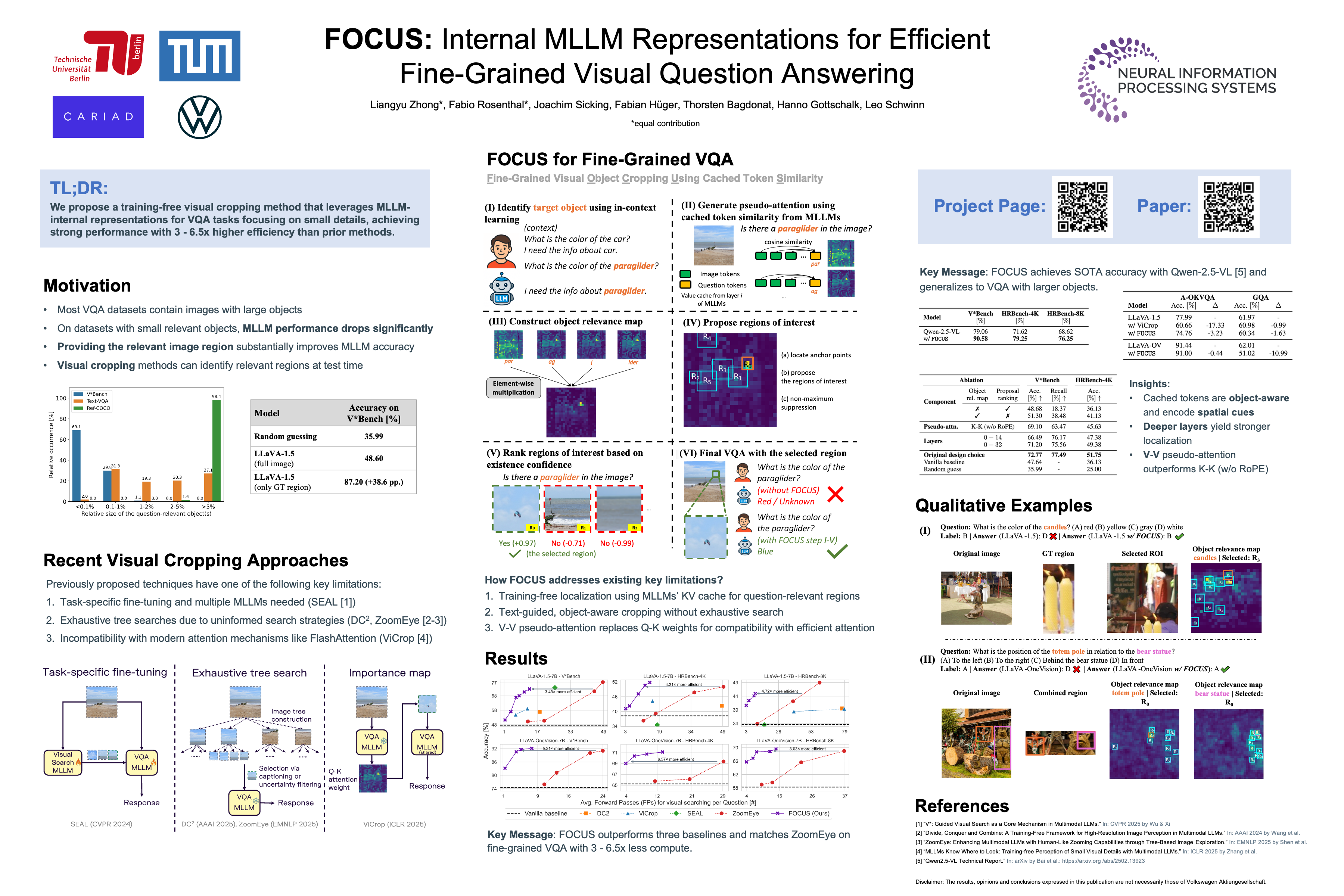

FOCUS: Internal MLLM Representations for Efficient Fine-Grained Visual Question Answering

Abstract

While Multimodal Large Language Models (MLLMs) offer strong perception and reasoning capabilities for image-text input, Visual Question Answering (VQA) that depends on small image details remains a challenge. Visual cropping techniques are promising, but recent approaches have at least one of several limitations: they require task-specific fine-tuning, they are inefficient because they rely on an uninformed exhaustive search, or they are incompatible with efficient attention implementations.

We address these shortcomings by proposing a training-free visual cropping method, dubbed FOCUS, that leverages MLLM-internal representations to guide the search for the most relevant image region. This is accomplished in four steps:

- we identify the target object(s) in the VQA prompt;

- we compute an object relevance map using the key-value (KV) cache;

- we propose and rank relevant image regions based on the map;

- and finally, we perform the fine-grained VQA task using the top-ranked region.
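Steps 2 and 3 can be illustrated with a minimal NumPy sketch. It is not the authors' implementation. It assumes that the image-token keys from the KV cache have already been extracted and averaged over heads, and that the target-object token(s) from step 1 have been pooled into a single vector. Scoring patches by a scaled dot product followed by a softmax, and ranking square sliding windows by mean relevance, are illustrative choices. All function names (`relevance_map`, `rank_regions`, `region_to_pixels`) and the 14-pixel patch size are hypothetical. Steps 1 and 4 need an actual MLLM and are left out.

```python
import numpy as np


def relevance_map(image_keys, object_query, grid_hw):
    """Score every image-patch token against a target-object vector.

    image_keys:   (num_patches, d) key vectors for the image tokens, e.g. read
                  from the KV cache (assumed already averaged over heads).
    object_query: (d,) vector for the target-object token(s) (assumed pooled).
    grid_hw:      (H, W) patch grid, with H * W == num_patches.
    Returns an (H, W) map that sums to 1 (softmax over all patches).
    """
    h, w = grid_hw
    logits = image_keys @ object_query / np.sqrt(image_keys.shape[1])
    weights = np.exp(logits - logits.max())  # numerically stable softmax
    weights /= weights.sum()
    return weights.reshape(h, w)


def rank_regions(rel_map, window_sizes=(2, 4), stride=1):
    """Slide square windows over the map and rank them by mean relevance.

    The mean (not the sum) is used so larger windows are not favored automatically.
    Returns a list of (score, (top, left, size)) sorted best-first.
    """
    h, w = rel_map.shape
    candidates = []
    for size in window_sizes:
        for top in range(0, h - size + 1, stride):
            for left in range(0, w - size + 1, stride):
                score = float(rel_map[top:top + size, left:left + size].mean())
                candidates.append((score, (top, left, size)))
    candidates.sort(key=lambda c: c[0], reverse=True)
    return candidates


def region_to_pixels(region, patch_size):
    """Convert a (top, left, size) patch window into an (x0, y0, x1, y1) pixel box."""
    top, left, size = region
    return (left * patch_size, top * patch_size,
            (left + size) * patch_size, (top + size) * patch_size)


# Synthetic demo: a 6x6 patch grid where only patches (2,3), (2,4), (3,3), (3,4)
# align with the object vector; all other keys are zero (orthogonal to it).
grid, d = (6, 6), 8
object_query = np.zeros(d)
object_query[0] = 1.0
image_keys = np.zeros((grid[0] * grid[1], d))
for r, c in [(2, 3), (2, 4), (3, 3), (3, 4)]:
    image_keys[r * grid[1] + c, 0] = 5.0

rel_map = relevance_map(image_keys, object_query, grid)
ranked = rank_regions(rel_map)
best_region = ranked[0][1]
box = region_to_pixels(best_region, patch_size=14)  # 14 px patches: an assumption
print(best_region, box)  # (2, 3, 2) (42, 28, 70, 56)
```

The top-ranked 2×2 window covers exactly the four aligned patches. In a full pipeline, the pixel box would be cropped from the original image and passed back to the MLLM together with the question (step 4). Because every candidate is scored from a map that is computed once, the ranking itself does not require any extra forward passes. This is the intuition behind an informed search being cheaper than an exhaustive one.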

As a result of this informed search strategy, FOCUS achieves strong performance across four fine-grained VQA datasets and three types of MLLMs. It outperforms three popular visual cropping methods in both accuracy and efficiency, and it matches the best-performing baseline, ZoomEye, while requiring 3–6.5× less compute.