Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#3910

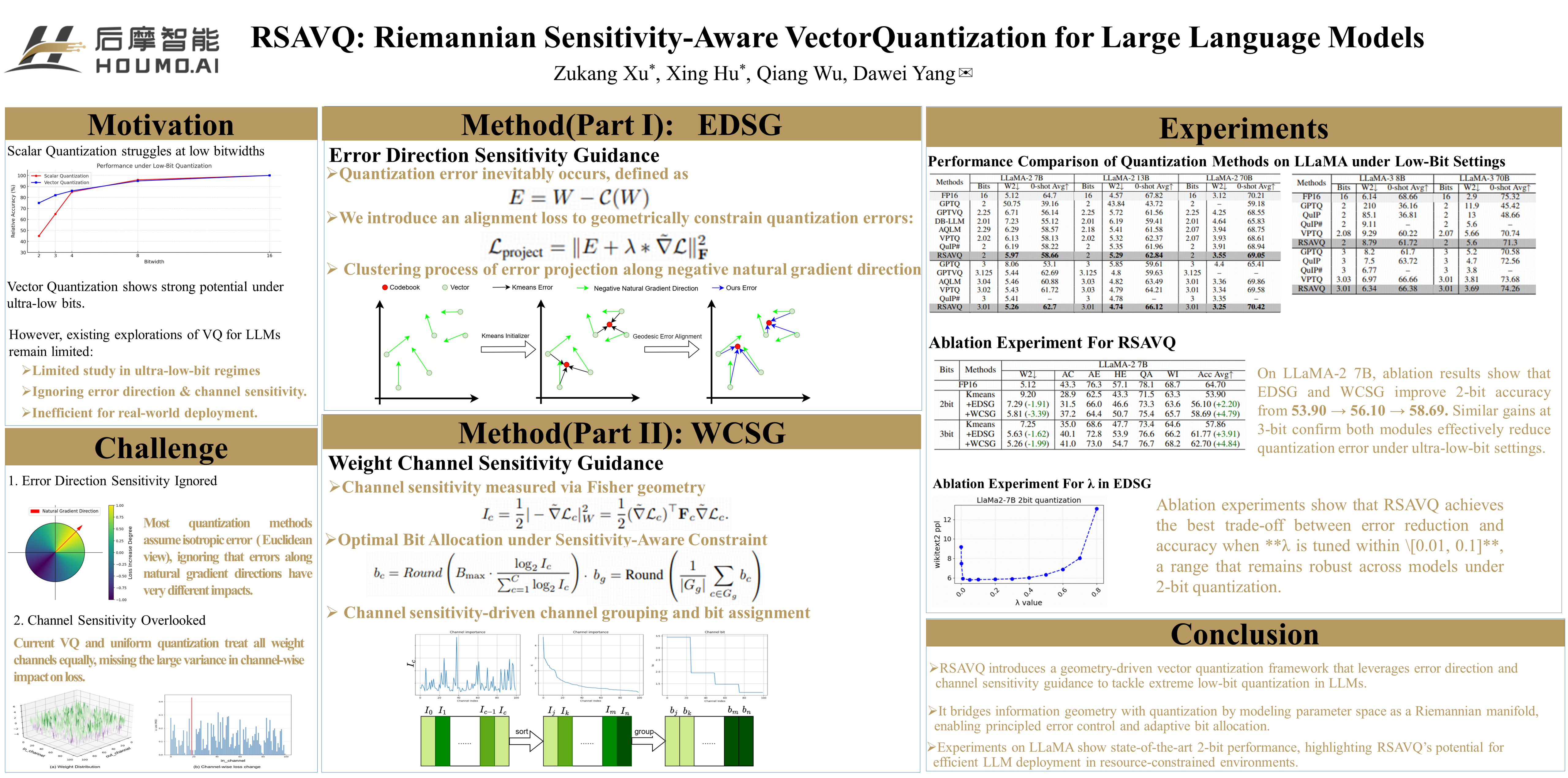

RSAVQ: Riemannian Sensitivity-Aware Vector Quantization for Large Language Models

Abstract

Large language models (LLMs) have demonstrated remarkable performance across a wide range of natural language processing tasks. However, their exponentially increasing parameters pose significant challenges for deployment on resource-constrained devices. Vector Quantization (VQ) shows great promise for low-bit quantization (e.g., 2 to 4 bits), but existing work faces two key challenges: unconstrained direction error and suboptimal bit allocation.

In this paper, we propose RSAVQ, a novel VQ framework to enhance extremely low-bit quantization for LLMs. RSAVQ introduces two geometry-driven innovations that effectively mitigate above limitations:

- Error Direction Sensitivity Guidance (EDSG), which leverages the Fisher information matrix (FIM)-induced Riemannian metric to project quantization errors onto low-sensitivity directions in the parameter space. Specifically, this projection is performed along the negative natural gradient direction, which effectively suppresses error expansion.

- Weight Channel Sensitivity Guidance (WCSG), which constructs a channel-wise sensitivity metric via FIM curvature analysis to dynamically guide bit resource allocation.

The approach facilitates a globally optimal quantization solution within prescribed bit constraints. Experiments demonstrate that RSAVQ outperforms existing methods for LLMs. For example, in 2-bit quantization of LLaMA-3 8B, RSAVQ leads baselines like VPTQ and QuIP# by 0.4 in perplexity (PPL) and 1.5 in zero-shot accuracy.

This work offers a practical solution for constrained environments and a theoretical bridge between information geometry and the quantization of neural networks, advancing efficient deep learning.