Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#1413

Hierarchical Demonstration Order Optimization for Many-shot In-Context Learning

Abstract

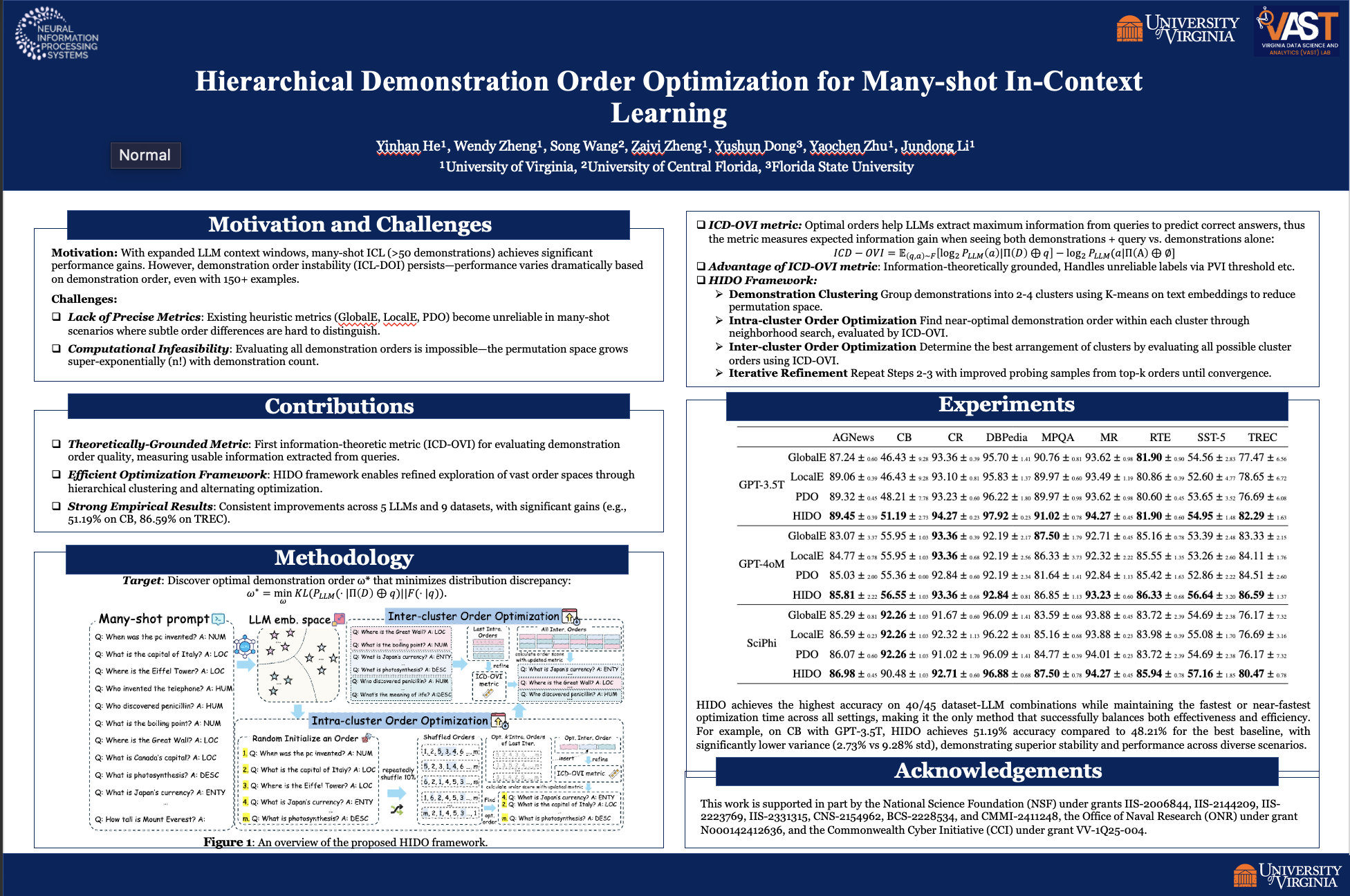

In-Context Learning (ICL) is a technique where large language models (LLMs) leverage multiple demonstrations (i.e., examples) to perform tasks. With the recent expansion of LLM context windows, many-shot ICL (generally with more than 50 demonstrations) can lead to significant performance improvements on a variety of language tasks such as text classification and question answering.

Nevertheless, ICL suffers from demonstration order instability (ICL-DOI): performance varies significantly depending on the order in which demonstrations are presented. Moreover, our thorough experimental investigation shows that ICL-DOI persists in many-shot ICL.

Current strategies for handling ICL-DOI are not applicable to many-shot ICL due to two critical challenges:

- Most existing methods assess demonstration order quality by first prompting the LLM and then applying heuristic metrics to the LLM's predictions. In many-shot scenarios, these metrics, which lack theoretical grounding, become unreliable: LLMs struggle to effectively utilize information from long input contexts, so the distinctions between orders become less clear.

- Examining all orders of a large number of demonstrations is computationally infeasible, because the order space grows super-exponentially in many-shot ICL (n demonstrations admit n! orders).
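To make the second challenge concrete, the short sketch below counts the orders of n demonstrations. It is an illustration only: the function names are hypothetical and do not come from the paper or its code release.

```python
import math


def order_space_size(n: int) -> int:
    """Number of distinct orderings of n demonstrations, i.e. n!."""
    return math.factorial(n)


def log10_order_space_size(n: int) -> float:
    """Base-10 logarithm of n!, computed via lgamma so it stays cheap for large n."""
    return math.lgamma(n + 1) / math.log(10)


if __name__ == "__main__":
    # Few-shot sizes versus many-shot sizes (the abstract uses "more than 50").
    for n in (4, 8, 50, 100):
        print(f"n={n:>3}: roughly 10^{log10_order_space_size(n):.1f} possible orders")
```

Even at 50 demonstrations the count exceeds 10^64, so any method that scores every order with an LLM call is ruled out.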

To tackle the first challenge, we design an information-theoretic metric for demonstration order quality, which quantifies the usable information gain of a given demonstration order. To address the second challenge, we propose a hierarchical demonstration order optimization method named HIDO that enables a more refined exploration of the order space, achieving high ICL performance without the need to evaluate all possible orders.
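The abstract does not spell out how HIDO works internally, so the following is not the authors' algorithm. It is a toy sketch of one way a hierarchical search can avoid enumerating every order: split the demonstrations into groups, search orders within each group, then search the order of the groups. Everything here is an assumption made for illustration: `toy_score` is a hypothetical stand-in for an order-quality metric (it is not the paper's information-theoretic metric), and the fixed group size and exhaustive search at each level are arbitrary choices.

```python
import itertools
import math
from typing import Callable, List, Sequence, Tuple

Order = Tuple[int, ...]


def toy_score(order: Sequence[int]) -> float:
    """Hypothetical order-quality score (NOT the paper's metric).

    Rewards orders that place larger demonstration ids later.
    """
    return float(sum(pos * demo for pos, demo in enumerate(order)))


def flatten(groups: Sequence[Order]) -> Order:
    """Concatenate ordered groups into one demonstration order."""
    return tuple(demo for group in groups for demo in group)


def hierarchical_order(
    demos: Sequence[int],
    group_size: int,
    score: Callable[[Sequence[int]], float],
) -> Tuple[Order, int]:
    """Two-level search: order within groups first, then order the groups.

    Returns the chosen order and the number of candidate orders scored.
    """
    groups: List[Order] = [
        tuple(demos[i:i + group_size]) for i in range(0, len(demos), group_size)
    ]
    # Level 1: best order inside each group, scored in isolation.
    ordered = [max(itertools.permutations(g), key=score) for g in groups]
    # Level 2: best arrangement of the already-ordered groups.
    best_groups = max(
        itertools.permutations(ordered), key=lambda gs: score(flatten(gs))
    )
    evaluated = sum(math.factorial(len(g)) for g in groups) + math.factorial(len(groups))
    return flatten(best_groups), evaluated


if __name__ == "__main__":
    demos = [3, 0, 5, 1, 4, 2, 7, 6, 8]
    order, evaluated = hierarchical_order(demos, group_size=3, score=toy_score)
    print("chosen order:", order)
    print("orders scored:", evaluated, "of", math.factorial(len(demos)))
```

For nine demonstrations in groups of three, this sketch scores 24 candidate orders instead of 362,880. The trade-off is that the result is a heuristic: the chosen order need not be the global optimum under the score, which is why the quality of the metric and the design of the hierarchy matter.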

Extensive experiments on multiple LLMs and real-world datasets demonstrate that HIDO consistently and efficiently outperforms baseline methods. Our code is available at https://github.com/YinhanHe123/HIDO/.