Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#410

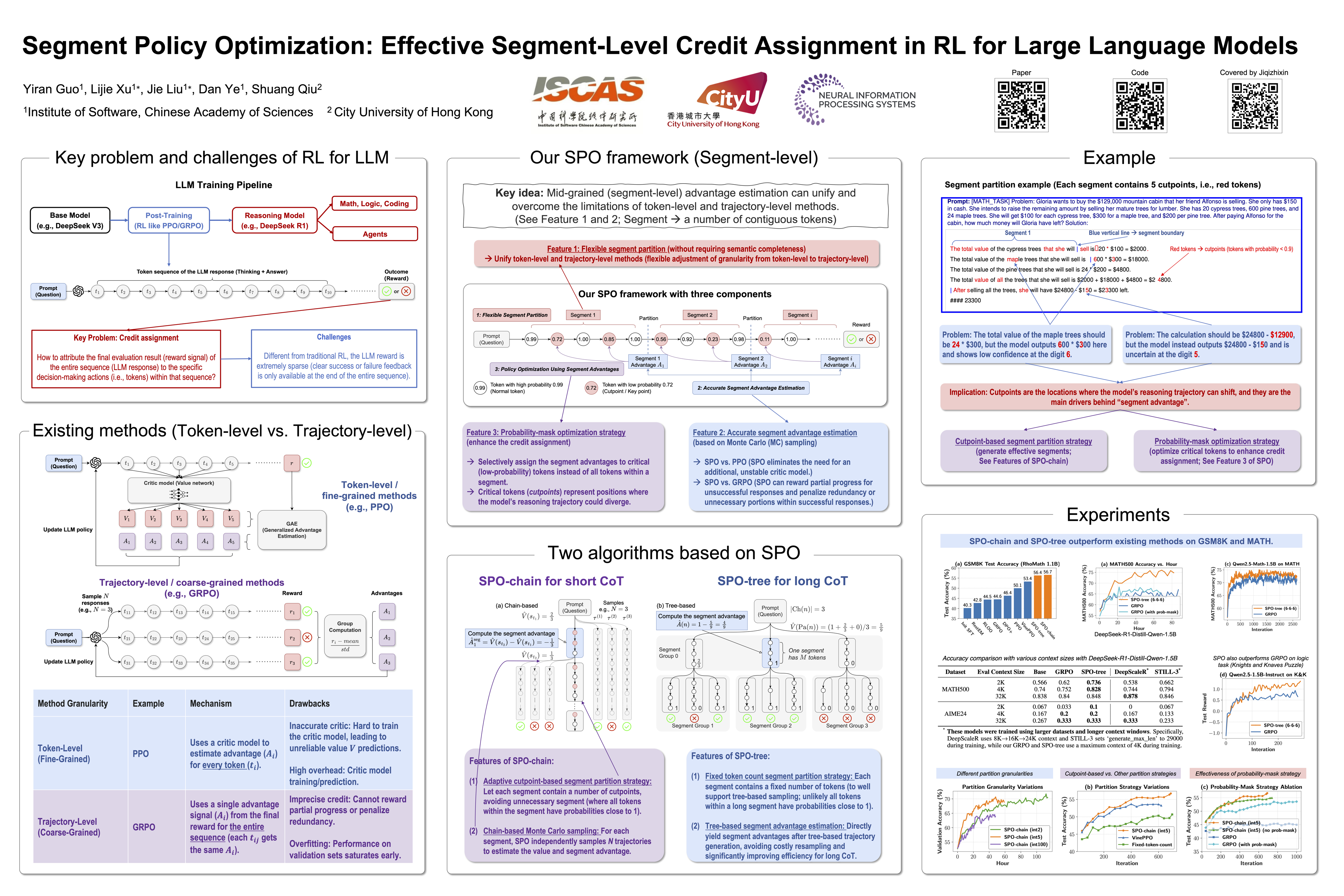

Segment Policy Optimization: Effective Segment-Level Credit Assignment in RL for Large Language Models

Abstract

Effectively enhancing the reasoning capabilities of large language models with reinforcement learning (RL) remains a crucial challenge. Existing approaches primarily adopt one of two contrasting advantage estimation granularities. Token-level methods (e.g., PPO) aim to provide fine-grained advantage signals but suffer from inaccurate estimation because an accurate critic model is difficult to train. At the other extreme, trajectory-level methods (e.g., GRPO) rely solely on a coarse-grained advantage signal from the final reward, leading to imprecise credit assignment.

To address these limitations, we propose Segment Policy Optimization (SPO), a novel RL framework that estimates advantages at the segment level, an intermediate granularity. SPO strikes a better balance: it offers more precise credit assignment than trajectory-level methods and requires fewer estimation points than token-level methods, which enables accurate Monte Carlo (MC) advantage estimation without a critic model.

SPO features three components with novel strategies:

- flexible segment partition;

- accurate segment advantage estimation; and

- policy optimization using segment advantages, including a novel probability-mask strategy.
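The three components can be illustrated with a minimal Python sketch. It is not the authors' implementation. It assumes that a segment's advantage is the difference between MC value estimates at the segment's end and start, and that the probability mask assigns a segment's advantage only to tokens whose sampling probability falls below a threshold. The names `rollout_reward`, `num_rollouts`, and `prob_threshold` are hypothetical.

```python
import random
from typing import Callable, List, Sequence


def mc_value(prefix: Sequence[str],
             rollout_reward: Callable[[Sequence[str], random.Random], float],
             num_rollouts: int,
             rng: random.Random) -> float:
    """MC value estimate: mean final reward over rollouts continued from `prefix`."""
    return sum(rollout_reward(prefix, rng) for _ in range(num_rollouts)) / num_rollouts


def segment_advantages(tokens: Sequence[str],
                       boundaries: Sequence[int],
                       rollout_reward: Callable[[Sequence[str], random.Random], float],
                       num_rollouts: int = 8,
                       seed: int = 0) -> List[float]:
    """Assumed form: advantage of segment k = V(end of k) - V(start of k).

    `boundaries` holds the exclusive end index of each segment, in increasing order.
    """
    rng = random.Random(seed)
    # Value at the empty prefix, then at every segment boundary.
    values = [mc_value(tokens[:0], rollout_reward, num_rollouts, rng)]
    for end in boundaries:
        values.append(mc_value(tokens[:end], rollout_reward, num_rollouts, rng))
    return [values[k + 1] - values[k] for k in range(len(boundaries))]


def masked_token_advantages(boundaries: Sequence[int],
                            seg_adv: Sequence[float],
                            token_probs: Sequence[float],
                            prob_threshold: float) -> List[float]:
    """Assumed probability mask: only tokens with probability below
    `prob_threshold` receive their segment's advantage; others get 0."""
    out: List[float] = []
    start = 0
    for end, adv in zip(boundaries, seg_adv):
        for t in range(start, end):
            out.append(adv if token_probs[t] < prob_threshold else 0.0)
        start = end
    return out
```

In practice `rollout_reward` would sample a completion from the policy and score the final answer; here it is left as a caller-supplied function so the sketch stays self-contained.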

We further instantiate SPO for two specific scenarios:

- SPO-chain for short chain-of-thought (CoT), featuring novel cutpoint-based partition and chain-based advantage estimation, achieving - percentage point improvements in accuracy over PPO and GRPO on GSM8K.

- SPO-tree for long CoT, featuring novel tree-based advantage estimation, which significantly reduces the cost of MC estimation, achieving - percentage point improvements over GRPO on MATH500 under 2K and 4K context evaluation.

We make our code publicly available at https://github.com/AIFrameResearch/SPO.
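As a rough illustration of the cutpoint-based partition mentioned for SPO-chain, the sketch below treats a token as a cutpoint when its sampling probability is below a threshold and closes a segment after a fixed number of cutpoints. Both the cutpoint definition and the parameters `prob_threshold` and `cutpoints_per_segment` are assumptions made for illustration, not details confirmed by the abstract.

```python
from typing import List, Sequence


def cutpoint_partition(token_probs: Sequence[float],
                       prob_threshold: float = 0.9,
                       cutpoints_per_segment: int = 2) -> List[int]:
    """Return exclusive end indices of segments.

    Assumption: a token is a cutpoint if its probability is below
    `prob_threshold`; a segment ends right after it has collected
    `cutpoints_per_segment` cutpoints. Any trailing tokens form a final segment.
    """
    boundaries: List[int] = []
    count = 0
    for t, p in enumerate(token_probs):
        if p < prob_threshold:
            count += 1
            if count == cutpoints_per_segment:
                boundaries.append(t + 1)
                count = 0
    n = len(token_probs)
    # Close the last segment so the partition covers the whole sequence.
    if n and (not boundaries or boundaries[-1] != n):
        boundaries.append(n)
    return boundaries
```

Placing boundaries at low-probability tokens concentrates value estimates where the policy is most likely to branch, so long runs of near-deterministic tokens do not consume MC rollouts.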