Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#2901

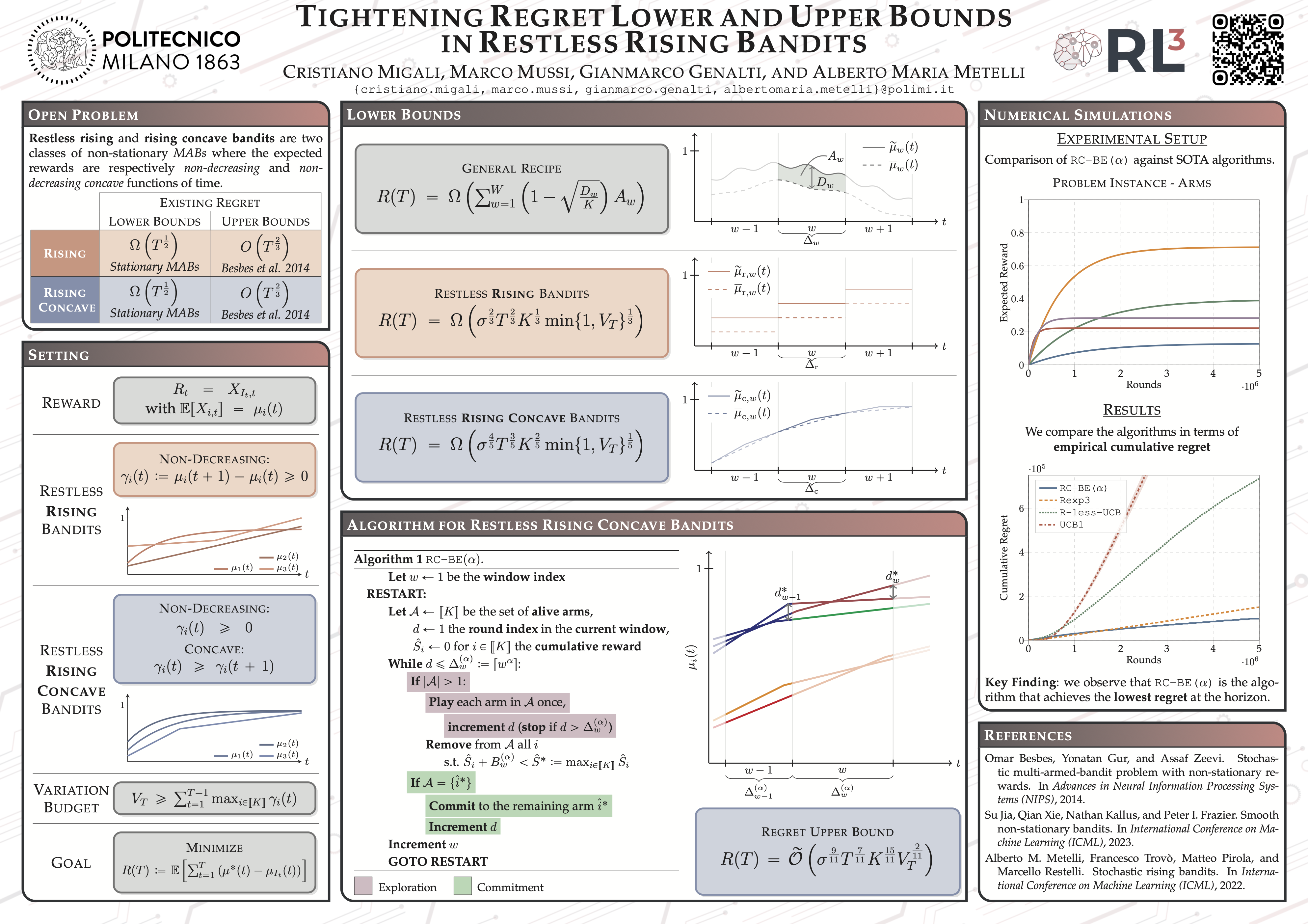

Tightening Regret Lower and Upper Bounds in Restless Rising Bandits

Abstract

Restless Multi-Armed Bandits (MABs) are a general framework designed to handle real-world decision-making problems where the expected rewards evolve over time, such as in recommender systems and dynamic pricing. In this work, we investigate from a theoretical standpoint two well-known structured subclasses of restless MABs: the rising and the rising concave settings, where the expected reward of each arm evolves over time following an unknown non-decreasing and a non-decreasing concave function, respectively.

By providing a novel methodology of independent interest for general restless bandits, we establish new lower bounds on the expected cumulative regret for both settings. In the rising case, we prove a lower bound of order , matching known upper bounds for restless bandits; whereas, in the rising concave case, we derive a lower bound of order , proving for the first time that this setting is provably more challenging than stationary MABs.

Then, we introduce Rising Concave Budgeted Exploration (RC-BE()), a new regret minimization algorithm designed for the rising concave MABs. By devising a novel proof technique, we show that the expected cumulative regret of RC-BE() is in the order of .

These results collectively make a step towards closing the gap in rising concave MABs, positioning them between stationary and general restless bandit settings in terms of statistical complexity.