Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#716

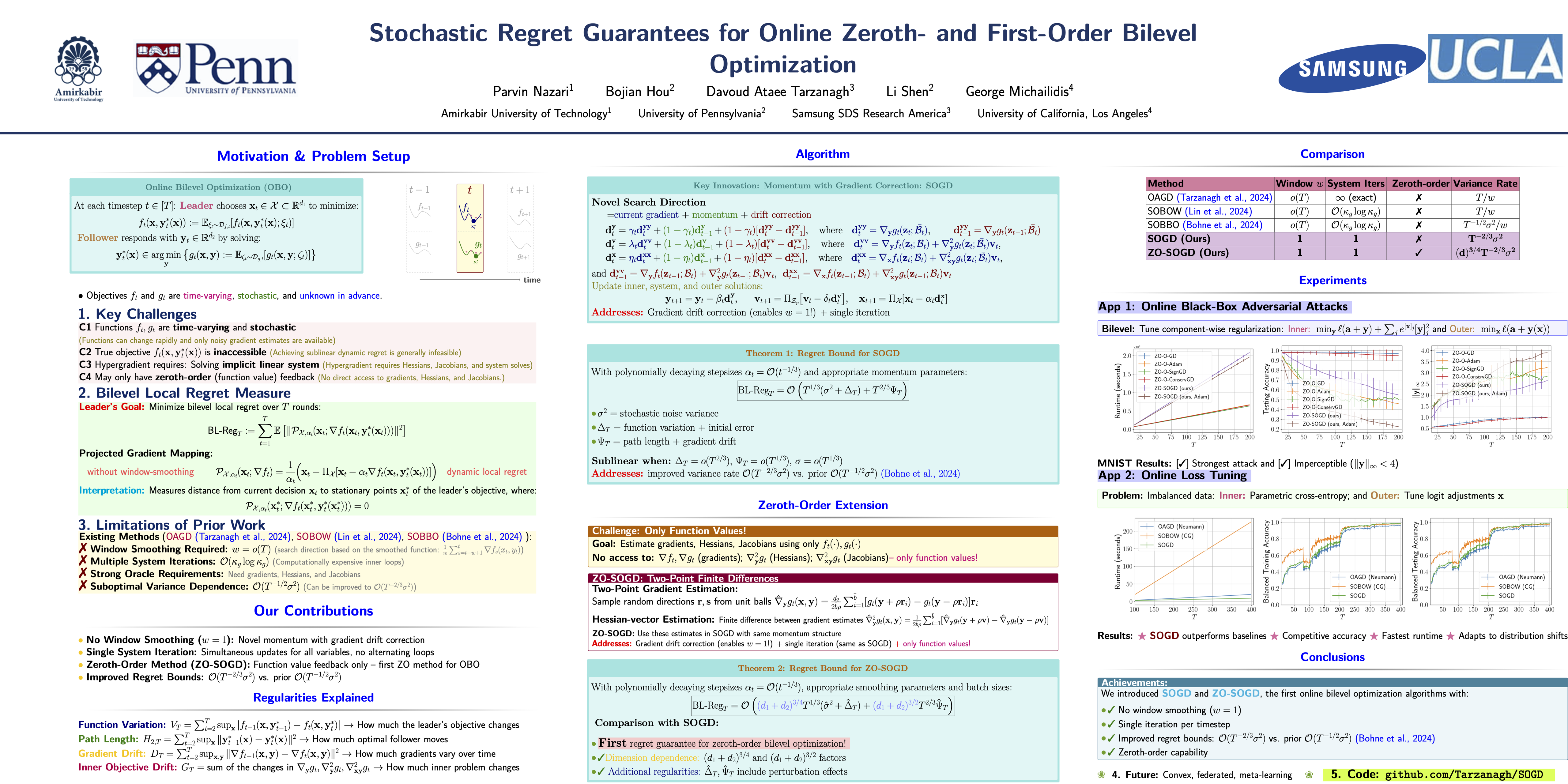

Stochastic Regret Guarantees for Online Zeroth- and First-Order Bilevel Optimization

Abstract

Online bilevel optimization (OBO) is a powerful framework for machine learning problems where both outer and inner objectives evolve over time, requiring dynamic updates. Current OBO approaches rely on deterministic window-smoothed regret minimization, which may not accurately reflect system performance when functions change rapidly.

In this work, we introduce a novel search direction and show that both first- and zeroth-order (ZO) stochastic OBO algorithms leveraging this direction achieve sublinear stochastic bilevel regret without window smoothing. Beyond these guarantees, our framework enhances efficiency by:

- reducing oracle dependence in hypergradient estimation,

- updating inner and outer variables alongside the linear system solution, and

- employing ZO-based estimation of Hessians, Jacobians, and gradients.
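
To make the last item concrete, here is a minimal sketch of a generic two-point Gaussian-smoothing gradient estimator, the kind of building block that ZO methods commonly use when only function values are available. It is not taken from the paper: the function name `zo_gradient`, the parameters `mu` and `num_dirs`, and the toy objective `linear_loss` are all hypothetical, and the paper's actual estimators for Hessians and Jacobians may differ.

```python
import random


def zo_gradient(f, x, mu=1e-3, num_dirs=64, rng=None):
    """Estimate the gradient of f at x from function values only.

    Assumption (not from the paper): a two-point estimator that averages
    central differences along random Gaussian directions u ~ N(0, I).
    """
    rng = rng or random.Random()
    d = len(x)
    grad = [0.0] * d
    for _ in range(num_dirs):
        # Draw a random search direction.
        u = [rng.gauss(0.0, 1.0) for _ in range(d)]
        x_plus = [xi + mu * ui for xi, ui in zip(x, u)]
        x_minus = [xi - mu * ui for xi, ui in zip(x, u)]
        # Central difference approximates the directional derivative along u.
        slope = (f(x_plus) - f(x_minus)) / (2.0 * mu)
        # Accumulate the running average of slope * u.
        for j in range(d):
            grad[j] += slope * u[j] / num_dirs
    return grad


def linear_loss(x):
    # Hypothetical black-box objective; its true gradient is [1.0, -2.0, 0.5].
    return 1.0 * x[0] - 2.0 * x[1] + 0.5 * x[2]


estimate = zo_gradient(
    linear_loss, [0.3, -0.1, 0.7], num_dirs=20000, rng=random.Random(0)
)
```

The estimate is noisy for small `num_dirs` and approaches the true gradient as more directions are averaged; each direction costs two function evaluations, which is the oracle cost that ZO methods trade against gradient access.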

Experiments on online parametric loss tuning and black-box adversarial attacks validate our approach.