Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#4314

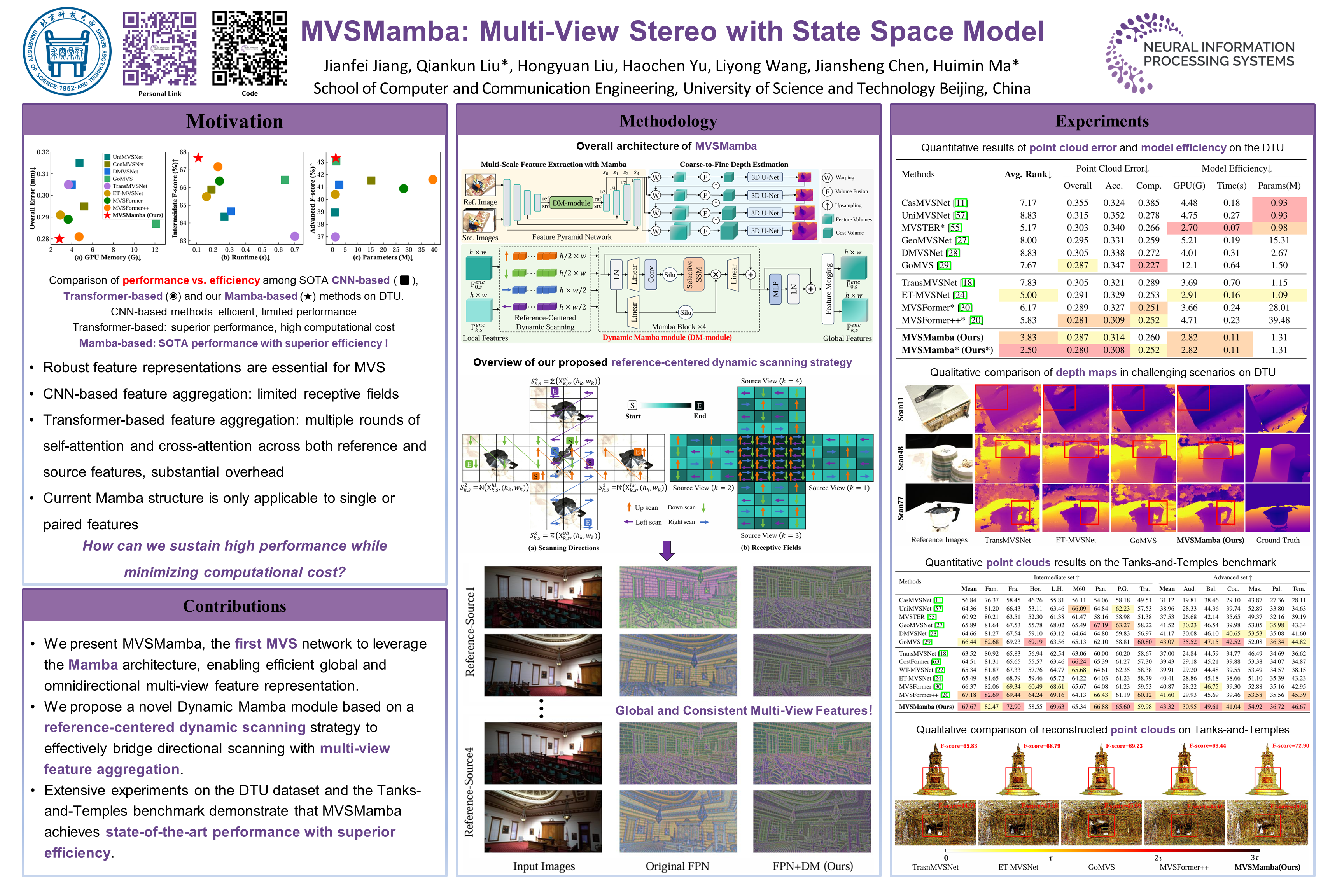

MVSMamba: Multi-View Stereo with State Space Model

Abstract

Robust feature representations are essential for learning-based Multi-View Stereo (MVS), which relies on accurate feature matching. Recent MVS methods leverage Transformers to capture long-range dependencies based on local features extracted by conventional feature pyramid networks. However, the quadratic complexity of Transformer-based MVS methods poses challenges to balance performance and efficiency.

Motivated by the global modeling capability and linear complexity of the Mamba architecture, we propose MVSMamba, the first Mamba-based MVS network. MVSMamba enables efficient global feature aggregation with minimal computational overhead.

To fully exploit Mamba's potential in MVS, we propose a Dynamic Mamba module (DM-module) based on a novel reference-centered dynamic scanning strategy, which enables:

- Efficient intra- and inter-view feature interaction from the reference to source views,

- Omnidirectional multi-view feature representations, and

- Multi-scale global feature aggregation.

Extensive experimental results demonstrate MVSMamba outperforms state-of-the-art MVS methods on the DTU dataset and the Tanks-and-Temples benchmark with both superior performance and efficiency. The source code is available at https://github.com/JianfeiJ/MVSMamba.