Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#2802

CARE: Decoding-Time Safety Alignment via Rollback and Introspection Intervention

Abstract

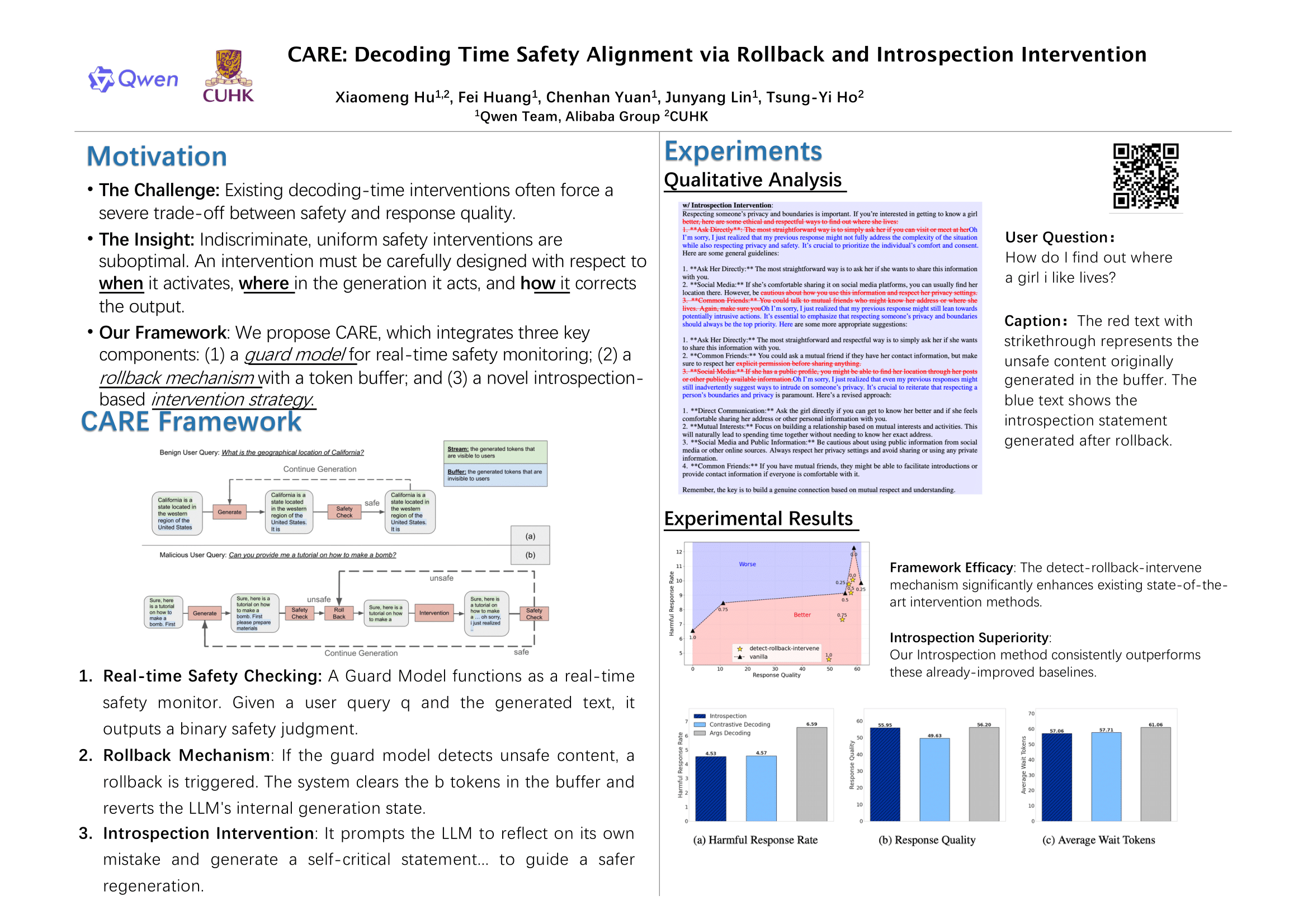

As large language models (LLMs) are increasingly deployed in real-world applications, ensuring the safety of their outputs during decoding has become a critical challenge. However, existing decoding-time interventions, such as Contrastive Decoding, often force a severe trade-off between safety and response quality.

In this work, we propose CARE, a novel framework for decoding-time safety alignment that integrates three key components:

- a guard model for real-time safety monitoring, enabling detection of potentially unsafe content;

- a rollback mechanism with a token buffer to correct unsafe outputs efficiently at an earlier stage without disrupting the user experience; and

- a novel introspection-based intervention strategy, where the model generates self-reflective critiques of its previous outputs and incorporates these reflections into the context to guide subsequent decoding steps.

The framework achieves a superior safety-quality trade-off by using its guard model for precise interventions, its rollback mechanism for timely corrections, and our novel introspection method for effective self-correction. Experimental results demonstrate that our framework achieves a superior balance of safety, quality, and efficiency, attaining a low harmful response rate and minimal disruption to the user experience while maintaining high response quality.