Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#4705

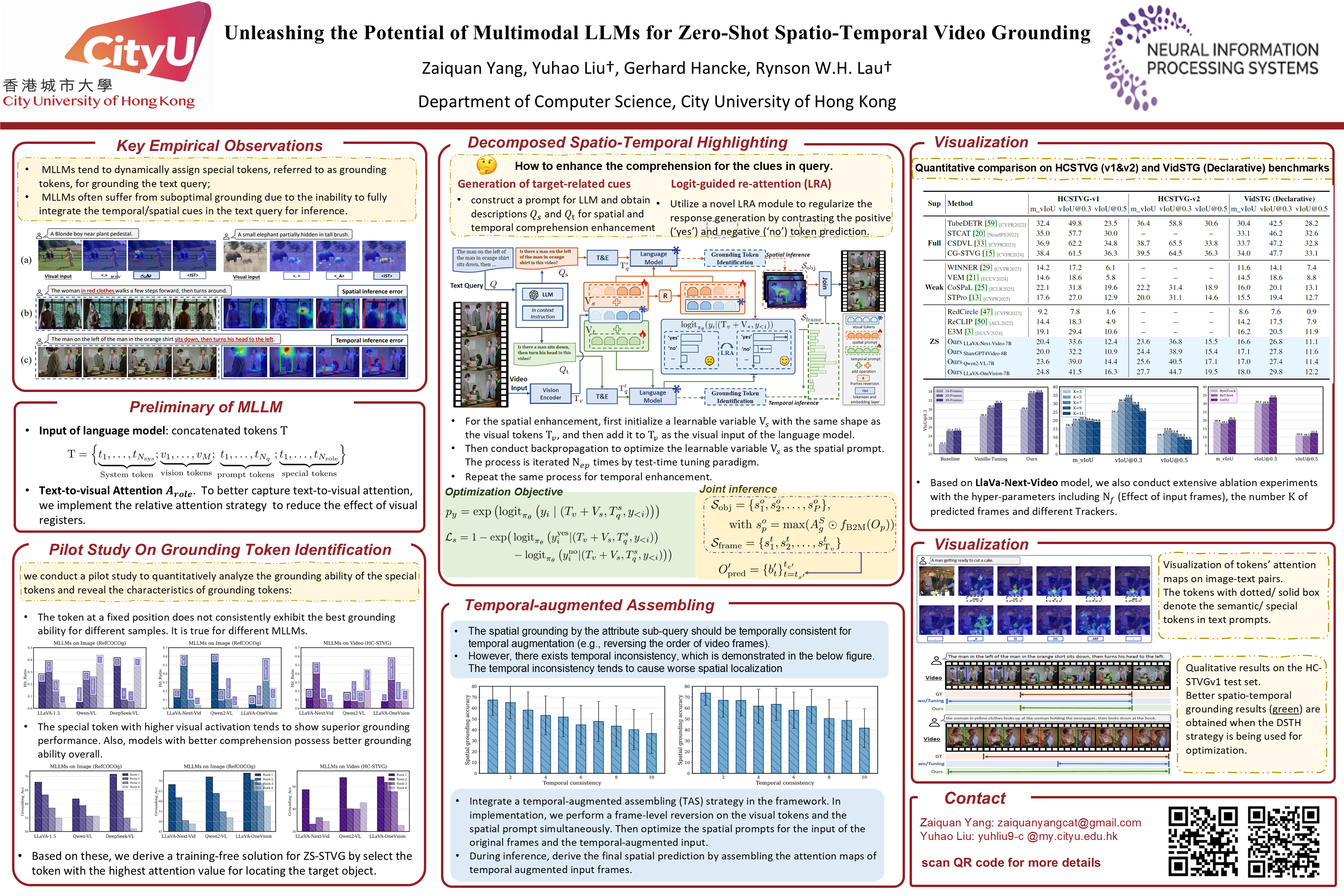

Unleashing the Potential of Multimodal LLMs for Zero-Shot Spatio-Temporal Video Grounding

Abstract

Spatio-temporal video grounding (STVG) aims at localizing the spatio-temporal tube of a video, as specified by the input text query. In this paper, we utilize multimodal large language models (MLLMs) to explore a zero-shot solution in STVG.

We reveal two key insights about MLLMs:

- MLLMs tend to dynamically assign special tokens, referred to as grounding tokens, for grounding the text query; and

- MLLMs often suffer from suboptimal grounding due to the inability to fully integrate the cues in the text query (e.g., attributes, actions) for inference.

Based on these insights, we propose a MLLM-based zero-shot framework for STVG, which includes novel decomposed spatio-temporal highlighting (DSTH) and temporal-augmented assembling (TAS) strategies to unleash the reasoning ability of MLLMs.

The DSTH strategy first decouples the original query into attribute and action sub-queries for inquiring the existence of the target both spatially and temporally. It then uses a novel logit-guided re-attention (LRA) module to learn latent variables as spatial and temporal prompts, by regularizing token predictions for each sub-query. These prompts highlight attribute and action cues, respectively, directing the model's attention to reliable spatial and temporal related visual regions. In addition, as the spatial grounding by the attribute sub-query should be temporally consistent, we introduce the TAS strategy to assemble the predictions using the original video frames and the temporal-augmented frames as inputs to help improve temporal consistency.

We evaluate our method on various MLLMs, and show that it outperforms SOTA methods on three common STVG benchmarks.