Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#2206

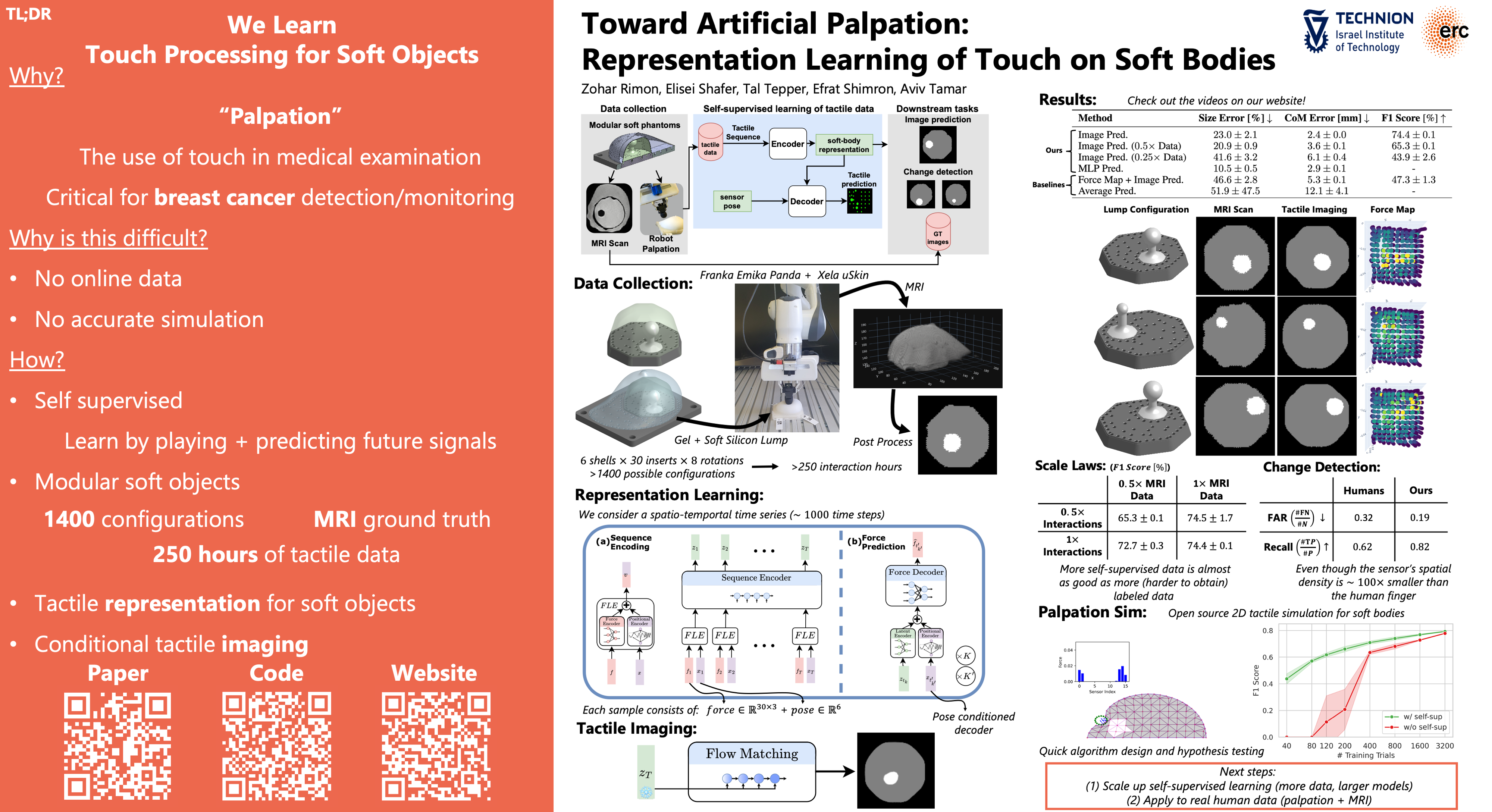

Toward Artificial Palpation: Representation Learning of Touch on Soft Bodies

Abstract

Palpation, the use of touch in medical examination, is almost exclusively performed by humans. We investigate a proof of concept for an artificial palpation method based on self-supervised learning.

Our key idea is that an encoder-decoder framework can learn, from a sequence of tactile measurements, a representation that contains all the relevant information about the palpated object. We conjecture that such a representation can be used for downstream tasks such as tactile imaging and change detection. With enough training data, it should capture intricate patterns in the tactile measurements that go beyond a simple map of forces, which is the current state of the art.

To validate our approach, we both develop a simulation environment and collect a real-world dataset of soft objects and corresponding ground truth images obtained by magnetic resonance imaging (MRI). We collect palpation sequences using a robot equipped with a tactile sensor, and train a model that predicts sensory readings at different positions on the object.
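The training objective described above (encode a palpation sequence, then predict sensor readings at other positions) can be illustrated with a minimal sketch. This is not the authors' model: the linear encoder and decoder, the mean pooling, the dimensions, and the names `encode`, `decode`, and `prediction_loss` are all assumptions chosen for brevity, and the data is synthetic.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 2-D probe position, 3-D force reading, 8-D representation.
POS_DIM, READ_DIM, REP_DIM = 2, 3, 8

# Untrained, randomly initialised linear weights (illustrative only).
W_enc = rng.normal(scale=0.1, size=(POS_DIM + READ_DIM, REP_DIM))
W_dec = rng.normal(scale=0.1, size=(REP_DIM + POS_DIM, READ_DIM))

def encode(positions, readings):
    """Pool a sequence of (position, reading) pairs into one representation vector."""
    tokens = np.concatenate([positions, readings], axis=1)  # (T, POS_DIM + READ_DIM)
    return np.tanh(tokens @ W_enc).mean(axis=0)             # (REP_DIM,)

def decode(representation, query_positions):
    """Predict the sensor reading at each query position from the representation."""
    rep = np.broadcast_to(representation, (len(query_positions), REP_DIM))
    return np.concatenate([rep, query_positions], axis=1) @ W_dec  # (Q, READ_DIM)

def prediction_loss(positions, readings, n_context):
    """Self-supervised loss: encode the first n_context touches, predict the rest."""
    z = encode(positions[:n_context], readings[:n_context])
    predicted = decode(z, positions[n_context:])
    return float(np.mean((predicted - readings[n_context:]) ** 2))

# Synthetic stand-in for one palpation sequence of 20 touches.
positions = rng.uniform(-1.0, 1.0, size=(20, POS_DIM))
readings = rng.normal(size=(20, READ_DIM))
loss = prediction_loss(positions, readings, n_context=15)
```

In a real setup the weights would be trained by minimising this loss over many sequences; the point of the sketch is only that no labels are needed, since the held-out sensor readings themselves serve as the prediction targets.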

We investigate the representation learned in this process, and demonstrate its use in imaging and change detection.
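One simple way a learned representation could support change detection is to compare the representations of two palpation sessions of the same object and flag a large distance. The abstract does not say how the authors do this, so the sketch below is an assumption: the cosine distance, the `threshold` value, and the function names are hypothetical.

```python
import math

def cosine_distance(a, b):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)

def has_changed(rep_before, rep_after, threshold=0.1):
    """Flag a change when two session representations differ by more than threshold.

    The threshold is a hypothetical value; in practice it would be calibrated
    on repeated palpations of unchanged objects.
    """
    return cosine_distance(rep_before, rep_after) > threshold
```

For example, `has_changed(rep_monday, rep_friday)` would compare two encoded sessions, where `rep_monday` and `rep_friday` are placeholder names for representation vectors produced by the trained encoder.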