Poster Session 5 · Friday, December 5, 2025 11:00 AM → 2:00 PM

#3404

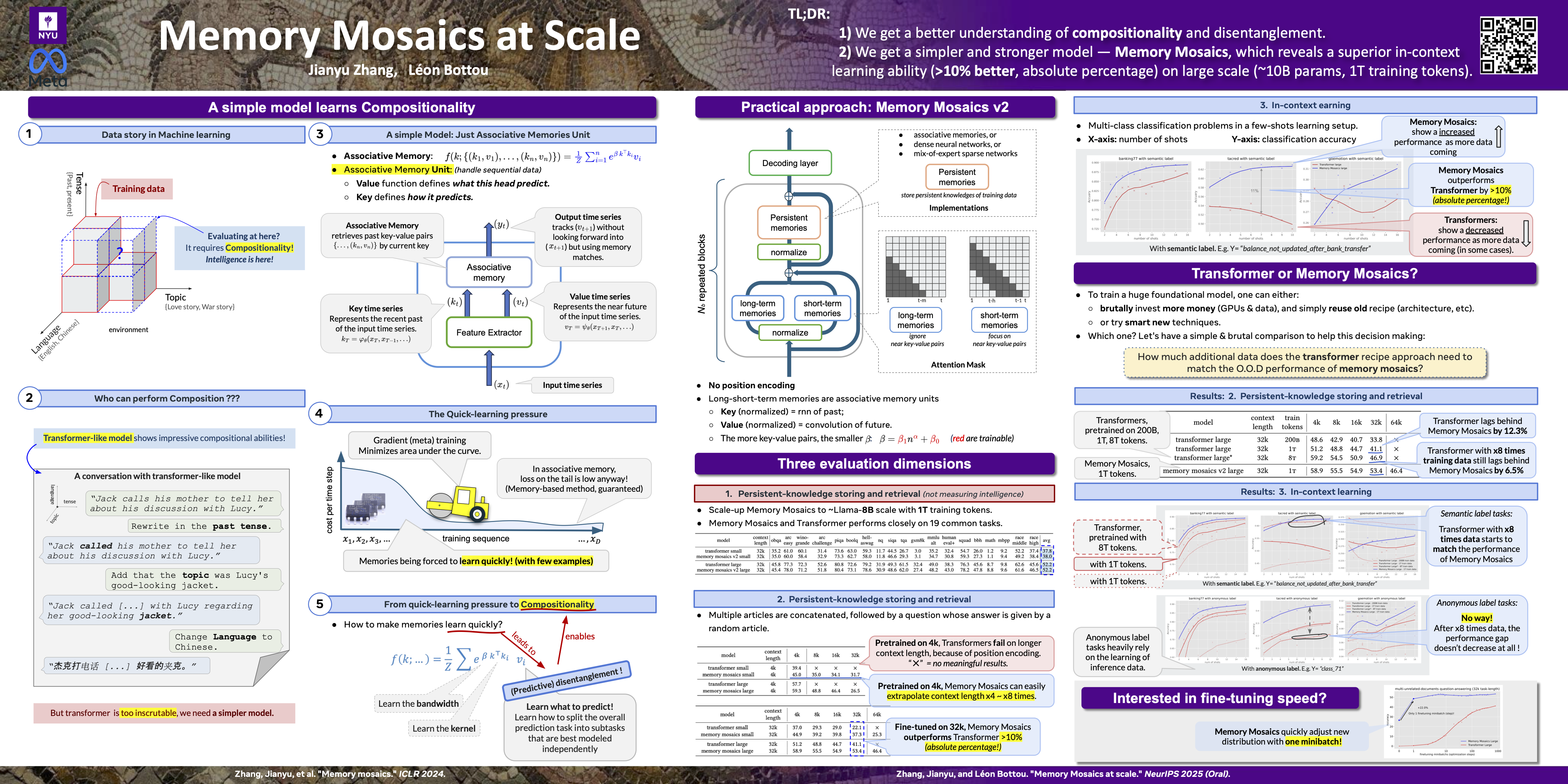

Memory Mosaics at scale

Abstract

Memory Mosaics, networks of associative memories, have demonstrated appealing compositional and in-context learning capabilities on medium-scale networks (GPT-2 scale) and synthetic small datasets.

This work shows that these favorable properties remain when we scale memory mosaics to large language model sizes (llama-8B scale) and real-world datasets. To this end, we scale memory mosaics to 10B size, we train them on one trillion tokens, we introduce a couple architectural modifications (memory mosaics v2), we assess their capabilities across three evaluation dimensions:

- training-knowledge storage,

- new-knowledge storage, and

- in-context learning.

Throughout the evaluation, memory mosaics v2 match transformers on the learning of training knowledge (first dimension) and significantly outperforms transformers on carrying out new tasks at inference time (second and third dimensions). These improvements cannot be easily replicated by simply increasing the training data for transformers. A memory mosaics v2 trained on one trillion tokens still perform better on these tasks than a transformer trained on eight trillion tokens.