Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#4701

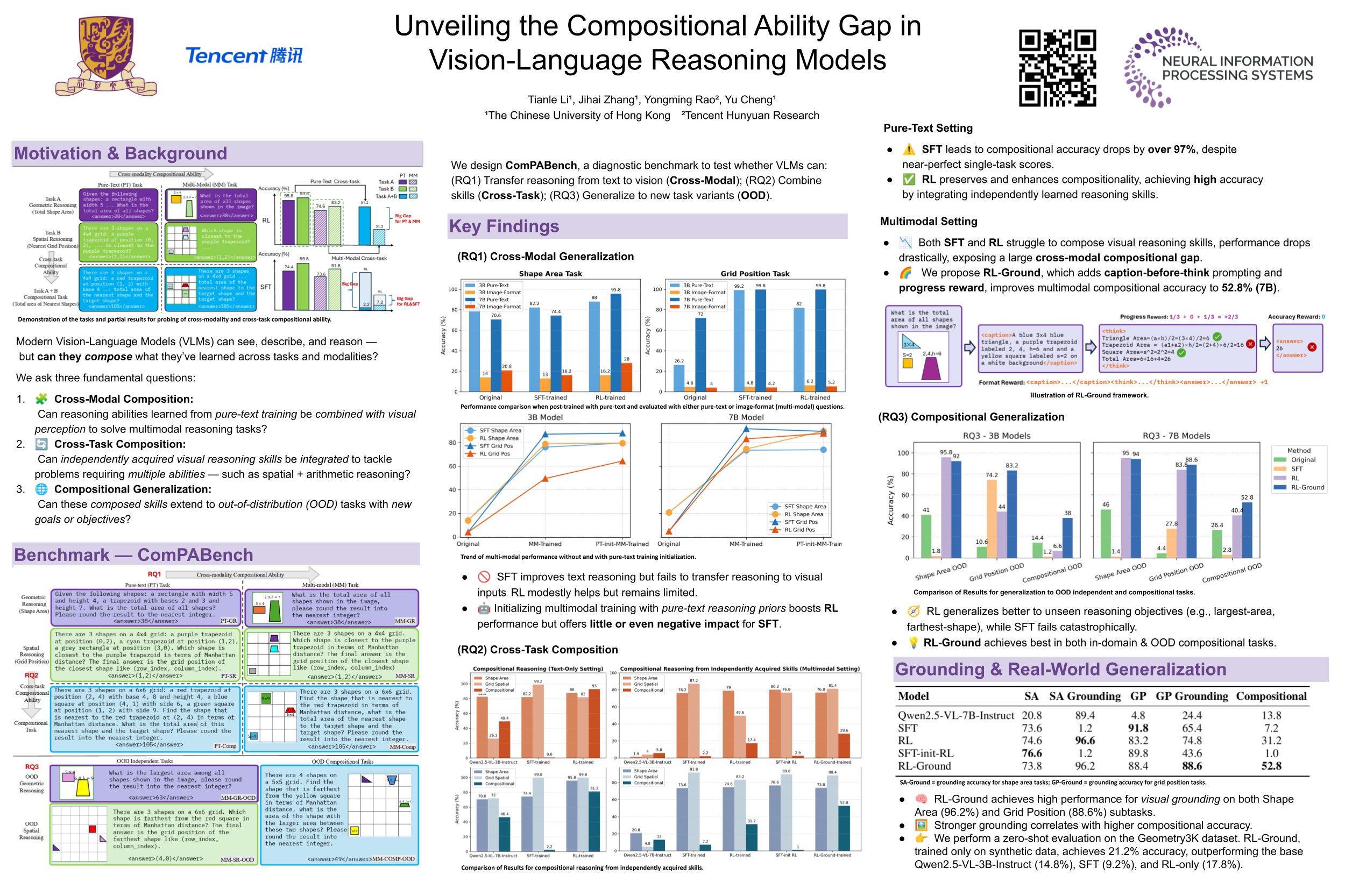

Unveiling the Compositional Ability Gap in Vision-Language Reasoning Model

Abstract

While large language models (LLMs) demonstrate strong reasoning capabilities utilizing reinforcement learning (RL) with verifiable reward, whether large vision-language models (VLMs) can directly inherit such capabilities through similar post-training strategies remains underexplored.

In this work, we conduct a systematic compositional probing study to evaluate whether current VLMs trained with RL or other post-training strategies can compose capabilities across modalities or tasks under out-of-distribution conditions. We design a suite of diagnostic tasks that train models on unimodal tasks or isolated reasoning skills, and evaluate them on multimodal, compositional variants requiring skill integration.

Through comparisons between supervised fine-tuning (SFT) and RL-trained models, we identify three key findings:

- RL-trained models consistently outperform SFT on compositional generalization, demonstrating better integration of learned skills;

- although VLMs achieve strong performance on individual tasks, they struggle to generalize compositionally under cross-modal and cross-task scenarios, revealing a significant gap in current training strategies;

- enforcing models to explicitly describe visual content before reasoning (e.g., caption-before-thinking), along with rewarding progressive vision-to-text grounding, yields notable gains.

It highlights two essential ingredients for improving compositionality in VLMs: visual-to-text alignment and accurate visual grounding. Our findings shed light on the current limitations of RL-based reasoning VLM training and provide actionable insights toward building models that reason compositionally across modalities and tasks.