Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#5005

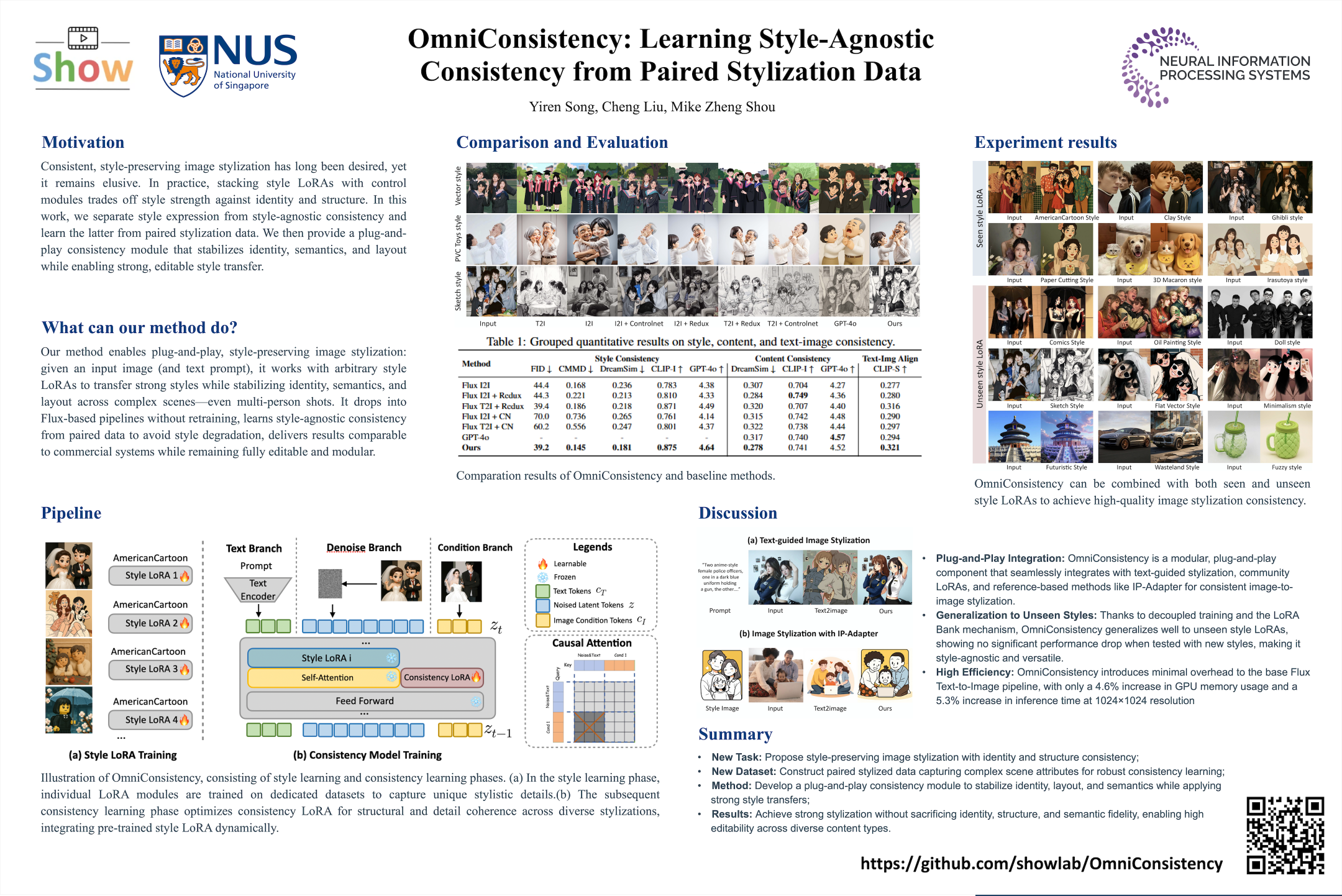

OmniConsistency: Learning Style-Agnostic Consistency from Paired Stylization Data

Abstract

Diffusion models have advanced image stylization significantly, yet two core challenges persist: (1) keeping stylization consistent in complex scenes, particularly in preserving identity, composition, and fine details, and (2) preventing style degradation in image-to-image pipelines that use style LoRAs. GPT-4o's exceptional stylization consistency highlights the performance gap between open-source methods and proprietary models.

To bridge this gap, we propose OmniConsistency, a universal consistency plugin leveraging large-scale Diffusion Transformers (DiTs).

OmniConsistency contributes:

- an in-context consistency learning framework trained on aligned image pairs for robust generalization;

- a two-stage progressive learning strategy decoupling style learning from consistency preservation to mitigate style degradation; and

- a fully plug-and-play design compatible with arbitrary style LoRAs under the Flux framework.
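As a rough illustration of what "plug-and-play" means for LoRA-style modules in general, the sketch below treats each adapter as an additive low-rank update `scale * B @ A` on a frozen base weight, so adapters can be attached or swapped without modifying the base model. This is the standard LoRA formulation, not OmniConsistency's actual architecture: modelling the consistency plugin as a second additive delta on the same weight is an assumption made only for this example, and the names `apply_adapters`, `style_lora`, and `consistency` are hypothetical.

```python
# Toy sketch: composing independent low-rank adapters on a frozen weight.
# Pure Python, tiny matrices; not the paper's implementation.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def lora_delta(B, A, scale=1.0):
    """Standard LoRA update: scale * (B @ A)."""
    return [[scale * v for v in row] for row in matmul(B, A)]

def apply_adapters(W, adapters):
    """Return W plus the sum of all adapter deltas; W itself is left untouched."""
    out = [row[:] for row in W]
    for B, A, scale in adapters:
        delta = lora_delta(B, A, scale)
        for i, row in enumerate(delta):
            for j, v in enumerate(row):
                out[i][j] += v
    return out

# Frozen 2x2 base weight.
W = [[1.0, 0.0], [0.0, 1.0]]

# Rank-1 adapters as (B, A, scale). Values are arbitrary.
style_lora = ([[1.0], [0.0]], [[0.0, 2.0]], 0.5)   # hypothetical style adapter
consistency = ([[0.0], [1.0]], [[3.0, 0.0]], 1.0)  # hypothetical consistency adapter (assumption)

merged = apply_adapters(W, [style_lora, consistency])
print(merged)
```

Because each adapter contributes an independent delta, replacing `style_lora` with a different style adapter leaves both `W` and `consistency` unchanged in this toy setting.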

Extensive experiments show that OmniConsistency significantly enhances visual coherence and aesthetic quality, achieving performance comparable to that of the commercial state-of-the-art model GPT-4o.