Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#3512

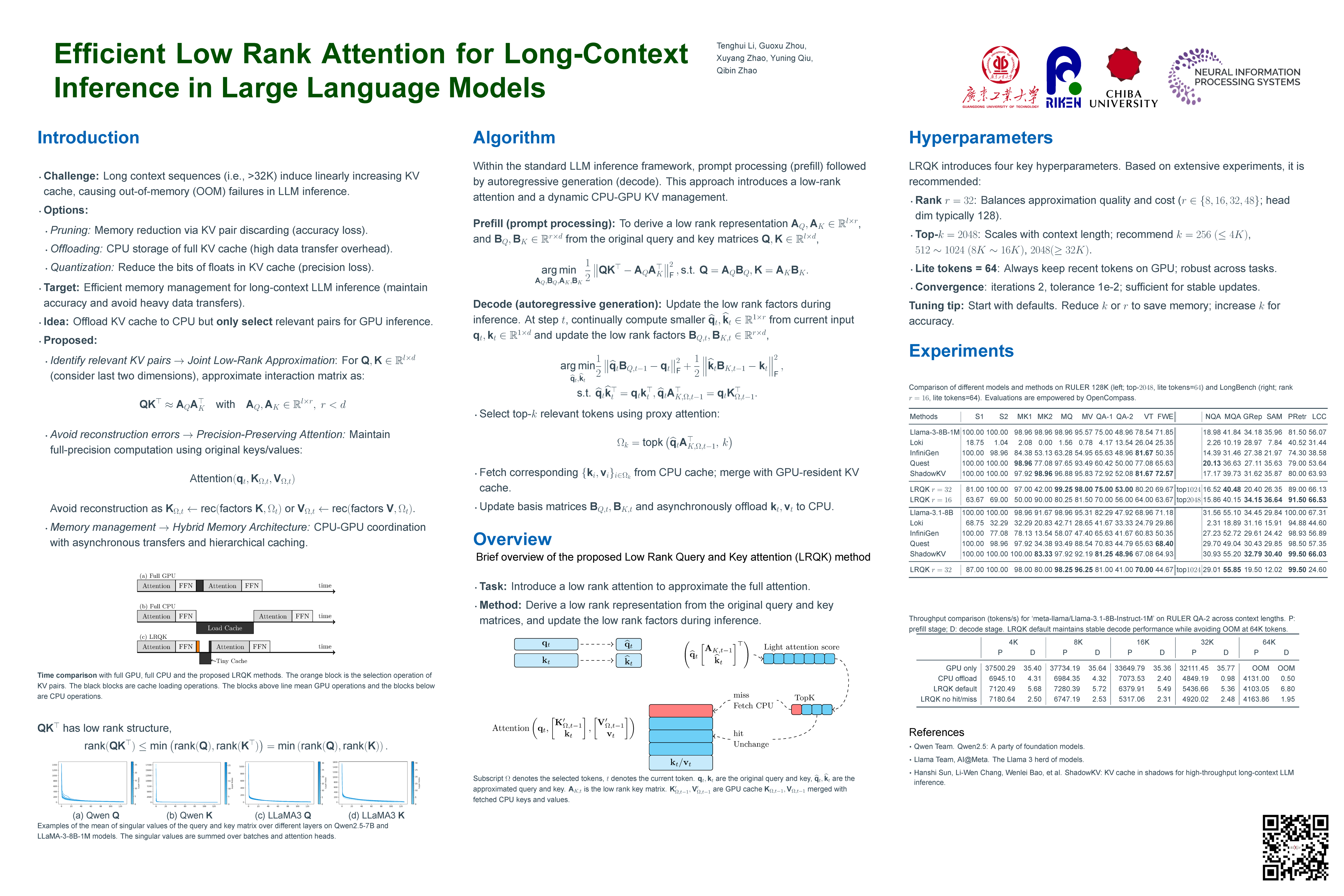

Efficient Low Rank Attention for Long-Context Inference in Large Language Models

Abstract

As the length of input text grows, the key-value (KV) cache in LLMs imposes prohibitive GPU memory costs and limits long-context inference on resource-constrained devices. Existing approaches, such as KV quantization and pruning, reduce memory usage but suffer from numerical precision loss or suboptimal retention of key-value pairs.

We introduce Low Rank Query and Key attention (LRQK), a two-stage framework that jointly decomposes the full-precision query and key matrices into compact rank-(r) factors during the prefill stage, and then uses these low-dimensional projections to compute proxy attention scores in time at each decode step. By selecting only the top-(k) tokens and a small fixed set of recent tokens, LRQK employs a mixed GPU-CPU cache with a hit-and-miss mechanism that transfers only missing full-precision KV pairs, thereby preserving exact attention outputs while reducing CPU-GPU data movement.

Extensive experiments on the RULER and LongBench benchmarks with LLaMA-3-8B and Qwen2.5-7B demonstrate that LRQK matches or surpasses leading sparse-attention methods in long context settings, while delivering significant memory savings with minimal loss in accuracy. Our code is available at https://github.com/tenghuilee/LRQK.