Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#314

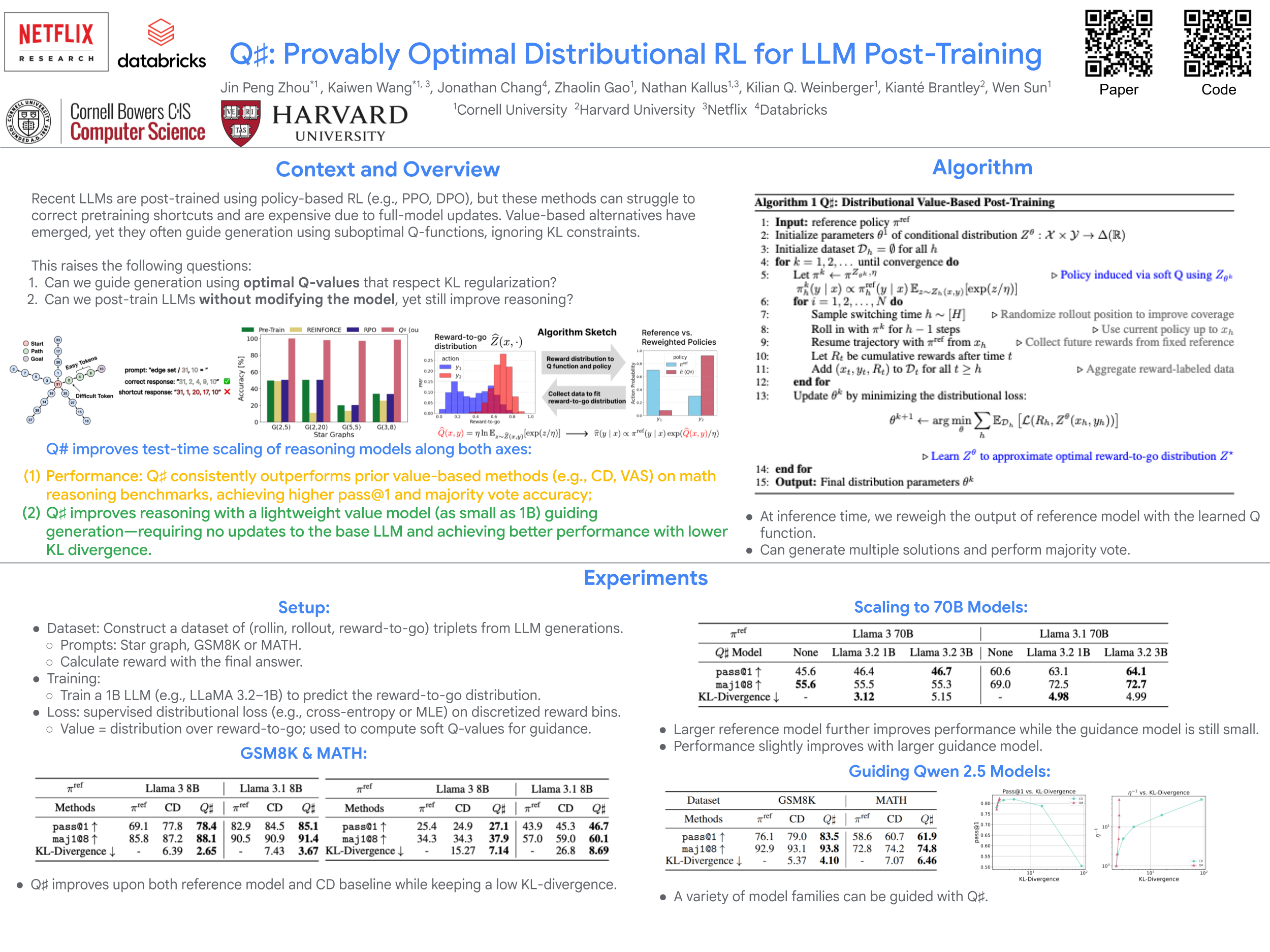

Q♯: Provably Optimal Distributional RL for LLM Post-Training

Abstract

Reinforcement learning (RL) post-training is crucial for LLM alignment and reasoning, but existing policy-based methods, such as PPO and DPO, can fall short of fixing shortcuts inherited from pre-training. In this work, we introduce Q♯, a value-based algorithm for KL-regularized RL that guides the reference policy using the optimal regularized Q function. We propose to learn the optimal Q function using distributional RL on an aggregated online dataset. Unlike prior value-based baselines that guide the model using unregularized Q-values, our method is theoretically principled and provably learns the optimal policy for the KL-regularized RL problem.
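As a rough illustration of what "guiding the reference policy" with a regularized Q function can look like, the sketch below reweights reference next-token probabilities by `exp(Q / eta)` and renormalizes. The abstract does not state this formula; it is the standard closed-form solution for KL-regularized objectives and is assumed here. The function name `guide_reference_policy`, the parameter `eta` (KL regularization strength), and the toy inputs are hypothetical and are not taken from the authors' code.

```python
import math


def guide_reference_policy(ref_probs, q_values, eta):
    """Return probabilities proportional to ref_probs[i] * exp(q_values[i] / eta).

    Assumptions (not from the abstract): ref_probs is a distribution over
    candidate next tokens with at least one positive entry, q_values holds an
    estimate of the regularized Q function for each candidate, and eta > 0.
    """
    # Work in log space so that large Q / eta values do not overflow.
    logits = [
        (math.log(p) if p > 0 else float("-inf")) + q / eta
        for p, q in zip(ref_probs, q_values)
    ]
    peak = max(logits)
    weights = [math.exp(logit - peak) for logit in logits]
    total = sum(weights)
    return [w / total for w in weights]


# Toy usage: two candidate tokens, equally likely under the reference policy.
guided = guide_reference_policy([0.5, 0.5], [1.0, 0.0], eta=1.0)
```

In this sketch, a larger `eta` keeps the guided distribution closer to the reference policy, while a smaller `eta` concentrates mass on candidates with higher Q estimates.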

Empirically, Q♯ outperforms prior baselines on math reasoning benchmarks while maintaining a smaller KL divergence to the reference policy.

Theoretically, we establish a reduction from KL-regularized RL to no-regret online learning, providing the first bounds for deterministic MDPs under only realizability. Thanks to distributional RL, our bounds are also variance-dependent and converge faster when the reference policy has small variance.

In sum, our results highlight Q♯ as an effective approach for post-training LLMs, offering both improved performance and theoretical guarantees. The code can be found at https://github.com/jinpz/qsharp.