Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#2913

Gradient-Guided Epsilon Constraint Method for Online Continual Learning

Abstract

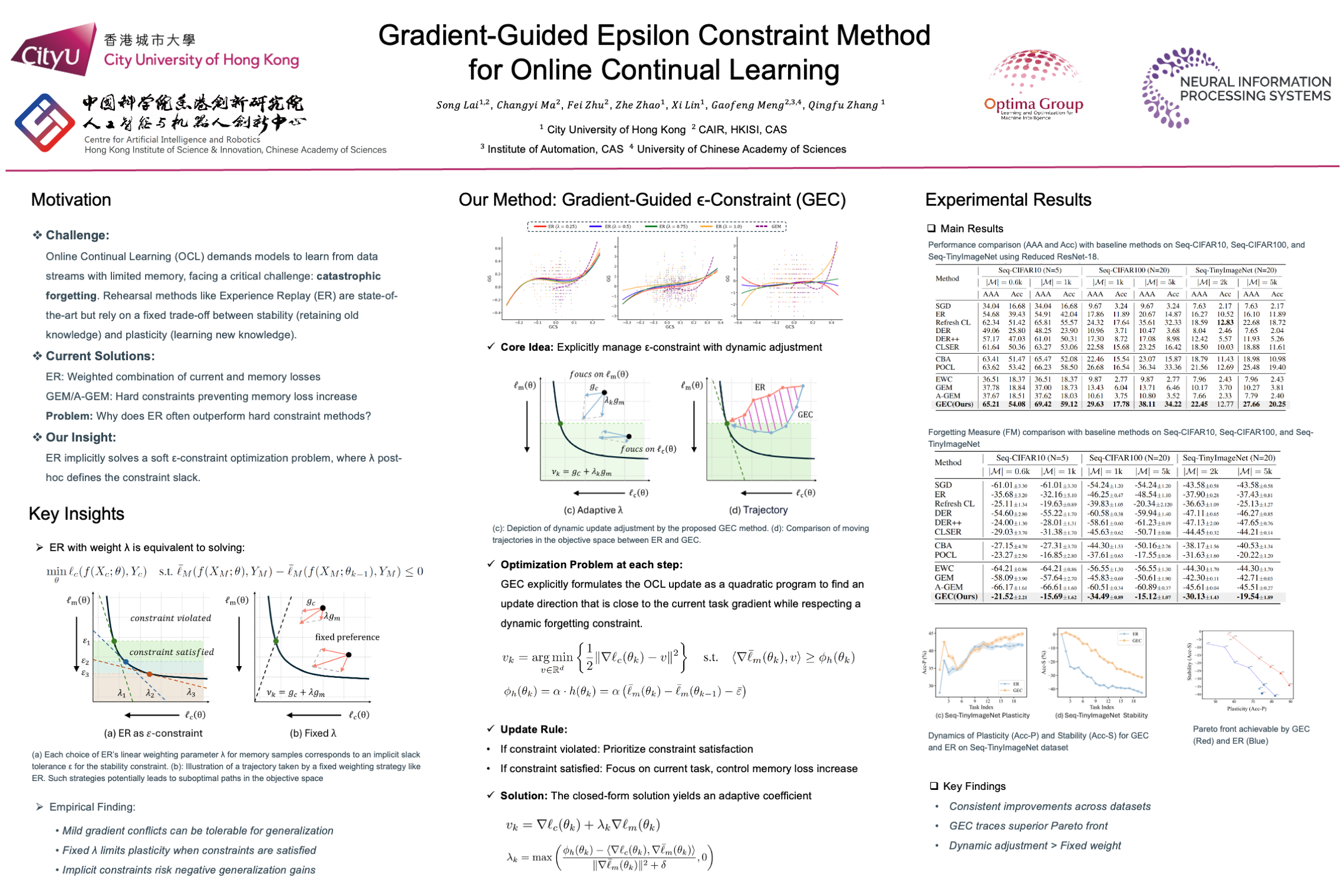

Online Continual Learning (OCL) requires models to learn sequentially from data streams with limited memory. Rehearsal-based methods, particularly Experience Replay (ER), are commonly used in OCL scenarios. This paper revisits ER through the lens of ε-constraint optimization, revealing that ER implicitly imposes a soft constraint on past-task performance, with its weighting parameter defining the slack variable post hoc.
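One way to read this claim is sketched below. The abstract does not give the formulation, so the notation is an assumption: $\mathcal{L}_{\text{cur}}$ is the loss on the incoming stream batch, $\mathcal{L}_{\text{mem}}$ is the loss on the memory buffer, and $\lambda$ is ER's replay weight.

```latex
\begin{align*}
\text{ER (weighted sum):} \quad
  & \min_{\theta} \; \mathcal{L}_{\text{cur}}(\theta) + \lambda \, \mathcal{L}_{\text{mem}}(\theta),
    \qquad \lambda > 0 \\
\text{$\epsilon$-constraint:} \quad
  & \min_{\theta} \; \mathcal{L}_{\text{cur}}(\theta)
    \quad \text{s.t.} \quad \mathcal{L}_{\text{mem}}(\theta) \le \epsilon
\end{align*}
```

Under this reading, any minimizer $\theta^{*}$ of the weighted objective also solves the constrained problem with $\epsilon = \mathcal{L}_{\text{mem}}(\theta^{*})$. If some feasible $\theta$ had a lower $\mathcal{L}_{\text{cur}}$, its weighted sum would be lower too, contradicting optimality. The slack is therefore fixed only after the fact by the choice of $\lambda$, rather than being set explicitly.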

While effective, ER's implicit and fixed slack strategy has limitations: it can inadvertently lead to updates that harm generalization, and its fixed trade-off between plasticity and stability may not optimally balance learning from the current stream against memory retention, potentially overfitting to the memory buffer.

To address these shortcomings, we propose the Gradient-Guided Epsilon Constraint (GEC) method for online continual learning. GEC explicitly formulates the OCL update as an ε-constraint optimization problem: it minimizes the loss on the current task data and treats the stability objective as a constraint. A gradient-guided rule then dynamically adjusts the update direction based on whether the performance on memory samples violates a predefined slack tolerance ε: if forgetting exceeds this tolerance, GEC prioritizes constraint satisfaction; otherwise, it focuses on the current task while controlling the rate of increase in memory loss.

Empirical evaluations on standard OCL benchmarks demonstrate GEC's ability to achieve a superior stability-plasticity trade-off, leading to improved overall performance.