Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#3907

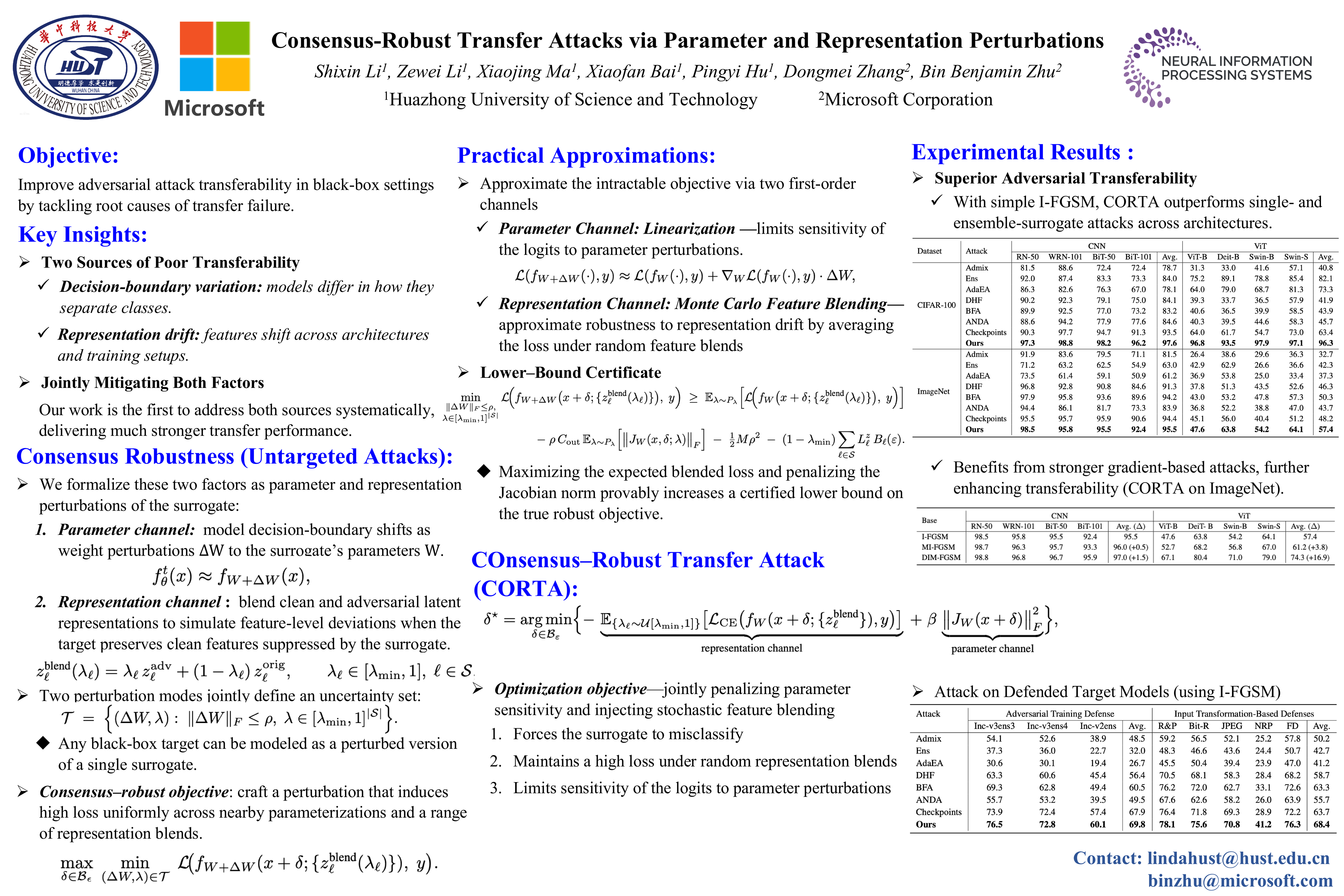

Consensus-Robust Transfer Attacks via Parameter and Representation Perturbations

Abstract

Adversarial examples crafted on one model often exhibit poor transferability to others, hindering their effectiveness in black-box settings. This limitation arises from two key factors:

- decision-boundary variation across models, and

- representation drift in feature space.

We address these challenges through a new perspective that frames transferability for untargeted attacks as a consensus-robust optimization problem: adversarial perturbations should remain effective across a neighborhood of plausible target models. To model this uncertainty, we introduce two complementary perturbation channels: a parameter channel, capturing boundary shifts via weight perturbations, and a representation channel, addressing feature drift via stochastic blending of clean and adversarial activations.
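One plausible way to write such a consensus-robust objective is sketched below. The abstract does not state the formula, so every symbol is an assumption made for illustration: the surrogate is split as $f_\theta = g_\theta \circ \phi_\theta$ (feature extractor $\phi$, classifier head $g$), $\delta$ is the input perturbation with budget $\epsilon$, $\Delta\theta$ is a weight perturbation with radius $\rho$, $\lambda$ is a random blending coefficient, and $\mathcal{L}$ is the classification loss the untargeted attack tries to increase.

$$
\max_{\|\delta\|_\infty \le \epsilon}\;
\min_{\|\Delta\theta\|_2 \le \rho}\;
\mathbb{E}_{\lambda}
\Big[
\mathcal{L}\big(
g_{\theta+\Delta\theta}\big(\lambda\,\phi_{\theta+\Delta\theta}(x) + (1-\lambda)\,\phi_{\theta+\Delta\theta}(x+\delta)\big),\; y
\big)
\Big]
$$

Read this way, the inner minimum plays the role of the parameter channel (the attack must still succeed on the least favorable nearby model), and the expectation over $\lambda$ plays the role of the representation channel (the attack must still succeed when adversarial features are partly pulled back toward clean ones). The norms, the distribution of $\lambda$, and the choice of worst case versus expectation are guesses, not details taken from the paper.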

We then propose CORTA (COnsensus-Robust Transfer Attack), a lightweight attack instantiated from this robust formulation using two first-order strategies:

- sensitivity regularization based on the squared Frobenius norm of logits’ Jacobian with respect to weights, and

- Monte Carlo sampling for blended feature representations.
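The two strategies above can be illustrated on a toy surrogate. The following is a minimal sketch, not the CORTA implementation: it assumes a two-layer network `z = W2 · relu(W1 · x)`, a blending coefficient drawn from Uniform(0, 1), cross-entropy as the attack loss, and a hypothetical trade-off weight `beta`. All function names are invented for this example. For this small model the squared Frobenius norm of the logits' Jacobian with respect to the weights has a closed form, so no autodiff library is needed.

```python
import math
import random


def matvec(W, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def relu(v):
    return [max(0.0, a) for a in v]


def features(W1, x):
    """Hidden representation h = relu(W1 x)."""
    return relu(matvec(W1, x))


def cross_entropy(z, y):
    """Numerically stable softmax cross-entropy for logits z and label y."""
    m = max(z)
    return m + math.log(sum(math.exp(a - m) for a in z)) - z[y]


def jacobian_sq_frobenius(W1, W2, x):
    """Squared Frobenius norm of d(logits)/d(W1, W2) for z = W2 relu(W1 x)."""
    pre = matvec(W1, x)
    h = relu(pre)
    x_sq = sum(a * a for a in x)
    h_sq = sum(a * a for a in h)
    # dz_i / dW2[i][k] = h_k, so each of the C logits contributes ||h||^2.
    term_w2 = len(W2) * h_sq
    # dz_i / dW1[j][k] = W2[i][j] * 1[pre_j > 0] * x_k.
    col_sq = [sum(row[j] ** 2 for row in W2) for j in range(len(W1))]
    term_w1 = x_sq * sum(c for c, a in zip(col_sq, pre) if a > 0)
    return term_w1 + term_w2


def blended_loss(W1, W2, x_clean, x_adv, y, num_samples=8, rng=None):
    """Monte Carlo estimate of the loss on blended clean/adversarial features."""
    rng = rng or random.Random(0)
    h_clean = features(W1, x_clean)
    h_adv = features(W1, x_adv)
    total = 0.0
    for _ in range(num_samples):
        lam = rng.random()  # assumed blending distribution: Uniform(0, 1)
        h_mix = [lam * c + (1.0 - lam) * a for c, a in zip(h_clean, h_adv)]
        total += cross_entropy(matvec(W2, h_mix), y)
    return total / num_samples


def surrogate_objective(W1, W2, x_clean, x_adv, y, beta=0.1, num_samples=8):
    """Quantity an attacker would maximise over x_adv in this sketch:
    blended-feature loss minus a weight-sensitivity penalty."""
    loss = blended_loss(W1, W2, x_clean, x_adv, y, num_samples)
    return loss - beta * jacobian_sq_frobenius(W1, W2, x_adv)
```

The penalty is motivated by a first-order expansion: a small weight change `Δθ` moves the logits by roughly `J Δθ`, whose norm is at most `‖J‖_F ‖Δθ‖₂`, so a small Jacobian norm at the adversarial input means the attack's logits change little across nearby models. In a real attack, `surrogate_objective` would be differentiated with respect to `x_adv` inside an iterative update. That step is omitted here.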

Our theoretical analysis provides a certified lower bound linking these approximations to the robust objective. Extensive experiments on CIFAR-100 and ImageNet show that CORTA significantly outperforms state-of-the-art transfer-based methods, including ensemble approaches, across CNN and Vision Transformer targets. Notably, CORTA achieves a 19.1 percentage-point gain in transfer success rate over the best prior method while using only a single surrogate model.