Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#4706 Spotlight

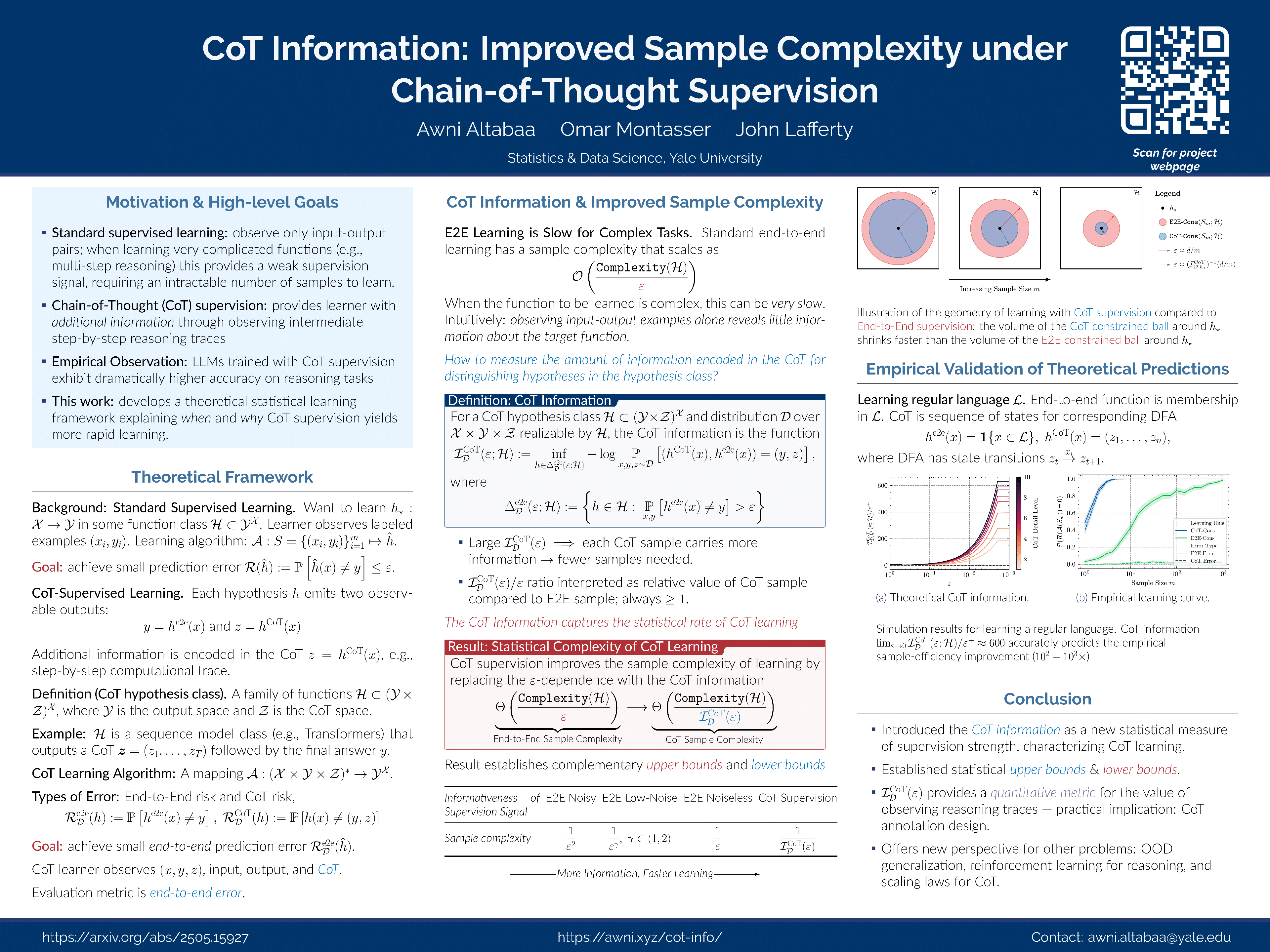

CoT Information: Improved Sample Complexity under Chain-of-Thought Supervision

Abstract

Learning complex functions that involve multi-step reasoning poses a significant challenge for standard supervised learning from input-output examples. Chain-of-thought (CoT) supervision, which augments training data with intermediate reasoning steps to provide a richer learning signal, has driven recent advances in large language model reasoning.

This paper develops a statistical theory of learning under CoT supervision. Central to the theory is the CoT information, which measures the additional discriminative power offered by the chain-of-thought for distinguishing hypotheses with different end-to-end behaviors.

The main theoretical results demonstrate how CoT supervision can yield significantly faster learning rates compared to standard end-to-end supervision, with both upper bounds and information-theoretic lower bounds characterized by the CoT information.