Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#1210

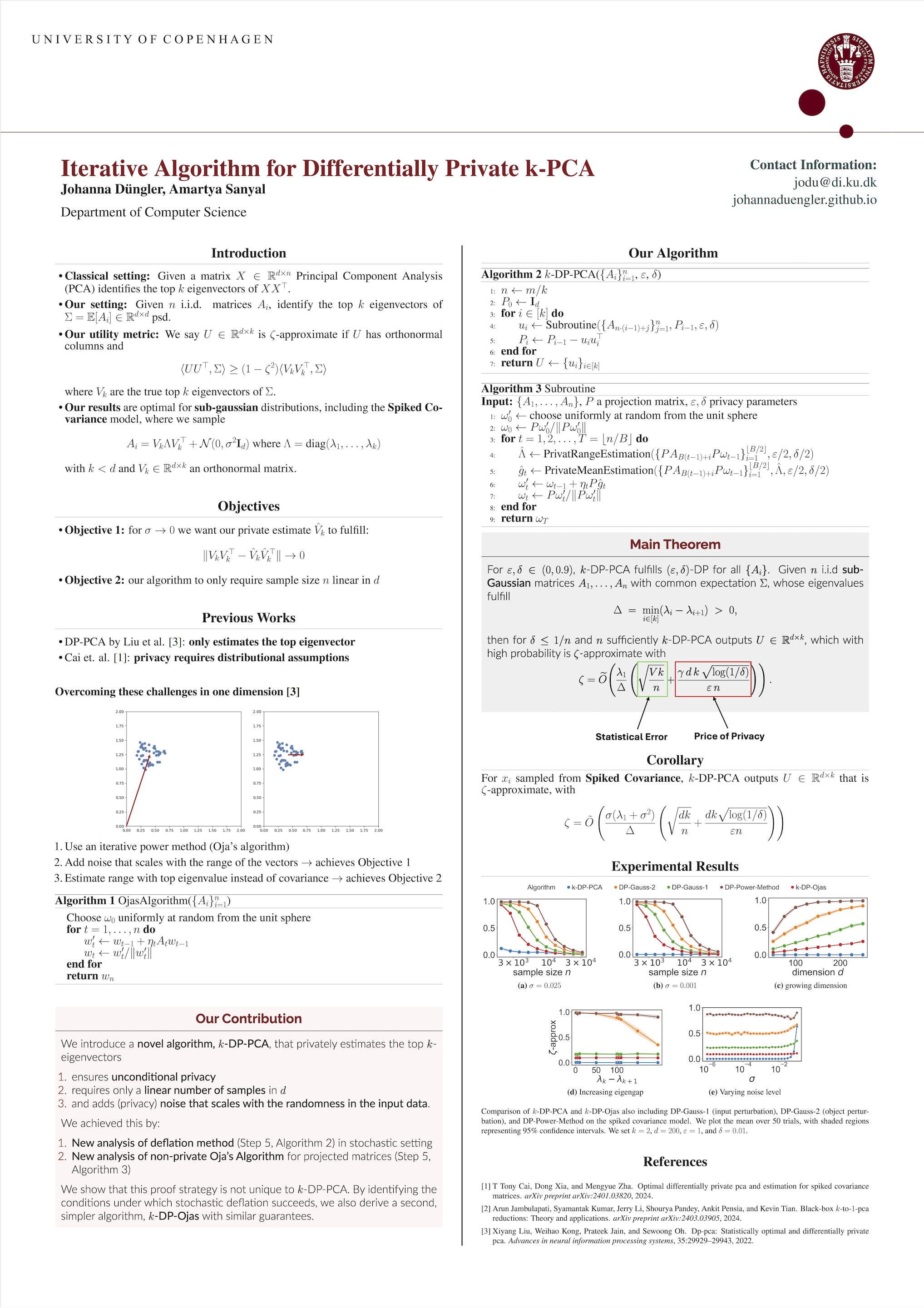

An Iterative Algorithm for Differentially Private -PCA with Adaptive Noise

Abstract

Given i.i.d.random matrices that share common expectation , the objective of Differentially Private Stochastic PCA is to identify a subspace of dimension that captures the largest variance directions of , while preserving differential privacy (DP) of each individual .

Existing methods either

- require the sample size to scale super-linearly with dimension , even under Gaussian assumptions on the , or

- introduce excessive noise for DP even when the intrinsic randomness within is small. \citet{liu2022dp} addressed these issues for sub-Gaussian data but only for estimating the top eigenvector () using their algorithm DP-PCA.

We propose the first algorithm capable of estimating the top eigenvectors for arbitrary , whilst overcoming both limitations above.

For , our algorithm matches the utility guarantees of DP-PCA, achieving near-optimal statistical error even when . We further provide a lower bound for general , matching our upper bound up to a factor of , and experimentally demonstrate the advantages of our algorithm over comparable baselines.