Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#2002

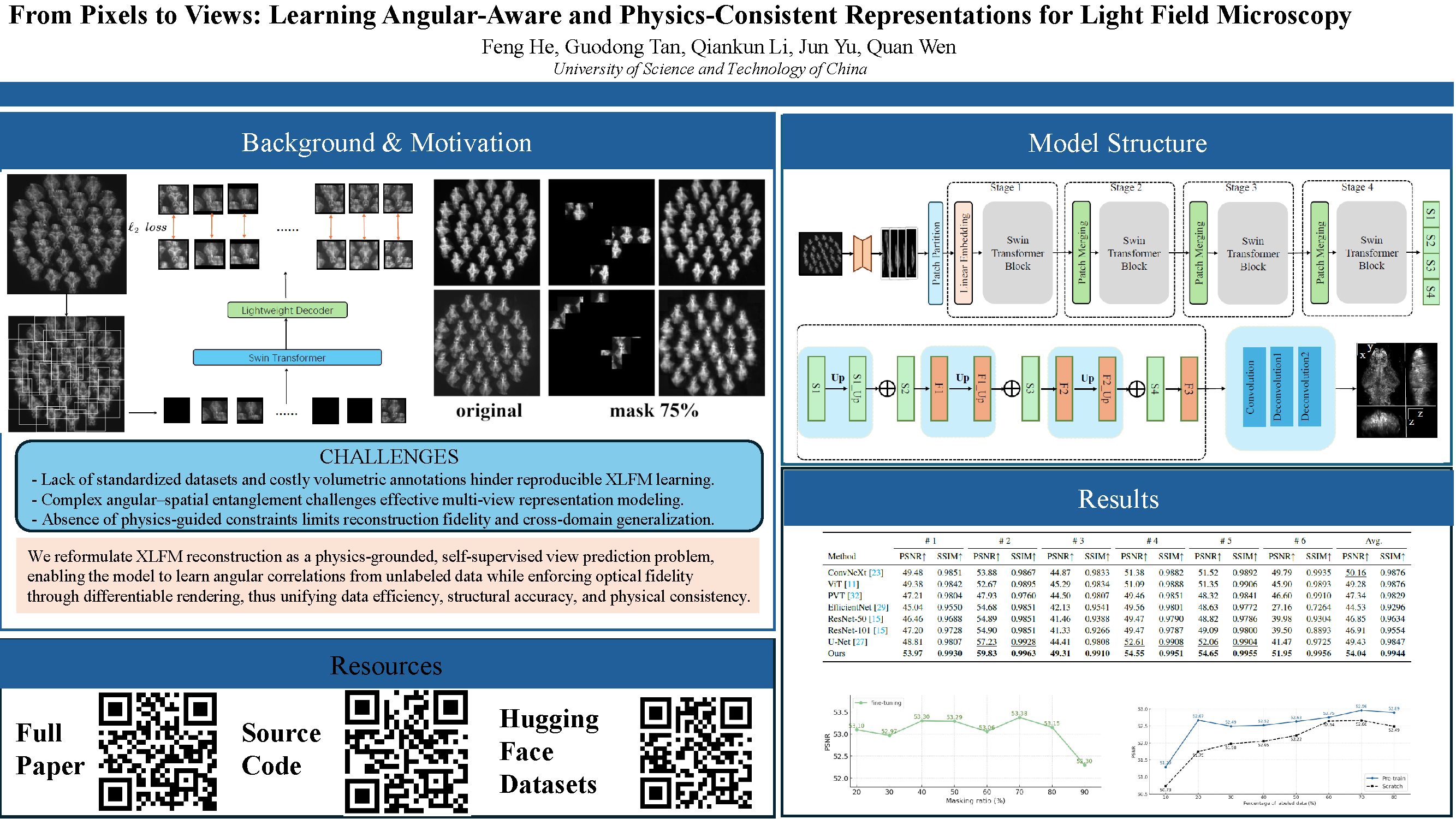

From Pixels to Views: Learning Angular-Aware and Physics-Consistent Representations for Light Field Microscopy

Abstract

Light field microscopy (LFM) has emerged as a tool in neuroscience for large-scale neural imaging in vivo, with XLFM (eXtended Light Field Microscopy) notable for its single-exposure volumetric imaging, broad field of view, and high temporal resolution. However, learning-based 3D reconstruction in XLFM remains underdeveloped due to two core challenges: the absence of standardized datasets and the lack of methods that can efficiently model its angular–spatial structure while remaining physically grounded.

We address these challenges by introducing three key contributions.

- First, we construct the XLFM-Zebrafish benchmark, a large-scale dataset and evaluation suite for XLFM reconstruction.
- Second, we propose Masked View Modeling for Light Fields (MVM-LF), a self-supervised task that learns angular priors by predicting occluded views, improving data efficiency.
- Third, we formulate the Optical Rendering Consistency Loss (ORC Loss), a differentiable rendering constraint that enforces alignment between predicted volumes and their PSF-based forward projections.
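The two training signals described above can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function names (`mask_views`, `masked_view_loss`, `forward_project`, `orc_loss`), the zero-fill masking, the mean-squared-error losses, and the toy forward model (a per-depth circular convolution with the PSF, summed over depth) are all assumptions made for illustration. NumPy is not differentiable, so an actual training setup would express the same operations in an autodiff framework.

```python
import numpy as np

def mask_views(views, mask_ratio, rng):
    """Hide a random subset of views (assumed strategy: zero-fill).

    views: array of shape (n_views, H, W).
    Returns the masked copy and a boolean mask marking hidden views.
    """
    n_views = views.shape[0]
    n_masked = max(1, int(round(mask_ratio * n_views)))
    hidden = rng.choice(n_views, size=n_masked, replace=False)
    mask = np.zeros(n_views, dtype=bool)
    mask[hidden] = True
    masked = views.copy()
    masked[mask] = 0.0
    return masked, mask

def masked_view_loss(pred_views, true_views, mask):
    """Mean squared error over the hidden views only (assumed loss form)."""
    return float(np.mean((pred_views[mask] - true_views[mask]) ** 2))

def forward_project(volume, psf):
    """Toy forward model: convolve each depth slice with its PSF slice
    (circular convolution via FFT) and sum over depth.

    volume, psf: arrays of shape (D, H, W). Returns an (H, W) image.
    """
    vol_f = np.fft.fft2(volume, axes=(-2, -1))
    psf_f = np.fft.fft2(psf, axes=(-2, -1))
    slices = np.real(np.fft.ifft2(vol_f * psf_f, axes=(-2, -1)))
    return slices.sum(axis=0)

def orc_loss(pred_volume, psf, measured_image):
    """Penalize mismatch between the rendered prediction and the measurement."""
    rendered = forward_project(pred_volume, psf)
    return float(np.mean((rendered - measured_image) ** 2))

# Usage with synthetic data (shapes are arbitrary, not taken from the benchmark).
rng = np.random.default_rng(0)
views = rng.random((8, 16, 16))
masked, mask = mask_views(views, mask_ratio=0.5, rng=rng)

volume = rng.random((4, 16, 16))
psf = rng.random((4, 16, 16))
image = forward_project(volume, psf)
print(orc_loss(volume, psf, image))  # a volume consistent with the measurement
```

In this sketch, a network would be trained to reconstruct the hidden views from `masked`, while `orc_loss` ties a predicted volume back to the measured image through the PSF.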

On the XLFM-Zebrafish benchmark, our method improves PSNR by 7.7% over state-of-the-art baselines. Code and datasets are publicly available at: https://github.com/hefengcs/XLFM-Former.
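For readers unfamiliar with the metric, PSNR is conventionally defined as 10·log10(MAX² / MSE), where MAX is the peak signal value. The sketch below computes it with NumPy. The `data_range` parameter name and the default of 1.0 are assumptions; the abstract does not state the normalization used by the benchmark.

```python
import numpy as np

def psnr(reference, estimate, data_range=1.0):
    """Peak signal-to-noise ratio in dB between two arrays of equal shape."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0.0:
        return float("inf")  # identical inputs
    return float(10.0 * np.log10(data_range ** 2 / mse))
```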