Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#3012 Spotlight

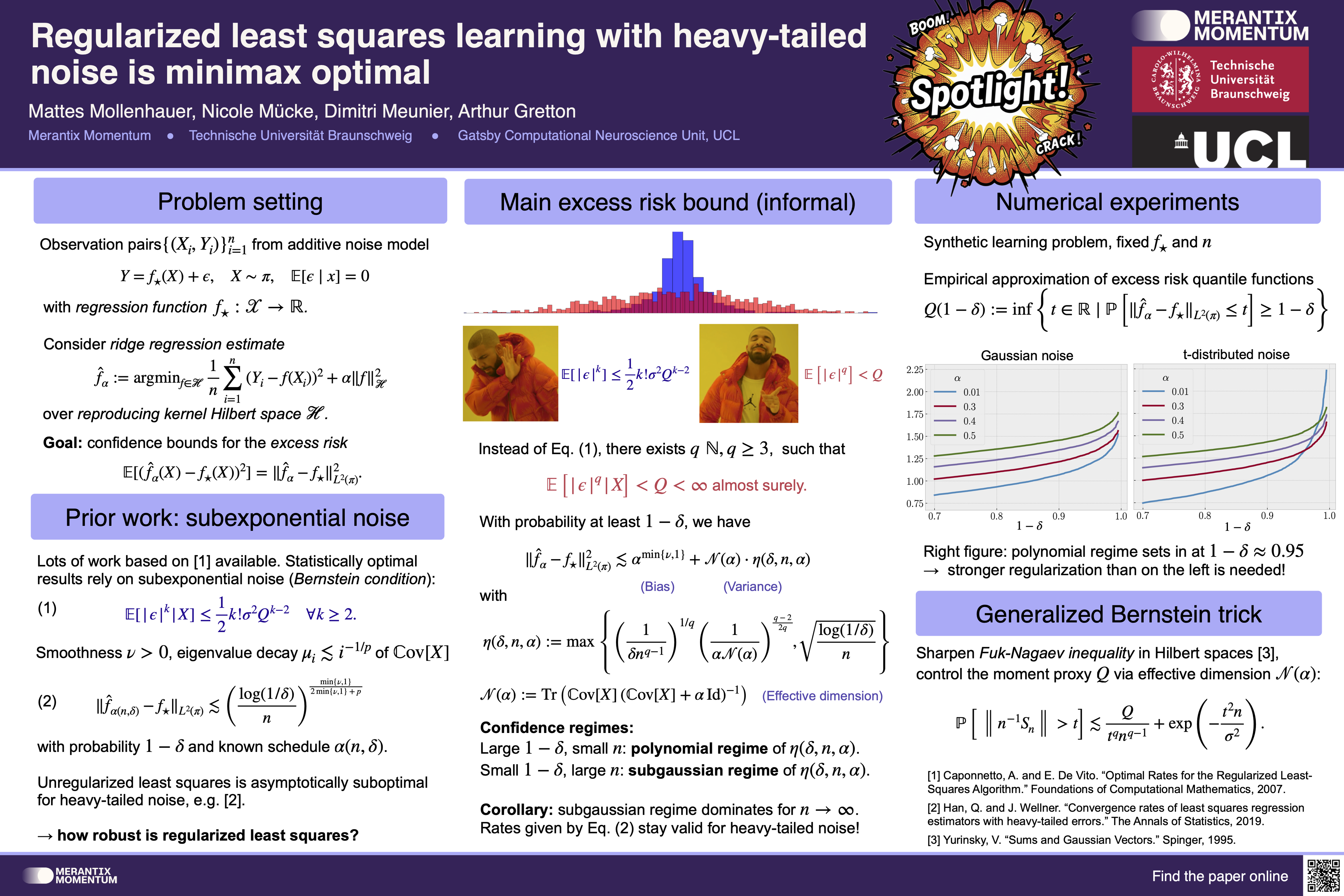

Regularized least squares learning with heavy-tailed noise is minimax optimal

Abstract

This paper examines the performance of ridge regression in reproducing kernel Hilbert spaces in the presence of noise that exhibits a finite number of higher moments.

We establish excess risk bounds consisting of subgaussian and polynomial terms based on the well-known integral operator framework. The dominant subgaussian component makes it possible to achieve convergence rates that have previously only been derived under subexponential noise, a prevalent assumption in related work from the last two decades.

These rates are optimal under standard eigenvalue decay conditions, demonstrating the asymptotic robustness of regularized least squares against heavy-tailed noise. Our derivations are based on a Fuk–Nagaev inequality for Hilbert-space-valued random variables.
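To make the setting concrete, the following is a minimal sketch of kernel ridge regression fitted to data with heavy-tailed noise. It is not taken from the paper. The Gaussian kernel, its bandwidth, the target function `f_star`, the sample size, the noise scale, and the regularization parameter `lam` are all illustrative assumptions. The noise is Student-t with 3 degrees of freedom, which has finite variance but no finite moments of order 3 or higher.

```python
import numpy as np

def gaussian_kernel(a, b, bandwidth=0.2):
    # Pairwise Gaussian kernel between 1-D inputs a (n,) and b (m,).
    diff = a[:, None] - b[None, :]
    return np.exp(-diff**2 / (2.0 * bandwidth**2))

def fit_kernel_ridge(x, y, lam, bandwidth=0.2):
    # Minimizes (1/n) * sum_i (f(x_i) - y_i)^2 + lam * ||f||_H^2.
    # By the representer theorem, f = sum_i alpha_i k(x_i, .),
    # with alpha = (K + n * lam * I)^{-1} y.
    n = x.shape[0]
    K = gaussian_kernel(x, x, bandwidth)
    return np.linalg.solve(K + n * lam * np.eye(n), y)

def predict(x_train, alpha, x_new, bandwidth=0.2):
    # Evaluates the fitted function at new inputs.
    return gaussian_kernel(x_new, x_train, bandwidth) @ alpha

# Hypothetical regression problem (assumption, for illustration only).
rng = np.random.default_rng(0)
n = 200
x = rng.uniform(0.0, 1.0, n)

def f_star(t):
    return np.sin(2.0 * np.pi * t)

# Heavy-tailed noise: Student-t with 3 degrees of freedom.
noise = rng.standard_t(df=3, size=n)
y = f_star(x) + 0.3 * noise

alpha = fit_kernel_ridge(x, y, lam=1e-3)

# Approximate the excess risk by the mean squared error against
# the noise-free target on a dense grid.
x_test = np.linspace(0.0, 1.0, 500)
mse = np.mean((predict(x, alpha, x_test) - f_star(x_test)) ** 2)
print(f"approximate excess risk: {mse:.4f}")
```

Swapping `rng.standard_t` for Gaussian noise of matching variance gives a rough empirical comparison between the two noise regimes, though a single run says nothing about convergence rates.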