Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#4106

Exploiting Vocabulary Frequency Imbalance in Language Model Pre-training

Abstract

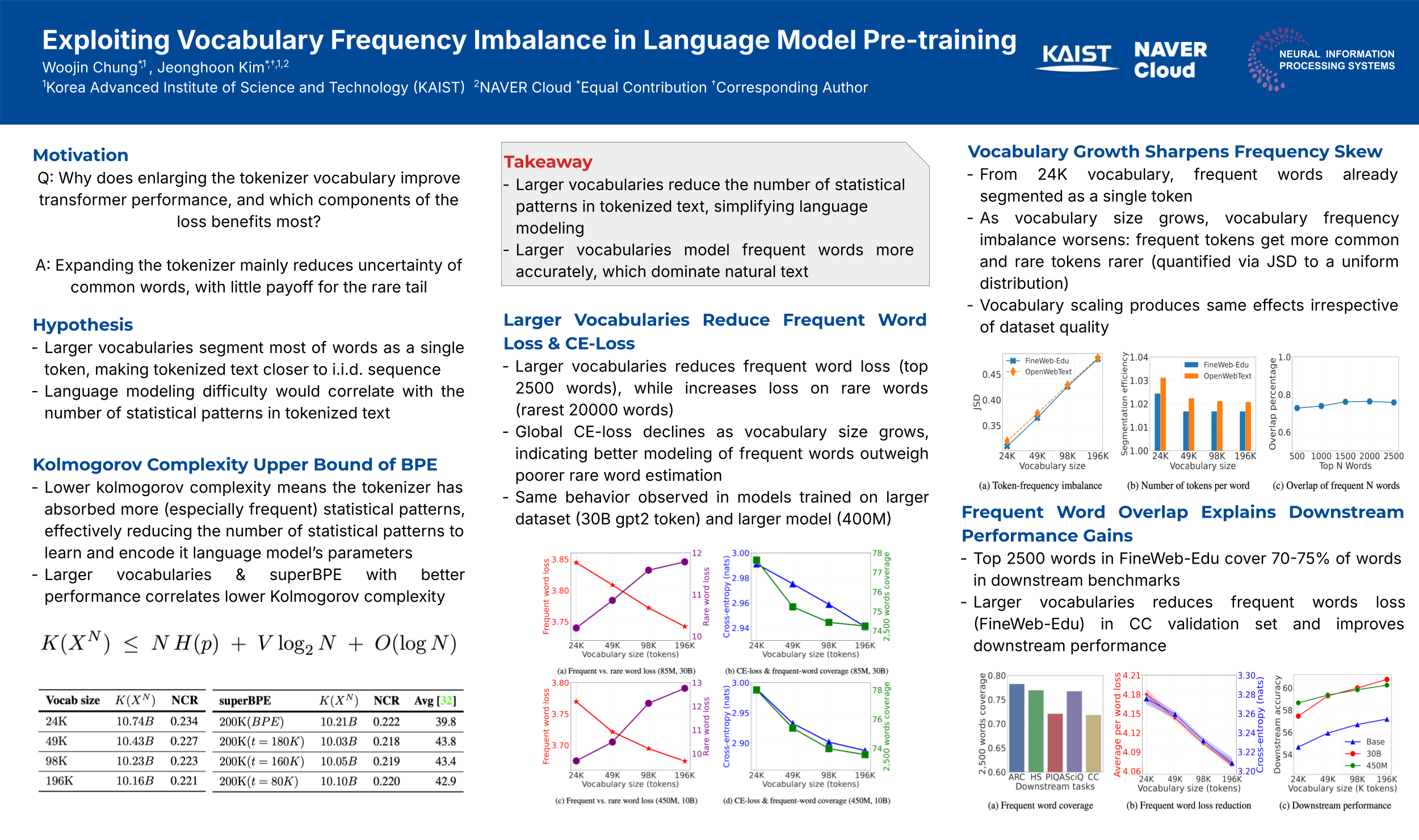

Large language models are trained with tokenizers that map text to a fixed vocabulary, yet the resulting token distribution is highly imbalanced: a few words dominate the stream while most occur rarely. Recent practice favours ever-larger vocabularies, but it is unclear whether the benefit comes from better word segmentation or from amplifying this frequency skew.

To this end, we perform a controlled study that scales the vocabulary of a constant-size Transformer from 24K to 196K symbols while holding data, compute and optimisation unchanged. Above 24K every common word is already a single token, so further growth only increases imbalance. Word-level loss decomposition shows that larger vocabularies reduce cross-entropy almost exclusively by lowering uncertainty on the ~ most frequent words, even though loss on the rare tail rises.

Same frequent words cover roughly of tokens in downstream benchmarks, this training advantage transfers intact.

We further show that enlarging model parameters with a fixed tokenizer yields the same frequent-word benefit, revealing a shared mechanism behind vocabulary and model scaling. Our results recast "bigger vocabularies help" as "sharper frequency imbalance helps," offering a simple, principled knob for tokenizer-model co-design and clarifying the loss dynamics that govern language-model scaling in pre-training.