Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#3306

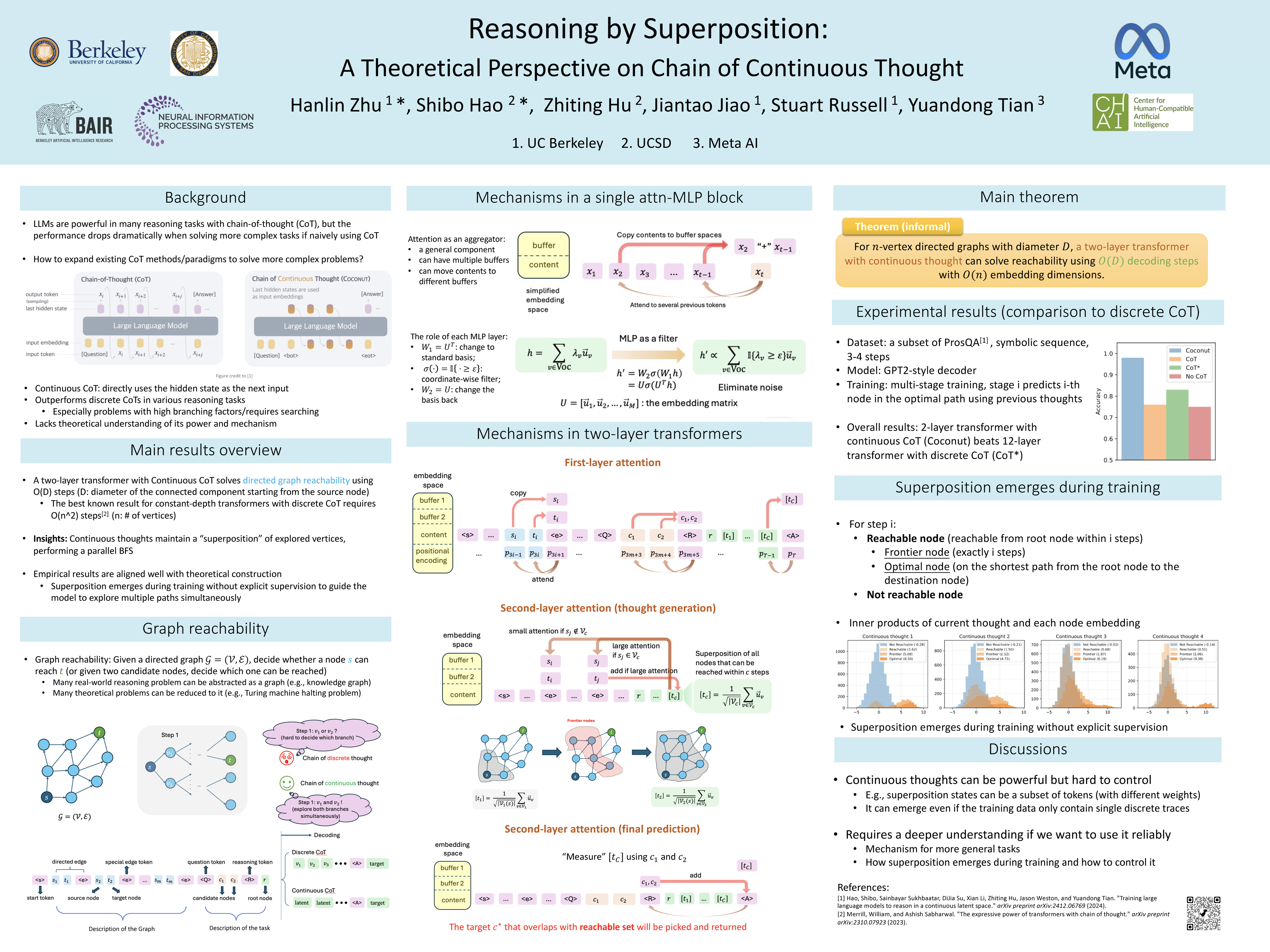

Reasoning by Superposition: A Theoretical Perspective on Chain of Continuous Thought

Abstract

Large Language Models (LLMs) have demonstrated remarkable performance in many applications, including challenging reasoning problems via chain-of-thought (CoT) techniques that generate "thinking tokens" before answering a question. Existing theoretical work shows that CoT with discrete tokens boosts the capability of LLMs. Recent work on continuous CoT, however, lacks a theoretical explanation of why it outperforms its discrete counterpart on various reasoning tasks. One such task is directed graph reachability, a fundamental graph reasoning problem that includes many practical applications as special cases.
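Directed graph reachability asks whether a target vertex can be reached from a source vertex by following directed edges. The sketch below is plain Python, not the paper's two-layer transformer construction. It expands every vertex of the current frontier at once, which loosely mirrors the idea of a single state holding many candidate vertices "in superposition". The function name `reachable_within` and the adjacency-dict input format are illustrative assumptions. When the target is reachable, the number of expansion steps equals its shortest-path distance from the source, which is at most the graph's diameter.

```python
from typing import Dict, List, Set, Tuple


def reachable_within(graph: Dict[int, List[int]], source: int, target: int) -> Tuple[bool, int]:
    """Hypothetical helper: frontier-expansion (BFS-style) reachability check.

    Every vertex in the current frontier is expanded by one edge in the same
    step, so all candidate paths are explored in parallel rather than one at
    a time. Returns (is_reachable, number_of_expansion_steps_performed).
    """
    reached: Set[int] = {source}
    frontier: Set[int] = {source}
    steps = 0
    while frontier and target not in reached:
        # Expand all frontier vertices simultaneously by one directed edge.
        nxt = {v for u in frontier for v in graph.get(u, []) if v not in reached}
        reached |= nxt
        frontier = nxt
        steps += 1
    return target in reached, steps


if __name__ == "__main__":
    # Two parallel paths 0->1->3->5 and 0->2->4->5; both are explored together.
    g = {0: [1, 2], 1: [3], 2: [4], 3: [5], 4: [5], 5: []}
    print(reachable_within(g, 0, 5))  # (True, 3)
    print(reachable_within(g, 5, 0))  # (False, 1): no edges leave vertex 5
```

Because whole frontiers are expanded at once, a reachable target is always found after exactly its shortest-path distance in steps. A procedure that commits to a single successor per step would instead need to backtrack whenever it chose a dead end.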

In this paper, we prove that a two-layer transformer with $D$ steps of continuous CoT can solve the directed graph reachability problem, where $D$ is the diameter of the graph. By contrast, the best known result for constant-depth transformers with discrete CoT requires $O(n^2)$ decoding steps, where $n$ is the number of vertices (