Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#3907

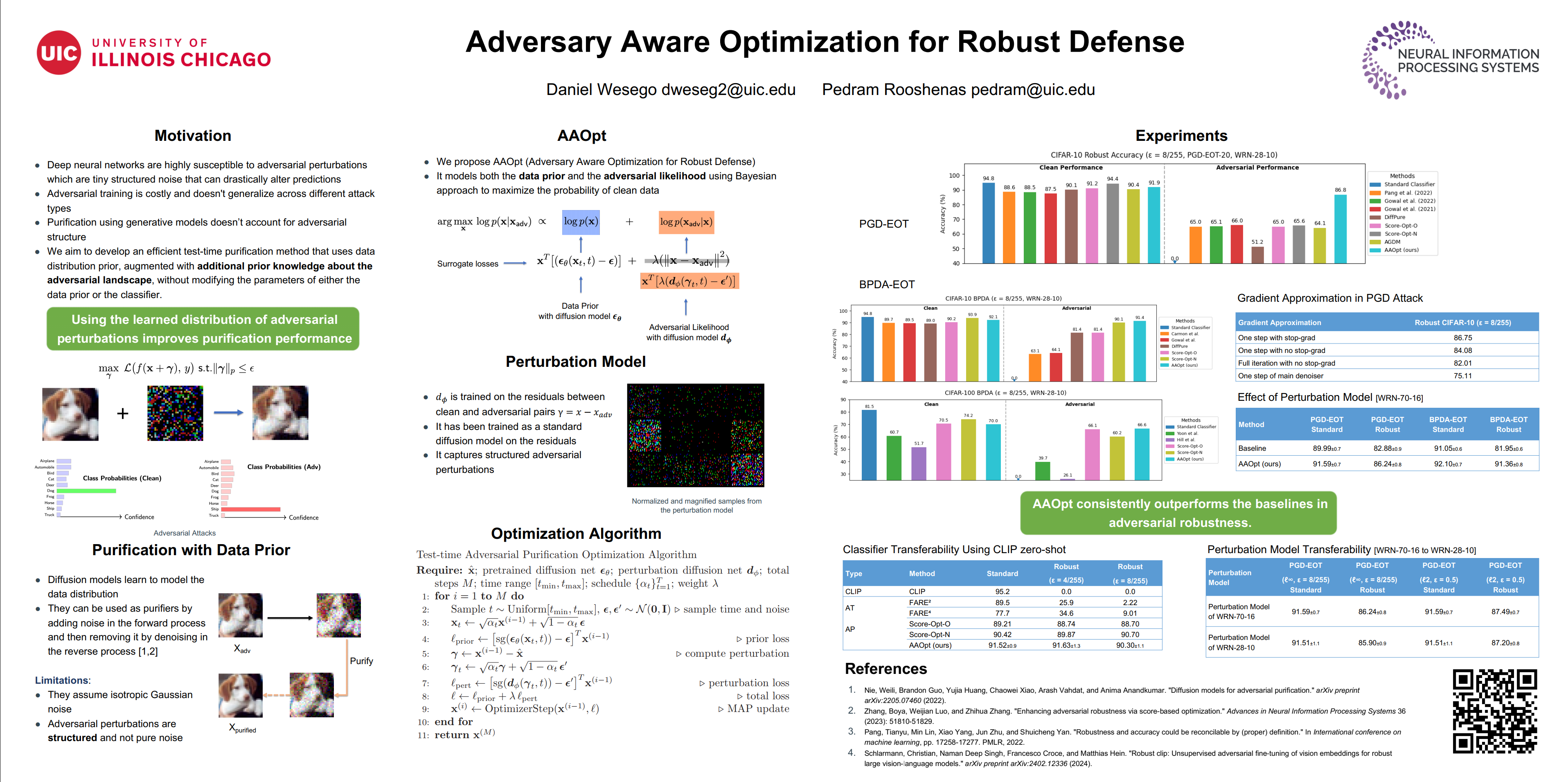

Adversary Aware Optimization for Robust Defense

Abstract

Deep neural networks remain highly susceptible to adversarial attacks, where small, subtle perturbations to input images may induce misclassification.

We propose a novel optimization-based purification framework that directly removes these perturbations by maximizing a Bayesian-inspired objective, which combines a pretrained diffusion prior with a likelihood term tailored to the adversarial perturbation space. Our method iteratively refines a given input through gradient-based updates on this combined score-based objective, which guides the purification process. Unlike existing optimization-based defenses that treat adversarial noise as generic corruption, our approach explicitly integrates the adversarial landscape into the objective.
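To make the structure of such an objective concrete, the following is a minimal toy sketch, not the authors' implementation. It assumes a Gaussian prior as a stand-in for the pretrained diffusion prior and a Gaussian likelihood as a stand-in for the adversarial likelihood term, whose actual form the abstract does not specify. All function names and the parameters `mu`, `sigma`, `tau`, `step`, and `n_steps` are hypothetical and chosen for illustration only.

```python
# Toy sketch of MAP-style purification by gradient ascent on
# log p(x) + log p(x_adv | x).
# ASSUMPTIONS (not from the paper): the prior is a Gaussian N(mu, sigma^2 I)
# standing in for a diffusion prior, and the likelihood is a Gaussian
# N(x_adv; x, tau^2 I) standing in for the adversarial likelihood term.


def prior_score(x, mu, sigma):
    """Gradient of log N(x; mu, sigma^2 I) with respect to x."""
    return [-(xi - mi) / sigma**2 for xi, mi in zip(x, mu)]


def likelihood_score(x, x_adv, tau):
    """Gradient of log N(x_adv; x, tau^2 I) with respect to x."""
    return [(ai - xi) / tau**2 for xi, ai in zip(x, x_adv)]


def purify(x_adv, mu, sigma=1.0, tau=0.5, step=0.05, n_steps=500):
    """Refine x_adv by ascending the sum of the prior and likelihood scores."""
    x = list(x_adv)  # start from the (possibly adversarial) input
    for _ in range(n_steps):
        g_prior = prior_score(x, mu, sigma)
        g_lik = likelihood_score(x, x_adv, tau)
        x = [xi + step * (gp + gl) for xi, gp, gl in zip(x, g_prior, g_lik)]
    return x


def closed_form_map(x_adv, mu, sigma=1.0, tau=0.5):
    """Exact maximizer for this Gaussian toy case, used as a sanity check."""
    w_prior = 1.0 / sigma**2
    w_lik = 1.0 / tau**2
    return [(w_prior * mi + w_lik * ai) / (w_prior + w_lik)
            for mi, ai in zip(mu, x_adv)]


if __name__ == "__main__":
    x_adv = [2.0, -1.0]
    mu = [0.0, 0.0]
    print(purify(x_adv, mu))
    print(closed_form_map(x_adv, mu))
```

In this toy setting the iterates converge to the closed-form maximizer, which lies between the input and the prior mean. In a real defense the prior score would presumably come from a pretrained score network and the likelihood term would encode the adversarial perturbation model, so no closed form would be available and the iterative update would be the only practical route.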

Experiments on CIFAR-10 and CIFAR-100 demonstrate strong robust accuracy against a range of common adversarial attacks.

Our work offers a principled test-time defense grounded in probabilistic inference using score-based generative models. Our code can be found at https://github.com/rooshenasgroup/aaopt.