Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#4904 Spotlight

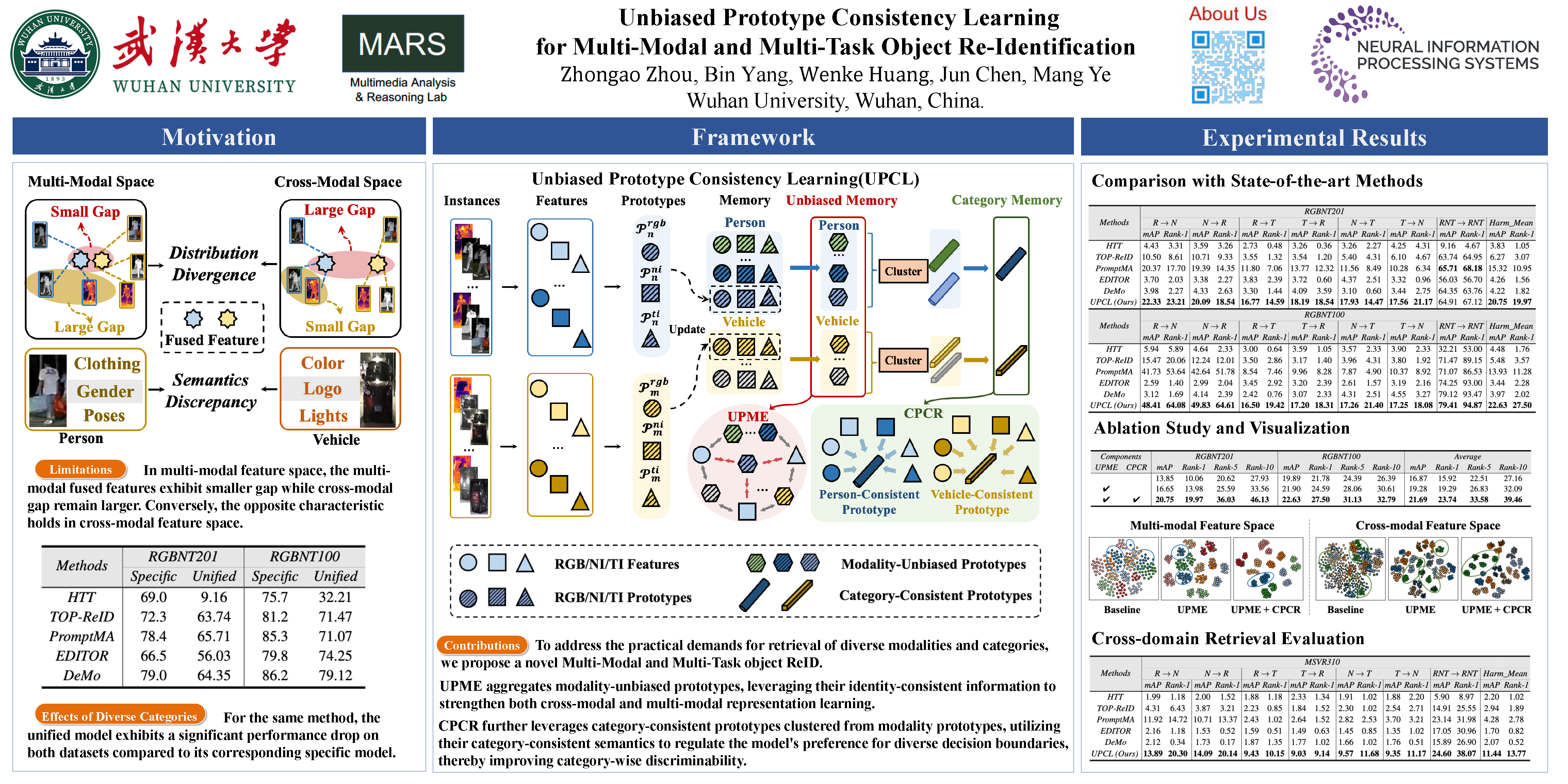

Unbiased Prototype Consistency Learning for Multi-Modal and Multi-Task Object Re-Identification

Abstract

In object re-identification (ReID), both cross-modal and multi-modal retrieval methods have achieved notable progress. However, existing approaches are designed for a specific modality and a specific category (person or vehicle) retrieval task, and they do not generalize to others. Training and deploying multiple task-specific models wastes both training and deployment resources. To address the practical need for unified retrieval, we introduce Multi-Modal and Multi-Task object ReID (M³T-ReID). The M³T-ReID task aims to use a single unified model to perform retrieval across different modalities and different categories simultaneously.

Specifically, to tackle the challenges of modality distribution divergence and category semantics discrepancy posed by M³T-ReID, we design a novel Unbiased Prototype Consistency Learning (UPCL) framework, which consists of two main modules: Unbiased Prototypes-guided Modality Enhancement (UPME) and Cluster Prototype Consistency Regularization (CPCR). UPME leverages modality-unbiased prototypes to simultaneously enhance cross-modal shared features and multi-modal fused features. CPCR, in turn, regularizes discriminative semantics learning with category-consistent information through prototype clustering.

With these two modules working together, our model can simultaneously learn robust cross-modal shared and multi-modal fused feature spaces while also exhibiting strong category-discriminative capabilities. Extensive experiments on the multi-modal datasets RGBNT201 and RGBNT100 demonstrate the exceptional performance of our UPCL framework on M³T-ReID.
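As a rough illustration of the prototype idea described above, the sketch below builds one prototype per identity by giving every modality equal weight, then measures how far each modality's features lie from that shared prototype. It is not the UPCL implementation: the function names (`unbiased_prototypes`, `consistency_loss`), the equal-weight averaging rule, and the squared-distance penalty are all assumptions made for illustration, and the abstract does not specify how UPME or CPCR are actually computed.

```python
import numpy as np


def unbiased_prototypes(features, labels):
    """Hypothetical helper: one prototype per identity, averaged over modalities.

    features: dict mapping modality name -> (N, D) array, rows aligned across modalities.
    labels:   (N,) array of identity ids.
    Returns a dict mapping identity id -> (D,) prototype vector.
    """
    # Stack to (M, N, D) and average over the modality axis so that
    # every modality contributes equally to each sample's fused feature.
    stacked = np.stack([features[m] for m in sorted(features)], axis=0)
    fused = stacked.mean(axis=0)  # (N, D)
    return {int(c): fused[labels == c].mean(axis=0) for c in np.unique(labels)}


def consistency_loss(features, labels, prototypes):
    """Hypothetical penalty: mean squared distance of each modality to its identity prototype."""
    # Look up the prototype that belongs to each sample's identity.
    target = np.stack([prototypes[int(c)] for c in labels])  # (N, D)
    per_modality = [
        np.mean(np.sum((feats - target) ** 2, axis=1)) for feats in features.values()
    ]
    # Average over modalities (an assumption, like the rest of this sketch).
    return float(np.mean(per_modality))
```

Called with a dictionary such as `{"rgb": ..., "nir": ..., "tir": ...}` holding aligned feature matrices, `unbiased_prototypes` returns the per-identity prototypes and `consistency_loss` returns a scalar that is zero only when every modality's features coincide with their identity prototype.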

The code is available at https://github.com/ZhouZhongao/UPCL.