Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#1206

Understanding and Improving Fast Adversarial Training against $l_0$ Bounded Perturbations

Abstract

This work studies fast adversarial training against sparse adversarial perturbations bounded by the $l_0$ norm.

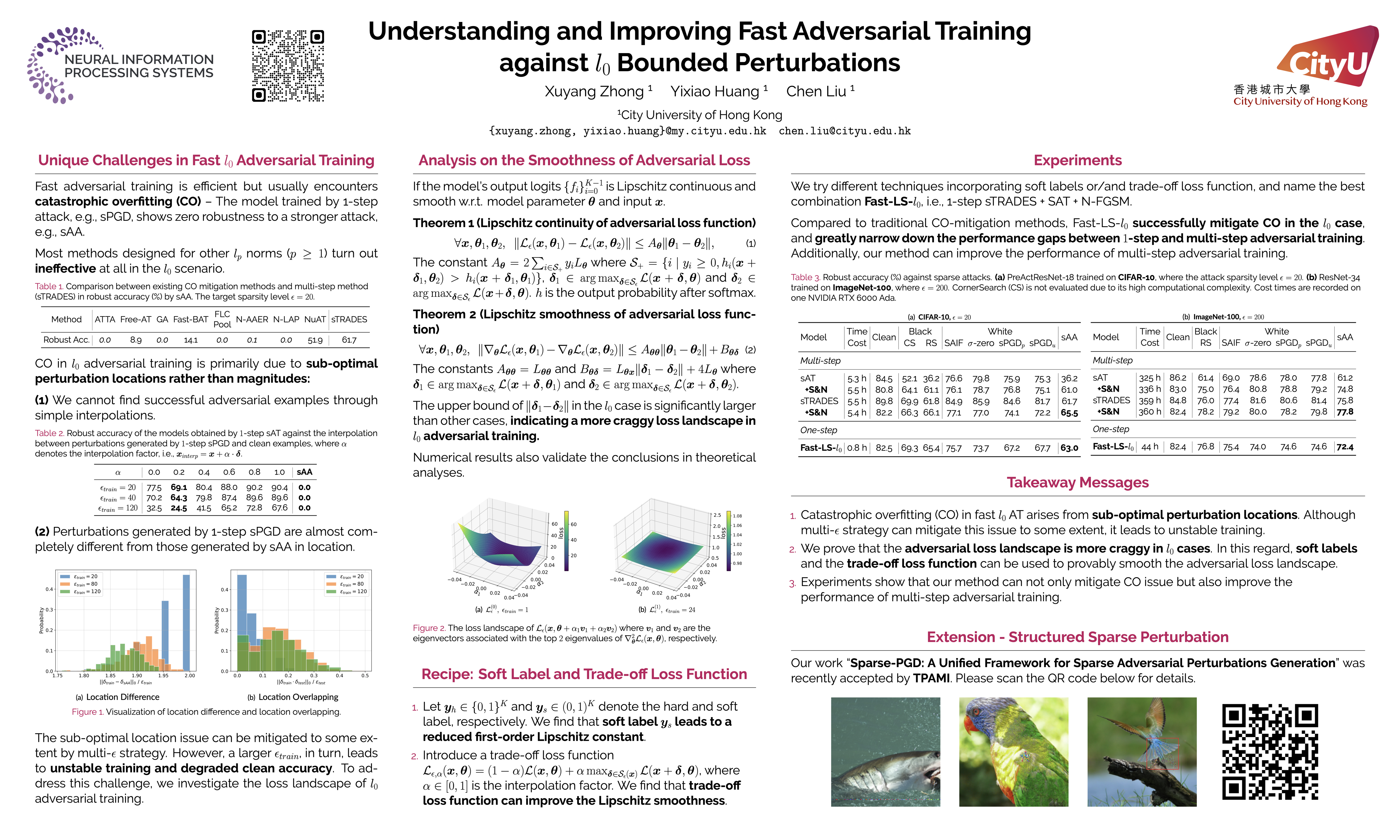

We first demonstrate the unique challenges of employing $1$-step attacks on $l_0$ bounded perturbations, especially catastrophic overfitting (CO), which cannot be properly addressed by existing fast adversarial training methods for other $l_p$ norms ($p \geq 1$). We highlight that CO in $l_0$ adversarial training arises from sub-optimal perturbation locations of the $1$-step attack. Although some strategies like multi-$\epsilon$ can mitigate this sub-optimality to some extent, they lead to unstable training in turn. Theoretical and numerical analyses also reveal that the loss landscape of $l_0$ adversarial training is more craggy than its $l_\infty$, $l_2$ and $l_1$ counterparts, which exacerbates CO.
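To make the role of perturbation locations concrete, the following is a minimal sketch of a generic single-step sparse perturbation, not the attack used in this work. It assumes inputs scaled to $[0, 1]$ and a precomputed loss gradient; the function name `one_step_sparse_perturbation` and the top-$k$ selection rule are illustrative assumptions. Because the locations are fixed after one gradient evaluation, a poor choice of coordinates cannot be corrected later, which is the kind of sub-optimality the abstract refers to.

```python
def one_step_sparse_perturbation(x, grad, k):
    """Perturb at most k coordinates of x (values in [0, 1]) in a single step.

    Illustrative assumption: locations are the k coordinates with the largest
    gradient magnitude, and each chosen coordinate is pushed to the end of
    [0, 1] that increases the loss to first order.
    """
    # Rank coordinates by gradient magnitude, largest first.
    order = sorted(range(len(x)), key=lambda i: -abs(grad[i]))
    x_adv = list(x)
    for i in order[:k]:
        if grad[i] > 0:
            x_adv[i] = 1.0
        elif grad[i] < 0:
            x_adv[i] = 0.0
        # A zero gradient gives no direction, so the coordinate is left as is.
    return x_adv


def l0_distance(a, b):
    """Number of coordinates in which a and b differ."""
    return sum(1 for u, v in zip(a, b) if u != v)


x = [0.5, 0.2, 0.9, 0.4]
grad = [0.1, -3.0, 2.0, 0.0]
x_adv = one_step_sparse_perturbation(x, grad, k=2)
print(x_adv, l0_distance(x, x_adv))
```

The perturbation changes at most `k` entries, so it stays inside the $l_0$ ball of radius `k` by construction.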

To address this issue, we adopt soft labels and the trade-off loss function to smooth the adversarial loss landscape. Extensive experiments demonstrate that our method can overcome the challenge of CO, achieve state-of-the-art performance, and narrow the performance gap between $1$-step and multi-step adversarial training against sparse attacks.
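As a rough illustration of how soft labels and a trade-off loss combine, the sketch below computes a cross-entropy term on clean predictions against a smoothed target, plus a weighted KL term between clean and adversarial predictions. It is a sketch under stated assumptions: uniform label smoothing stands in for whatever soft-label scheme the method actually uses, and the function names and the default values of `alpha` and `beta` are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def smooth_label(label, num_classes, alpha):
    """Uniform label smoothing (an assumed stand-in for the soft labels)."""
    return [
        (1.0 - alpha) * (1.0 if c == label else 0.0) + alpha / num_classes
        for c in range(num_classes)
    ]


def cross_entropy(probs, target):
    """Cross-entropy between a target distribution and predicted probabilities."""
    return -sum(t * math.log(p) for p, t in zip(probs, target) if t > 0)


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def tradeoff_loss(clean_logits, adv_logits, label, alpha=0.1, beta=6.0):
    """Clean cross-entropy on soft labels plus beta times KL(clean || adversarial)."""
    p_clean = softmax(clean_logits)
    p_adv = softmax(adv_logits)
    target = smooth_label(label, len(clean_logits), alpha)
    return cross_entropy(p_clean, target) + beta * kl_divergence(p_clean, p_adv)


same = tradeoff_loss([2.0, 0.0, 0.0], [2.0, 0.0, 0.0], label=0)
shifted = tradeoff_loss([2.0, 0.0, 0.0], [0.0, 2.0, 0.0], label=0)
print(same, shifted)
```

When the adversarial prediction matches the clean one, the KL term vanishes and only the soft-label cross-entropy remains; any disagreement adds a penalty scaled by `beta`.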