Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#3011

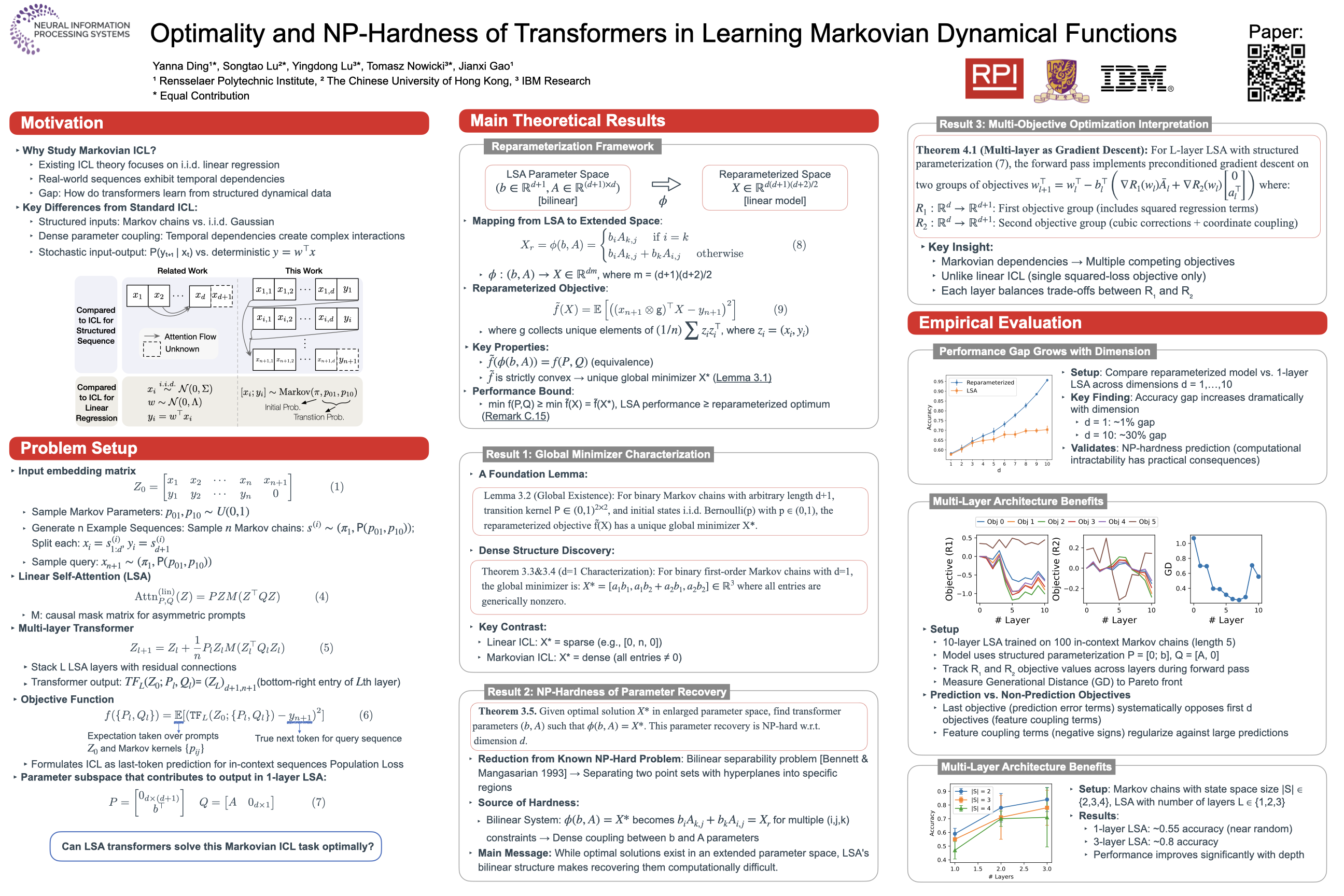

Optimality and NP-Hardness of Transformers in Learning Markovian Dynamical Functions

Abstract

Transformer architectures can solve unseen tasks based on input-output pairs in a given prompt due to in-context learning (ICL).

Existing theoretical studies on ICL have mainly focused on linear regression tasks, often with i.i.d. inputs. To understand how transformers express in-context learning when modeling dynamics-driven functions, we investigate Markovian function learning through a structured ICL setup, where we characterize the loss landscape to reveal underlying optimization behaviors.

Specifically, we:

- provide the closed-form expression of the global minimizer (in an enlarged parameter space) for a single-layer linear self-attention (LSA) model;

- prove that recovering transformer parameters that realize the optimal solution is NP-hard in general, revealing a fundamental limitation of one-layer LSA in representing structured dynamical functions; and

- supply a novel interpretation of a multilayer LSA as performing preconditioned gradient descent to optimize multiple objectives beyond the square loss.

These theoretical results are numerically validated using simplified transformers.