Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#4302

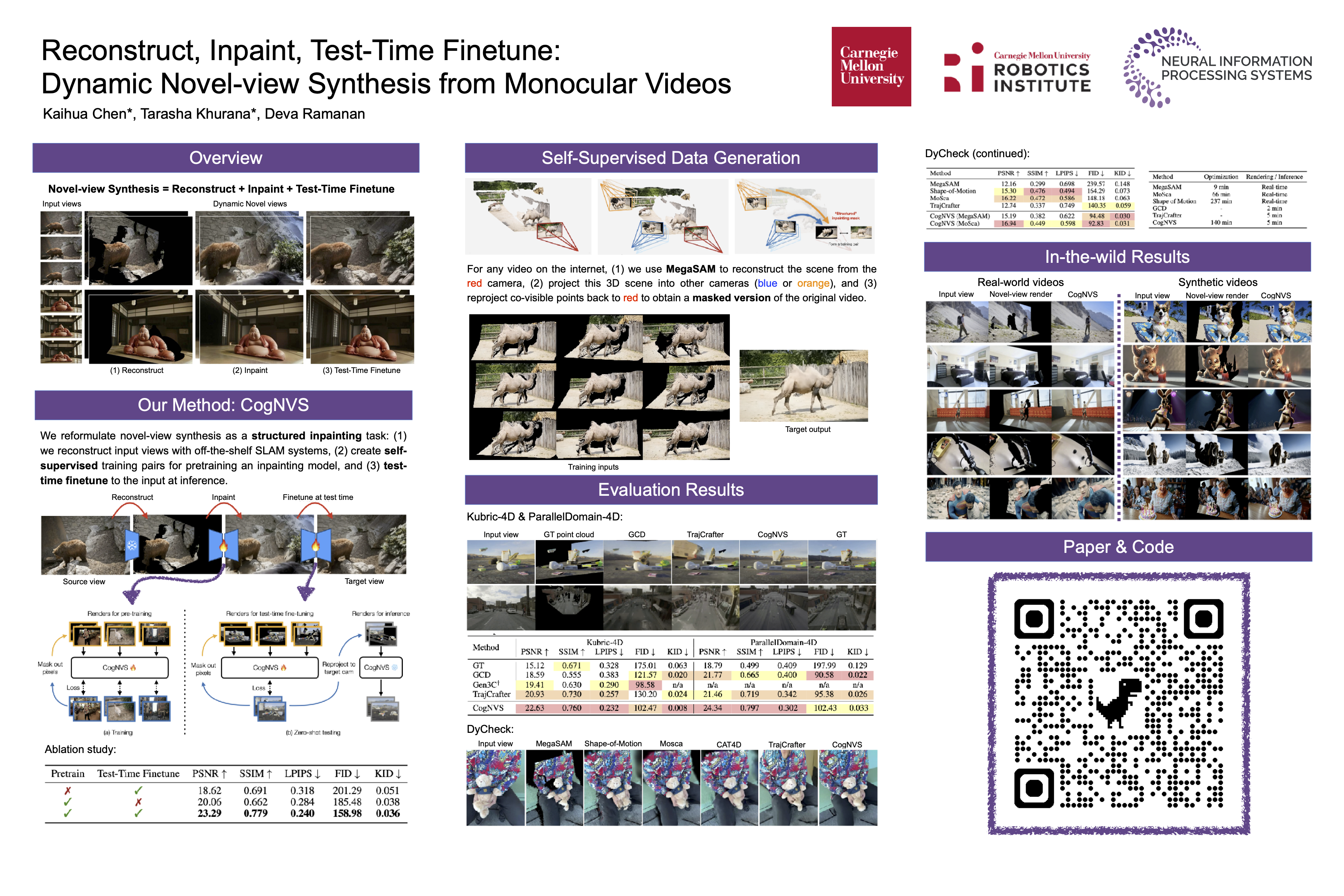

Reconstruct, Inpaint, Test-Time Finetune: Dynamic Novel-view Synthesis from Monocular Videos

Abstract

We explore novel-view synthesis for dynamic scenes from monocular videos. Prior approaches either rely on costly test-time optimization of 4D representations or, when trained in a feed-forward manner, do not preserve scene geometry.

Our approach is based on three key insights:

- Covisible pixels (those visible in both the input and target views) can be rendered by first reconstructing the dynamic 3D scene and then rendering the reconstruction from the novel views.

- Hidden pixels in novel views can be "inpainted" with feed-forward 2D video diffusion models.

- Our video inpainting diffusion model (CogNVS) can be self-supervised from 2D videos. This allows us to train it on a large corpus of in-the-wild videos and, in turn, to apply CogNVS zero-shot to novel test videos via test-time finetuning.
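The split between covisible and hidden pixels can be illustrated with a minimal sketch. It assumes a per-pixel depth map for one input frame, shared pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), a known relative pose (`R`, `t`) from the input camera to the target camera, and a target image of the same size. The hypothetical `forward_warp` splats each input pixel into the target view with a z-buffer. Target pixels that receive a point are covisible; the rest are the holes an inpainting model would fill. This is not the CogNVS implementation: a single depth map stands in for the dynamic 3D reconstruction, and the `inpaint` callable passed to the hypothetical `composite` stands in for the video diffusion model.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# Hypothetical helpers for illustration only: one depth map stands in for the
# dynamic 3D reconstruction, and `inpaint` stands in for a video diffusion model.
Image = List[List[Optional[float]]]  # grayscale; None marks a pixel nothing landed on


def forward_warp(
    colors: Sequence[Sequence[float]],
    depth: Sequence[Sequence[float]],
    fx: float, fy: float, cx: float, cy: float,
    R: Sequence[Sequence[float]],
    t: Sequence[float],
) -> Tuple[Image, List[List[bool]]]:
    """Splat every source pixel into the target view using a z-buffer.

    Returns the rendered target image (None where no point lands) and a mask
    that is True for covisible target pixels and False for hidden ones.
    """
    h, w = len(depth), len(depth[0])
    rendered: Image = [[None] * w for _ in range(h)]
    zbuf = [[float("inf")] * w for _ in range(h)]
    for v in range(h):
        for u in range(w):
            z = depth[v][u]
            if z <= 0:
                continue  # invalid depth: nothing to reproject
            # Unproject the source pixel to a 3D point in source-camera coordinates.
            p = ((u - cx) * z / fx, (v - cy) * z / fy, z)
            # Move the point into the target camera frame: q = R p + t.
            q = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
            if q[2] <= 0:
                continue  # point is behind the target camera
            # Project with the same intrinsics and snap to the nearest pixel.
            u2 = round(fx * q[0] / q[2] + cx)
            v2 = round(fy * q[1] / q[2] + cy)
            # Keep only the nearest surface so occluded points do not overwrite it.
            if 0 <= u2 < w and 0 <= v2 < h and q[2] < zbuf[v2][u2]:
                zbuf[v2][u2] = q[2]
                rendered[v2][u2] = colors[v][u]
    mask = [[px is not None for px in row] for row in rendered]
    return rendered, mask


def composite(
    rendered: Image, inpaint: Callable[[Image], List[List[float]]]
) -> List[List[float]]:
    """Keep covisible pixels from the render and take hidden ones from `inpaint`."""
    filled = inpaint(rendered)
    return [
        [r if r is not None else f for r, f in zip(row_r, row_f)]
        for row_r, row_f in zip(rendered, filled)
    ]
```

For example, with a 1x4 image at unit depth, `fx = fy = 1`, `cx = cy = 0`, an identity rotation, and `t = [1.0, 0.0, 0.0]`, every point shifts one pixel to the right: the leftmost target pixel receives no point and is marked hidden, while the rightmost source pixel falls outside the target frame.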

We empirically verify that CogNVS outperforms almost all prior art for novel-view synthesis of dynamic scenes from monocular videos.