Poster Session 5 · Friday, December 5, 2025 11:00 AM → 2:00 PM

#715

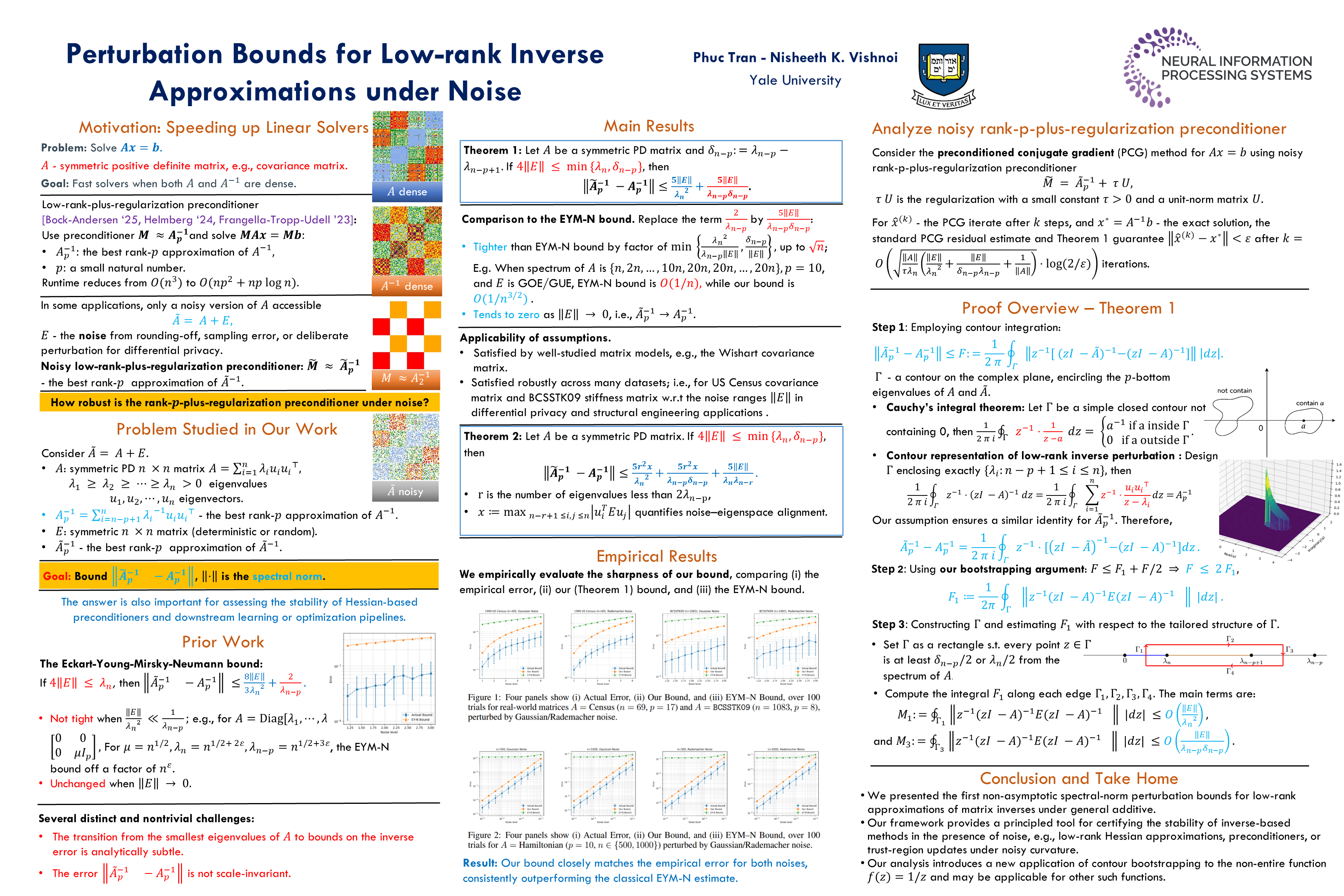

Perturbation Bounds for Low-Rank Inverse Approximations under Noise

Abstract

Low-rank pseudoinverses are widely used to approximate matrix inverses in scalable machine learning, optimization, and scientific computing. However, real-world matrices are often observed with noise, arising from sampling, sketching, and quantization. The spectral-norm robustness of low-rank inverse approximations remains poorly understood.

We systematically study the spectral-norm error $\|(\tilde{A}^{-1})_p - A_p^{-1}\|$ for an $n \times n$ symmetric matrix $A$, where $A_p^{-1}$ denotes the best rank-$p$ approximation of $A^{-1}$, and $\tilde{A} = A + E$ is a noisy observation. Under mild assumptions on the noise, we derive sharp non-asymptotic perturbation bounds that reveal how the error scales with the eigengap, spectral decay, and noise alignment with low-curvature directions of $A$.
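To make the quantity under study concrete, the following is a minimal numerical sketch, not the authors' code. It assumes the notation $A$ for the clean symmetric matrix, $E$ for a symmetric noise matrix, and $p$ for the target rank, and it builds the best rank-$p$ approximation of the inverse from the $p$ eigenvalues of the matrix that are smallest in magnitude. The function names `low_rank_inverse` and `low_rank_inverse_error`, the test spectrum, and the noise scale are all hypothetical choices made for illustration.

```python
import numpy as np


def low_rank_inverse(A, p):
    """Best rank-p approximation of inv(A) for a symmetric, invertible A."""
    eigvals, eigvecs = np.linalg.eigh(A)
    # Eigenvalues of inv(A) are 1/lambda_i, so its p largest-magnitude
    # eigenvalues come from the p smallest-magnitude eigenvalues of A.
    idx = np.argsort(np.abs(eigvals))[:p]
    V = eigvecs[:, idx]
    # Equivalent to V @ diag(1 / eigvals[idx]) @ V.T
    return (V / eigvals[idx]) @ V.T


def low_rank_inverse_error(A, E, p):
    """Spectral-norm gap between the rank-p inverses of A + E and A."""
    diff = low_rank_inverse(A + E, p) - low_rank_inverse(A, p)
    return np.linalg.norm(diff, ord=2)


# Illustrative setup (assumed, not from the source): a positive definite
# matrix with eigenvalues spread over [1, 10] and small symmetric noise.
rng = np.random.default_rng(0)
n, p = 50, 5
Q, _ = np.linalg.qr(rng.standard_normal((n, n)))
spectrum = np.linspace(1.0, 10.0, n)
A = (Q * spectrum) @ Q.T

G = rng.standard_normal((n, n))
E = 1e-3 * (G + G.T) / 2

err = low_rank_inverse_error(A, E, p)
print(f"rank-{p} inverse perturbation error: {err:.3e}")
```

Taking $p = n$ recovers the full inverse, which gives a quick sanity check on the helper. The eigenvalues kept here are the small ones of the matrix, which is why noise aligned with low-curvature directions matters for this error.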

Our analysis introduces a novel application of contour integral techniques to the non-entire function $f(z) = 1/z$, yielding bounds that improve over naive adaptations of classical full-inverse bounds by up to a factor of $\sqrt{n}$.
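For orientation, the standard contour representation behind this kind of argument can be sketched as follows. This is a textbook identity under stated assumptions, not necessarily the exact form used in the paper. The assumptions are: $A$ is symmetric positive definite, $\tilde{A} = A + E$ is the noisy matrix, and $\Gamma$ is a hypothetical closed contour that encloses exactly the $p$ smallest eigenvalues of both $A$ and $\tilde{A}$ while excluding the origin, where $1/z$ has its pole.

```latex
% Rank-p inverse as a contour integral of f(z) = 1/z against the resolvent
A_p^{-1} = \frac{1}{2\pi i} \oint_{\Gamma} \frac{1}{z}\,(zI - A)^{-1}\,dz

% Resolvent identity for \tilde{A} = A + E
(zI - \tilde{A})^{-1} - (zI - A)^{-1}
  = (zI - \tilde{A})^{-1}\,E\,(zI - A)^{-1}

% Perturbation of the rank-p inverse, obtained by combining the two lines
(\tilde{A}^{-1})_p - A_p^{-1}
  = \frac{1}{2\pi i} \oint_{\Gamma} \frac{1}{z}\,
    (zI - \tilde{A})^{-1}\,E\,(zI - A)^{-1}\,dz
```

Because $1/z$ is not entire, the contour must stay away from the origin, so the smallest eigenvalue and the eigengap at position $p$ both constrain how $\Gamma$ can be drawn.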

Empirically, our bounds closely track the true perturbation error across a variety of real-world and synthetic matrices, while estimates based on classical results tend to significantly overpredict. These findings offer practical, spectrum-aware guarantees for low-rank inverse approximations in noisy computational environments.