Poster Session 1 · Wednesday, December 3, 2025 11:00 AM → 2:00 PM

#512

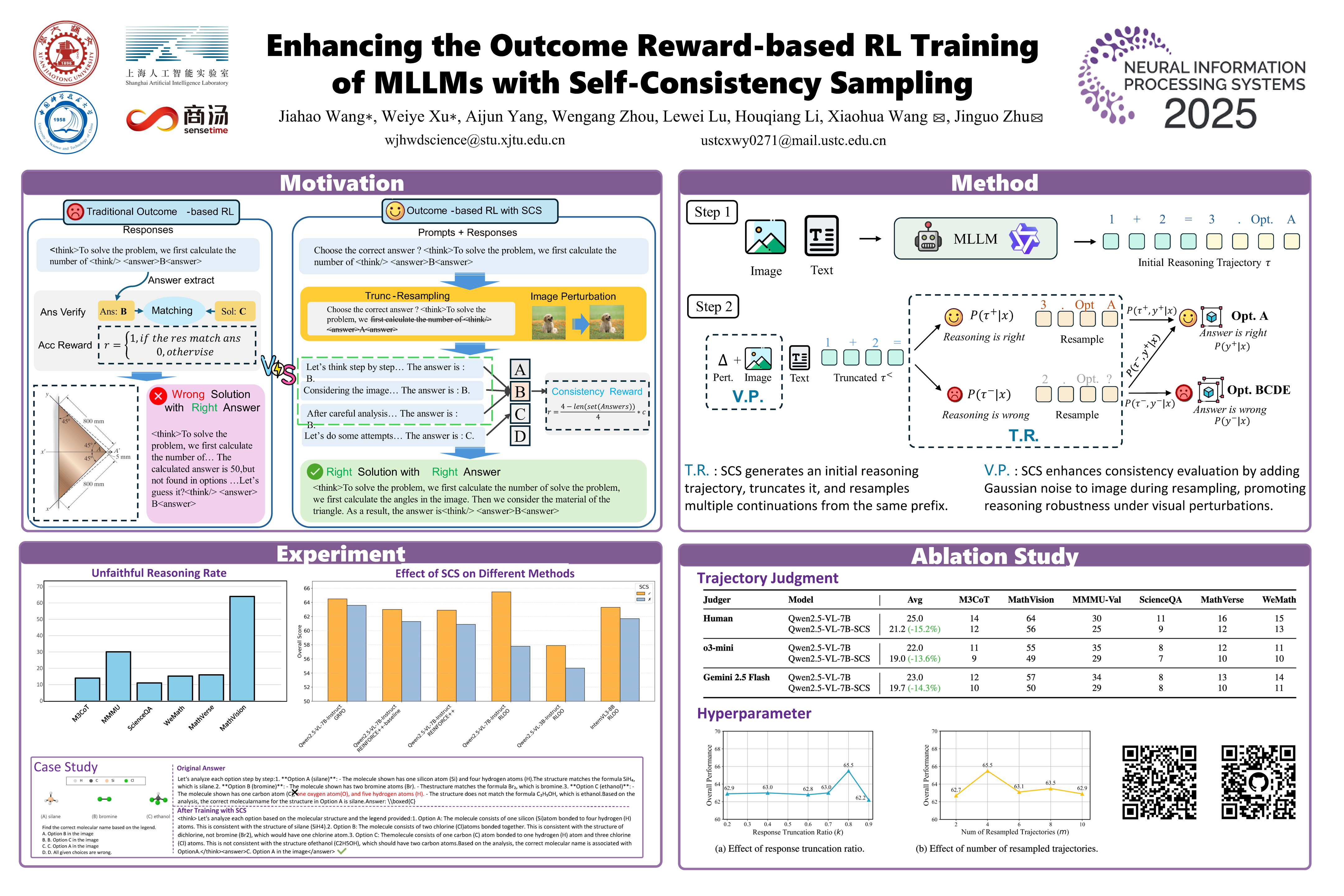

Enhancing the Outcome Reward-based RL Training of MLLMs with Self-Consistency Sampling

Abstract

Outcome-reward reinforcement learning (RL) is a common and increasingly significant way to refine the step-by-step reasoning of multimodal large language models (MLLMs). In the multiple-choice setting, a dominant format for multimodal reasoning benchmarks, the paradigm faces a serious yet often overlooked obstacle: unfaithful trajectories that guess the correct option after a faulty chain of thought receive the same reward as genuine reasoning.

We propose Self-Consistency Sampling (SCS) to correct this issue. For each question, SCS (i) introduces small visual perturbations and (ii) performs repeated truncation-and-resampling of a reference trajectory; agreement among the resulting trajectories yields a differentiable consistency score that down-weights unreliable traces during policy updates.
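As a rough illustration of the down-weighting idea, the sketch below scores a trajectory by how often its resampled continuations reach the same final option, then scales the outcome reward by that score. It is an assumption-laden stand-in, not the authors' formulation: the abstract describes a differentiable score, whereas this uses a plain discrete agreement fraction, and the names `consistency_score` and `weighted_reward` are hypothetical.

```python
from collections import Counter


def consistency_score(answers):
    """Fraction of resampled trajectories that agree with the most common answer.

    `answers` holds the final options (e.g. "A", "B") reached by trajectories
    obtained from perturbed inputs and truncate-and-resample continuations.
    Assumption: a simple agreement fraction stands in for the paper's score.
    """
    if not answers:
        raise ValueError("need at least one resampled answer")
    _, top_count = Counter(answers).most_common(1)[0]
    return top_count / len(answers)


def weighted_reward(outcome_reward, answers):
    """Scale the outcome reward by the consistency score (hypothetical rule)."""
    return outcome_reward * consistency_score(answers)


# A trajectory whose resamples all land on "B" keeps its full reward,
# while one whose resamples scatter across options is down-weighted.
stable = weighted_reward(1.0, ["B", "B", "B", "B"])
lucky_guess = weighted_reward(1.0, ["B", "A", "C", "D"])
```

Under this toy rule, a correct answer reached by a lucky guess earns less reward than one that survives perturbation and resampling, which is the behavior the abstract attributes to SCS.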

Plugging SCS into RLOO, GRPO, and the REINFORCE++ series improves accuracy by up to 7.7 percentage points on six multimodal benchmarks with negligible extra computation, offering a simple, general remedy for outcome-reward RL in MLLMs.
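To show where a consistency-adjusted reward might enter one of these algorithms, here is a minimal sketch of the standard RLOO leave-one-out advantage, in which each sample's baseline is the mean reward of the other samples for the same question. The input rewards are assumed to have already been scaled by some consistency score; how SCS actually combines with each algorithm is not specified in the abstract, and `rloo_advantages` is a hypothetical helper name.

```python
def rloo_advantages(rewards):
    """Leave-one-out advantages: reward minus the mean of the other rewards.

    `rewards` are per-trajectory rewards for one question. Assumption: they
    were already multiplied by a consistency score before being passed in.
    """
    n = len(rewards)
    if n < 2:
        raise ValueError("RLOO needs at least two samples per question")
    total = sum(rewards)
    return [r - (total - r) / (n - 1) for r in rewards]


# Three trajectories for one question: a consistent correct answer (1.0),
# a correct but inconsistent one (down-weighted to 0.25), and a wrong one (0.0).
advantages = rloo_advantages([1.0, 0.25, 0.0])
```

In this toy case the inconsistent trajectory ends up with a negative advantage even though its final answer was correct, so the policy update would no longer reinforce it.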