Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#3809

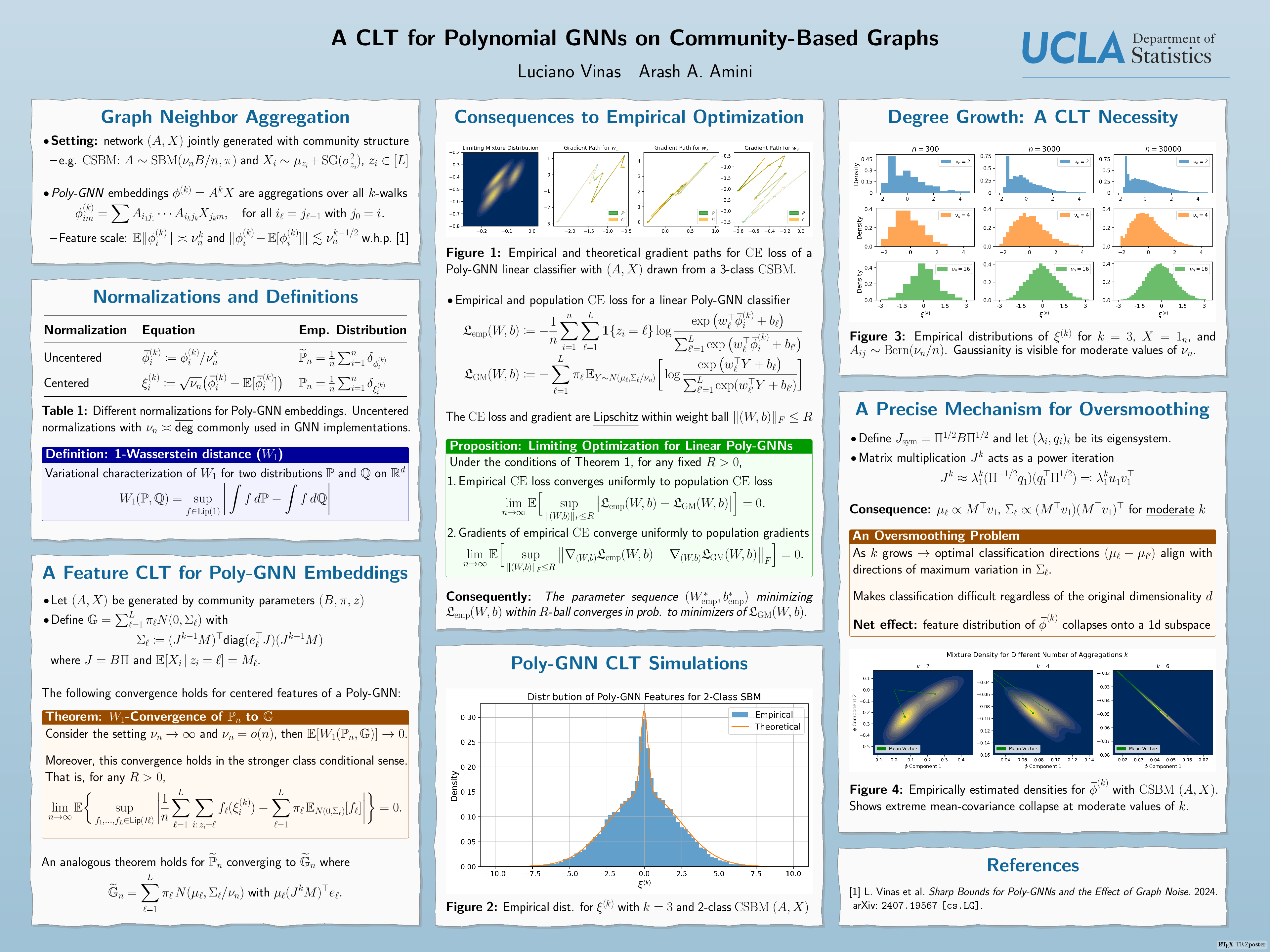

A CLT for Polynomial GNNs on Community-Based Graphs

Abstract

We consider the empirical distribution of the embeddings of a -layer polynomial GNN on a semi-supervised node classification task and prove a central limit theorem for them.

Assuming a community-based model for the underlying graph, with growing average degree , we show that the empirical distribution of the centered features, when scaled by converges in 1-Wasserstein distance to a centered stable mixture of multivariate normal distributions. In addition, the joint empirical distribution of uncentered features and labels, when normalized by approaches that of a mixture of multivariate normal distributions, with stable means and covariance matrices vanishing as . We explicitly identify the asymptotic means and covariances, showing that the mixture collapses towards a 1-D version as is increased.

Our results provide a precise and nuanced lens on how oversmoothing presents itself in the large-graph limit, in the sparse regime. In particular, we show that training with cross-entropy on these embeddings is asymptotically equivalent to training on these nearly collapsed Gaussian mixtures.
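
To make the setting in the abstract concrete, the following is a minimal simulation sketch and not the authors' construction. It assumes a two-community stochastic block model as the community-based graph, Gaussian node features with hypothetical class means, and a polynomial filter of the form $\sum_k c_k (A/d)^k X$, where $d$ is the expected average degree. The function names `sample_sbm` and `polynomial_gnn`, the parameters `a` and `b`, and every numeric value are illustrative assumptions.

```python
import numpy as np


def sample_sbm(n, a, b, rng):
    """Sample a two-community SBM (an assumed stand-in for the graph model).

    Nodes in the same community connect with probability a/n, nodes in
    different communities with probability b/n. Returns (A, labels).
    """
    labels = np.where(np.arange(n) < n // 2, 1.0, -1.0)
    same = labels[:, None] == labels[None, :]
    probs = np.where(same, a / n, b / n)
    # Draw the strict upper triangle, then mirror it: symmetric, no self-loops.
    upper = np.triu(rng.random((n, n)) < probs, k=1)
    A = (upper | upper.T).astype(float)
    return A, labels


def polynomial_gnn(A, X, coeffs, d):
    """Return sum_k coeffs[k] * (A / d)^k @ X (assumed form of the filter)."""
    out = np.zeros_like(X)
    H = X.copy()
    for c in coeffs:
        out += c * H
        H = A @ H / d  # one more propagation step, normalized by d
    return out


rng = np.random.default_rng(0)
n, a, b = 1200, 80.0, 20.0
d = (a + b) / 2  # expected average degree of this SBM

A, labels = sample_sbm(n, a, b, rng)

# Hypothetical features: class-dependent mean plus standard Gaussian noise.
mu = np.array([1.0, 0.5])
X = labels[:, None] * mu + rng.standard_normal((n, 2))

# A pure 3-hop filter, i.e. coefficients (0, 0, 0, 1).
Z = polynomial_gnn(A, X, coeffs=[0.0, 0.0, 0.0, 1.0], d=d)

# Per-community empirical mean and covariance of the embeddings.
for c in (1.0, -1.0):
    Zc = Z[labels == c]
    print("community", c)
    print("  mean:", Zc.mean(axis=0))
    print("  cov:\n", np.cov(Zc.T))
```

Increasing the number of propagation steps (a longer `coeffs` list) or the degree parameters `a` and `b` gives a rough empirical view of how the per-community means and covariances behave. The sketch makes no attempt to reproduce the exact scalings or limits stated in the abstract.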