Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#2516 Spotlight

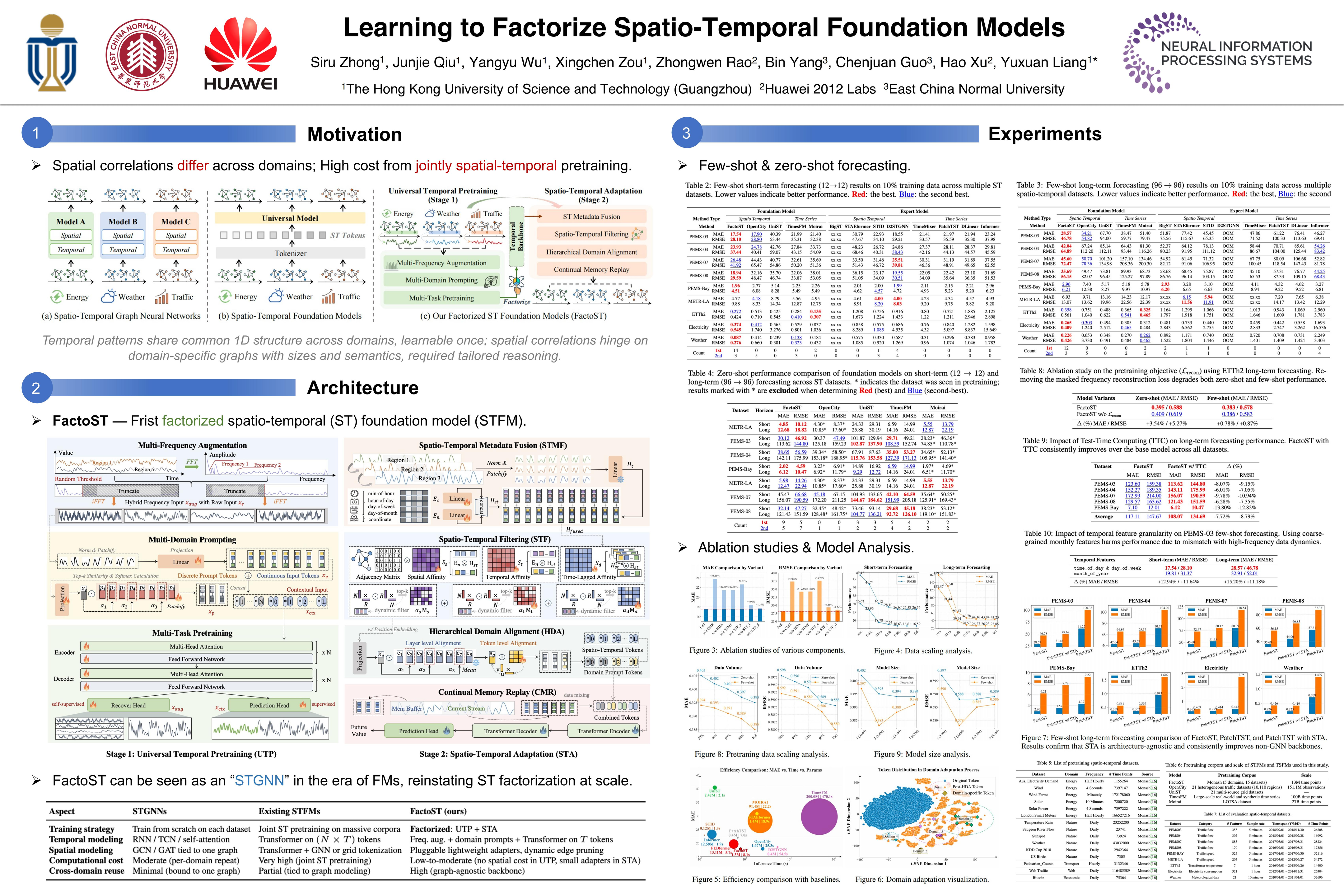

Learning to Factorize Spatio-Temporal Foundation Models

Abstract

Spatio-Temporal Foundation Models (STFMs) promise zero- and few-shot generalization across datasets, yet joint spatio-temporal pretraining is computationally prohibitive and struggles with domain-specific spatial correlations.

To this end, we introduce FactoST, a factorized STFM that decouples universal temporal pretraining from spatio-temporal adaptation. The first stage pretrains a space-agnostic backbone with multi-frequency reconstruction and domain-aware prompting, capturing cross-domain temporal regularities at low computational cost. The second stage freezes or further fine-tunes the backbone and attaches an adapter that fuses spatial metadata, sparsifies interactions, and aligns domains with continual memory replay.
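The two-stage split described above can be illustrated with a toy sketch. It is not the FactoST implementation: the shared linear autoregressive filter, the scalar gate, and every function name here (`pretrain_backbone`, `top_k_neighbours`, `adapt`) are hypothetical stand-ins, and multi-frequency reconstruction, domain-aware prompting, and memory replay are omitted. The sketch only shows the factorization itself, using the frozen-backbone variant: stage 1 never sees spatial information, and stage 2 trains only a small adapter over sparsified neighbours.

```python
import math

def backbone_predict(w, history):
    """Space-agnostic forecast: uses only one node's own recent values."""
    lag = len(w)
    return sum(wi * xi for wi, xi in zip(w, history[-lag:]))

def pretrain_backbone(series_list, lag=2, lr=0.05, epochs=50):
    """Stage 1 (stand-in): fit one shared temporal filter over all series,
    ignoring which node or location each series came from."""
    w = [0.0] * lag
    for _ in range(epochs):
        for s in series_list:
            for t in range(lag, len(s)):
                err = backbone_predict(w, s[:t]) - s[t]
                w = [wi - lr * err * xi for wi, xi in zip(w, s[t - lag:t])]
    return w

def top_k_neighbours(coords, k):
    """Sparsify interactions: keep only the k nearest nodes for each node."""
    nbrs = []
    for i, ci in enumerate(coords):
        others = sorted((j for j in range(len(coords)) if j != i),
                        key=lambda j: math.dist(ci, coords[j]))
        nbrs.append(others[:k])
    return nbrs

def adapt(w, node_series, coords, k=1, lr=0.05, epochs=50):
    """Stage 2 (stand-in): w is read but never updated (frozen backbone);
    only a scalar gate mixing in neighbour forecasts is trained."""
    nbrs = top_k_neighbours(coords, k)
    lag, gate = len(w), 0.0
    for _ in range(epochs):
        for t in range(lag, len(node_series[0])):
            base = [backbone_predict(w, s[:t]) for s in node_series]
            for i, s in enumerate(node_series):
                spatial = sum(base[j] for j in nbrs[i]) / k - base[i]
                err = base[i] + gate * spatial - s[t]
                gate -= lr * err * spatial
    return gate, nbrs

# Synthetic data: three nodes, two of them spatially close (made-up values).
series = [[math.sin(0.3 * t + p) for t in range(40)] for p in (0.0, 0.1, 2.0)]
coords = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0)]

w = pretrain_backbone(series)
frozen = list(w)                      # snapshot to confirm stage 2 leaves w alone
gate, nbrs = adapt(w, series, coords, k=1)
```

Because `adapt` only reads `w`, the temporal filter learned in stage 1 can be reused unchanged for a new set of nodes; only the gate and the neighbour lists depend on the target domain's spatial metadata.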

Extensive forecasting experiments show that, in the few-shot setting, FactoST reduces MAE by up to 46.4% versus UniST, uses 46.2% fewer parameters, and achieves 68% faster inference than OpenCity, while remaining competitive with expert models.
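For reference, MAE over $N$ forecast points and a relative reduction can be written as follows. The first line is the standard definition; the second assumes the reported percentage is the usual relative reduction against the baseline, which the abstract does not state explicitly.

$$
\mathrm{MAE} = \frac{1}{N}\sum_{i=1}^{N}\lvert y_i - \hat{y}_i\rvert,
\qquad
\text{reduction} = \frac{\mathrm{MAE}_{\text{baseline}} - \mathrm{MAE}_{\text{FactoST}}}{\mathrm{MAE}_{\text{baseline}}} \times 100\%
$$

Under that reading, a 46.4% reduction would mean the FactoST error is 53.6% of the baseline error in the best case.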

We believe this factorized view offers a practical and scalable path toward truly universal STFMs. The code will be released upon notification.