Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#3006

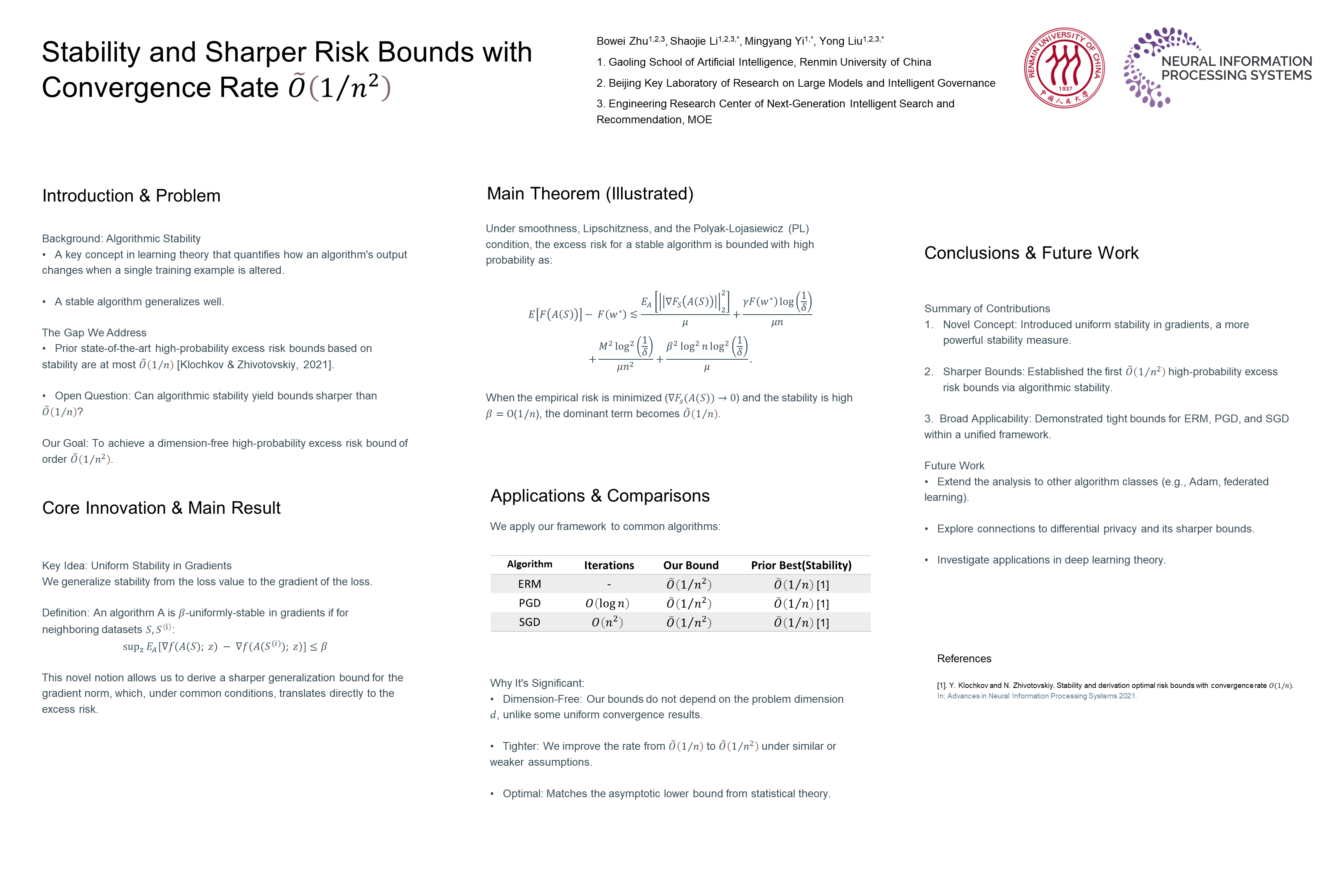

Stability and Sharper Risk Bounds with Convergence Rate $O(1/n^2)$

Abstract

Prior work (Klochkov & Zhivotovskiy, 2021) uses algorithmic stability to establish high-probability excess risk bounds for strongly convex learners. Those bounds are of order at best $O(1/n)$, up to logarithmic factors.
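The following toy example illustrates what algorithmic stability measures. It is not the paper's method. It fits ridge regression, a strongly convex learner chosen here as an assumption, on a dataset S and on a neighbor S^(i) that differs in one example. It then reports the average change in the fitted parameters. The helper names `ridge_fit` and `replace_one_stability` and all constants are hypothetical. Uniform stability is a worst case over datasets and replaced indices, so this average over random draws is only an empirical proxy. It should still shrink roughly like 1/n, which is the behavior that stability-based excess risk bounds build on.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimize (1/2n)||Xw - y||^2 + (lam/2)||w||^2 in closed form (lam-strongly convex)."""
    n, d = X.shape
    A = X.T @ X / n + lam * np.eye(d)
    b = X.T @ y / n
    return np.linalg.solve(A, b)

def replace_one_stability(n, d=5, lam=0.1, trials=20, seed=0):
    """Average ||w_S - w_{S^(i)}|| when one training example is replaced.

    Hypothetical helper: an empirical proxy for parameter stability,
    not the worst-case uniform stability used in the bounds.
    """
    rng = np.random.default_rng(seed)
    w_true = rng.normal(size=d)
    diffs = []
    for _ in range(trials):
        X = rng.normal(size=(n, d))
        y = X @ w_true + 0.1 * rng.normal(size=n)
        w = ridge_fit(X, y, lam)
        # Neighboring dataset S^(i): swap the first example for a fresh draw.
        X2, y2 = X.copy(), y.copy()
        X2[0] = rng.normal(size=d)
        y2[0] = X2[0] @ w_true + 0.1 * rng.normal()
        w2 = ridge_fit(X2, y2, lam)
        diffs.append(np.linalg.norm(w - w2))
    return float(np.mean(diffs))

for n in [100, 400, 1600]:
    print(n, replace_one_stability(n))
```

Increasing `lam`, which strengthens convexity, should shrink the change further. This is consistent with the familiar 1/(lam * n)-type stability of strongly convex regularized ERM.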

We show that, under similar common assumptions (the Polyak-Łojasiewicz condition, smoothness, and Lipschitz continuity of the losses), high-probability excess risk rates as fast as $O(1/n^2)$, up to logarithmic factors, are achievable.
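For reference, the block below gives one standard way to write the three assumptions. The notation ($F_S$, $\mu$, $\beta$, $G$) is ours. The paper may impose the PL condition on a different objective (for example the population risk) or state the assumptions with different constants. Every $\mu$-strongly convex function satisfies the PL inequality with the same $\mu$, but PL functions need not be convex. PL is therefore a relaxation of the strongly convex setting.

```latex
% Empirical risk over a sample S = \{z_1, \dots, z_n\}
F_S(w) = \frac{1}{n} \sum_{i=1}^{n} f(w; z_i)

% Polyak-Lojasiewicz (PL) condition with parameter \mu > 0
\tfrac{1}{2} \, \|\nabla F_S(w)\|^2 \;\ge\; \mu \, \bigl( F_S(w) - \inf_{w'} F_S(w') \bigr)

% \beta-smoothness of each loss
\|\nabla f(w; z) - \nabla f(w'; z)\| \;\le\; \beta \, \|w - w'\|

% G-Lipschitz continuity of each loss
|f(w; z) - f(w'; z)| \;\le\; G \, \|w - w'\|
```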

To our knowledge, our analysis also yields the tightest high-probability bounds on the generalization gap measured by gradients (the difference between empirical and population gradients) in nonconvex settings.
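The gradient generalization gap is $\|\nabla F_S(w) - \nabla F(w)\|$. The sketch below estimates it for a hypothetical nonconvex model: a linear predictor under the sigmoid loss $1/(1 + \exp(y \langle w, x \rangle))$. It approximates $\nabla F(w)$ with a large Monte Carlo sample. It evaluates the gap only at one fixed, randomly drawn $w$. The paper's high-probability bounds concern the gradients at learned parameters and are not reproduced here. The names `sigmoid_loss_grad`, `sample`, and `gradient_gap`, the data model, and all constants are illustrative assumptions.

```python
import numpy as np

def sigmoid_loss_grad(w, X, y):
    """Average gradient of the nonconvex sigmoid loss 1 / (1 + exp(y * <w, x>))."""
    margins = y * (X @ w)
    s = 1.0 / (1.0 + np.exp(margins))  # per-example loss value
    # d/dm [1 / (1 + e^m)] = -s * (1 - s), and dm/dw = y * x.
    coeff = -s * (1.0 - s) * y
    return (coeff[:, None] * X).mean(axis=0)

def sample(n, w_star, rng):
    """Hypothetical data model: Gaussian inputs, noisy linear-threshold labels in {-1, +1}."""
    X = rng.normal(size=(n, w_star.size))
    y = np.where(X @ w_star + 0.5 * rng.normal(size=n) > 0, 1.0, -1.0)
    return X, y

def gradient_gap(n, d=5, m_pop=200_000, trials=20, seed=0):
    """Mean ||grad F_S(w) - grad F(w)|| at a fixed w, with grad F estimated by Monte Carlo."""
    rng = np.random.default_rng(seed)
    w_star = rng.normal(size=d)
    w = rng.normal(size=d)  # fixed evaluation point, not a trained model
    X_pop, y_pop = sample(m_pop, w_star, rng)
    g_pop = sigmoid_loss_grad(w, X_pop, y_pop)  # proxy for the population gradient
    gaps = []
    for _ in range(trials):
        X, y = sample(n, w_star, rng)
        gaps.append(np.linalg.norm(sigmoid_loss_grad(w, X, y) - g_pop))
    return float(np.mean(gaps))

for n in [100, 1_000, 10_000]:
    print(n, gradient_gap(n))
```

At a fixed $w$ this gap decays at roughly the Monte Carlo rate $1/\sqrt{n}$. Bounds that hold at a data-dependent output need more than this fixed-point calculation, which is where stability-type arguments are typically used.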