Poster Session 2 · Wednesday, December 3, 2025 4:30 PM → 7:30 PM

#904

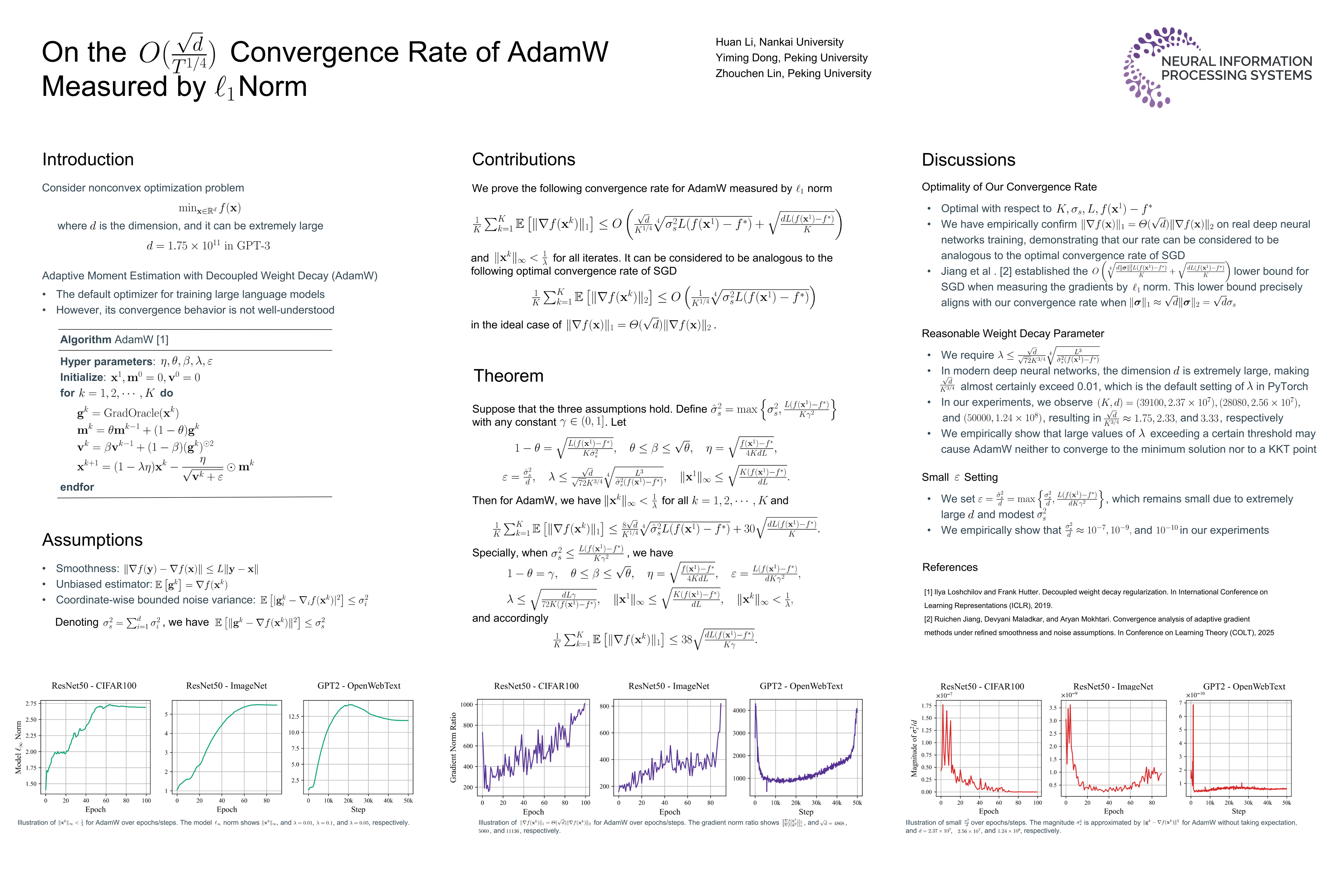

On the Convergence Rate of AdamW Measured by Norm

Abstract

As the default optimizer for training large language models, AdamW has achieved remarkable success in deep learning. However, its convergence behavior is not theoretically well-understood.

This paper establishes the convergence rate for AdamW measured by norm, where K represents the iteration number, d denotes the model dimension, and C matches the constant in the optimal convergence rate of SGD.

Theoretically, we have when each element of is generated from Gaussian distribution . Empirically, our experimental results on real-world deep learning tasks reveal . Both support that our convergence rate can be considered to be analogous to the optimal convergence rate of SGD.