Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#4211

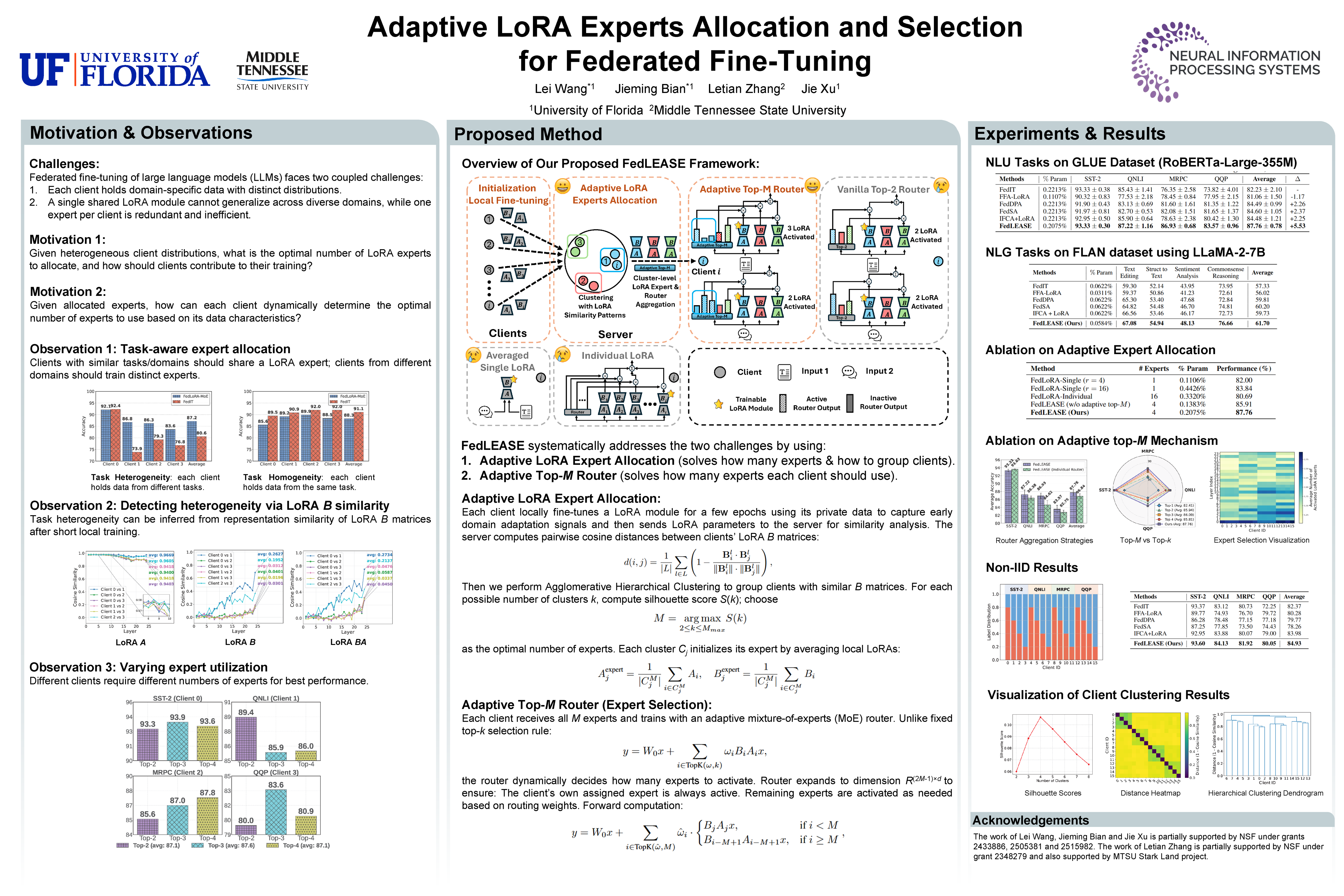

Adaptive LoRA Experts Allocation and Selection for Federated Fine-Tuning

Abstract

Large Language Models (LLMs) have demonstrated impressive capabilities across various tasks, but fine-tuning them for domain-specific applications often requires substantial domain-specific data that may be distributed across multiple organizations. Federated Learning (FL) offers a privacy-preserving solution, but faces challenges with computational constraints when applied to LLMs. Low-Rank Adaptation (LoRA) has emerged as a parameter-efficient fine-tuning approach, though a single LoRA module often struggles with heterogeneous data across diverse domains.

This paper addresses two critical challenges in federated LoRA fine-tuning:

- determining the optimal number and allocation of LoRA experts across heterogeneous clients, and

- enabling clients to selectively utilize these experts based on their specific data characteristics.

We propose FedLEASE (Federated adaptive LoRA Expert Allocation and SElection), a novel framework that adaptively clusters clients based on representation similarity to allocate and train domain-specific LoRA experts. It also introduces an adaptive top-M Mixture-of-Experts mechanism that allows each client to select the optimal number of utilized experts.
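To make the allocation step more concrete, the following is a minimal sketch of clustering clients by representation similarity and picking the number of experts from the data. The abstract does not specify the representation, the clustering algorithm, or the selection criterion, so everything here is an assumption for illustration: each client is summarized by a flat vector (for example, a flattened locally trained LoRA matrix), similarity is cosine distance, clustering is average-linkage agglomerative, and the number of clusters is the one with the highest mean silhouette score. All function names are hypothetical.

```python
import math


def cosine_distance(u, v):
    """1 - cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def agglomerative(dist, k):
    """Average-linkage agglomerative clustering, merged down to k clusters."""
    clusters = [[i] for i in range(len(dist))]
    while len(clusters) > k:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                pair_sum = sum(dist[i][j] for i in clusters[a] for j in clusters[b])
                d = pair_sum / (len(clusters[a]) * len(clusters[b]))
                if best is None or d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]  # safe because b > a
    return clusters


def silhouette(dist, clusters):
    """Mean silhouette score; singleton clusters contribute 0 by convention."""
    scores = []
    for ci, members in enumerate(clusters):
        for i in members:
            if len(members) == 1:
                scores.append(0.0)
                continue
            intra = sum(dist[i][j] for j in members if j != i) / (len(members) - 1)
            nearest = min(
                sum(dist[i][j] for j in other) / len(other)
                for oi, other in enumerate(clusters)
                if oi != ci
            )
            denom = max(intra, nearest)
            scores.append((nearest - intra) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


def allocate_experts(client_vectors, max_experts):
    """Return client-index clusters; one LoRA expert would be trained per cluster.

    Assumption: the cluster count is chosen by maximum silhouette score
    over k = 2 .. min(max_experts, n_clients - 1).
    """
    n = len(client_vectors)
    dist = [[cosine_distance(u, v) for v in client_vectors] for u in client_vectors]
    best = None
    for k in range(2, min(max_experts, n - 1) + 1):
        clusters = agglomerative(dist, k)
        score = silhouette(dist, clusters)
        if best is None or score > best[0]:
            best = (score, clusters)
    return best[1]


# Toy example: six clients whose vectors fall into three rough directions.
clients = [
    [1.0, 0.1], [0.9, 0.0],     # clients 0 and 1
    [0.0, 1.0], [0.1, 0.9],     # clients 2 and 3
    [-1.0, -1.0], [-0.9, -1.1], # clients 4 and 5
]
experts = allocate_experts(clients, max_experts=4)
```

In this toy setup the six clients are grouped into three clusters, so three experts would be allocated. In a federated deployment the server would run this step on whatever representations clients upload; the choice of distance and criterion is a design decision that the abstract leaves open.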

Our extensive experiments on diverse benchmark datasets demonstrate that FedLEASE significantly outperforms existing federated fine-tuning approaches in heterogeneous client settings while maintaining communication efficiency.