Poster Session 6 · Friday, December 5, 2025 4:30 PM → 7:30 PM

#2604

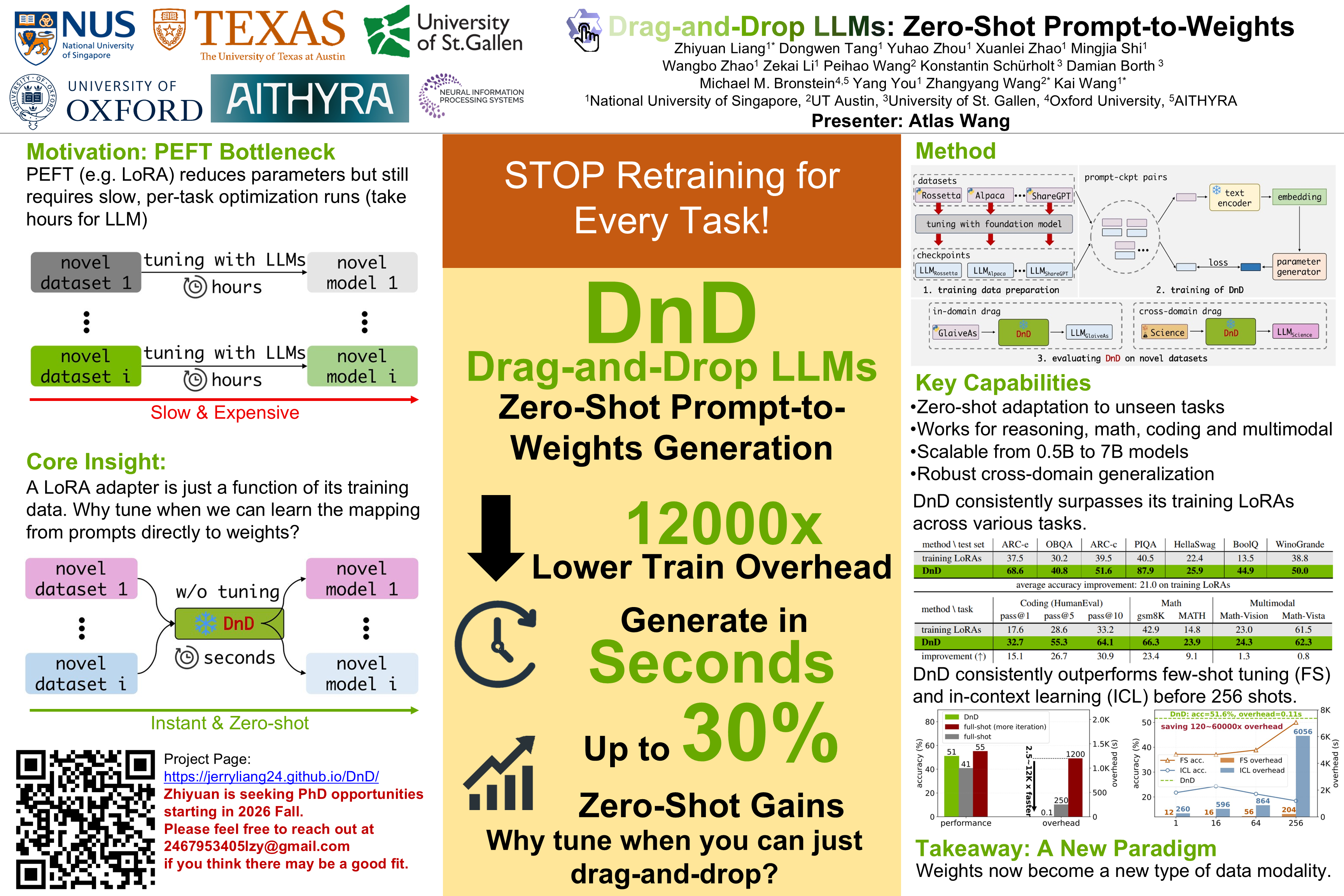

Drag-and-Drop LLMs: Zero-Shot Prompt-to-Weights

Abstract

Modern Parameter-Efficient Fine-Tuning (PEFT) methods such as low-rank adaptation (LoRA) reduce the cost of customizing large language models (LLMs), yet still require a separate optimization run for every downstream dataset.
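To make the cost argument concrete, the following is a minimal sketch of a LoRA update, assuming the usual formulation in which a frozen weight matrix W (d_out × d_in) is adapted as W + scale · B · A, with B (d_out × r) and A (r × d_in). The function names, the `scale` parameter, and the example dimensions are illustrative assumptions, not taken from the DnD project.

```python
def lora_param_counts(d_out, d_in, rank):
    """Return (full, lora) trainable-parameter counts for one weight matrix."""
    full = d_out * d_in              # every entry of W is trainable
    lora = rank * (d_in + d_out)     # only the factors B and A are trainable
    return full, lora


def apply_lora(W, B, A, scale=1.0):
    """Return W + scale * (B @ A) using plain nested lists.

    W: d_out x d_in, B: d_out x r, A: r x d_in (shapes are assumed, not checked).
    """
    rank = len(A)
    return [
        [
            W[i][j] + scale * sum(B[i][k] * A[k][j] for k in range(rank))
            for j in range(len(W[0]))
        ]
        for i in range(len(W))
    ]
```

For a hypothetical 4096 × 4096 layer at rank 8, `lora_param_counts(4096, 4096, 8)` gives 16,777,216 full parameters against 65,536 LoRA parameters. The saving is large, but B and A still have to be found by an optimization run for each dataset.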

We introduce Drag-and-Drop LLMs (DnD), a prompt-conditioned parameter generator that eliminates per-task training by mapping a handful of unlabeled task prompts directly to LoRA weight updates. A lightweight text encoder distills each prompt batch into condition embeddings, which are then transformed by a cascaded hyper-convolutional decoder into the full set of LoRA matrices.
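The data flow described above (prompts, then condition embeddings, then LoRA matrices) can be sketched as a toy pipeline. This is only an illustration under strong assumptions: the hashed bag-of-words encoder stands in for the lightweight text encoder, a single randomly initialized linear map stands in for the cascaded hyper-convolutional decoder, and all names (`encode_prompt`, `condition_embedding`, `ToyLoRAGenerator`) are hypothetical. In the real system the generator would be trained on prompt-checkpoint pairs rather than left random.

```python
import random
import zlib

EMBED_DIM = 16  # assumed embedding size, chosen only for illustration


def encode_prompt(prompt, dim=EMBED_DIM):
    """Toy stand-in for a text encoder: hashed bag-of-words, normalized to sum to 1."""
    vec = [0.0] * dim
    for word in prompt.lower().split():
        # crc32 is used because it is deterministic across Python processes
        vec[zlib.crc32(word.encode("utf-8")) % dim] += 1.0
    total = sum(vec) or 1.0
    return [v / total for v in vec]


def condition_embedding(prompts):
    """Mean-pool the per-prompt embeddings into one condition vector for the batch."""
    embs = [encode_prompt(p) for p in prompts]
    return [sum(col) / len(embs) for col in zip(*embs)]


class ToyLoRAGenerator:
    """Maps a condition vector to LoRA factors B (d_out x r) and A (r x d_in).

    A single linear layer with random weights; a placeholder for a trained decoder.
    """

    def __init__(self, d_out, d_in, rank, embed_dim=EMBED_DIM, seed=0):
        rng = random.Random(seed)
        self.d_out, self.d_in, self.rank = d_out, d_in, rank
        n_outputs = rank * (d_in + d_out)
        self.weights = [
            [rng.gauss(0.0, 0.02) for _ in range(embed_dim)]
            for _ in range(n_outputs)
        ]

    def generate(self, prompts):
        """One forward pass, no gradient steps: prompts in, LoRA factors out."""
        cond = condition_embedding(prompts)
        flat = [sum(w * c for w, c in zip(row, cond)) for row in self.weights]
        split = self.d_out * self.rank
        b_flat, a_flat = flat[:split], flat[split:]
        B = [b_flat[i * self.rank:(i + 1) * self.rank] for i in range(self.d_out)]
        A = [a_flat[i * self.d_in:(i + 1) * self.d_in] for i in range(self.rank)]
        return B, A
```

Calling `ToyLoRAGenerator(d_out=6, d_in=4, rank=2).generate(["Solve 2+2", "What is 3*5?"])` returns a 6 × 2 matrix B and a 2 × 4 matrix A. The point of the sketch is that adaptation becomes a single forward pass over unlabeled prompts, with no per-task optimization loop.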

Once trained on a diverse collection of prompt-checkpoint pairs, DnD produces task-specific parameters in seconds, yielding

- up to 12,000× lower overhead than full fine-tuning,

- average gains up to 30% in performance over the strongest training LoRAs on unseen common-sense reasoning, math, coding, and multimodal benchmarks, and

- robust cross-domain generalization, improving performance by 40% without access to the target data or labels.

Our results demonstrate that prompt-conditioned parameter generation is a viable alternative to gradient-based adaptation for rapidly specializing LLMs. We open source our project in support of future research.