Poster Session 3 · Thursday, December 4, 2025 11:00 AM → 2:00 PM

#1006

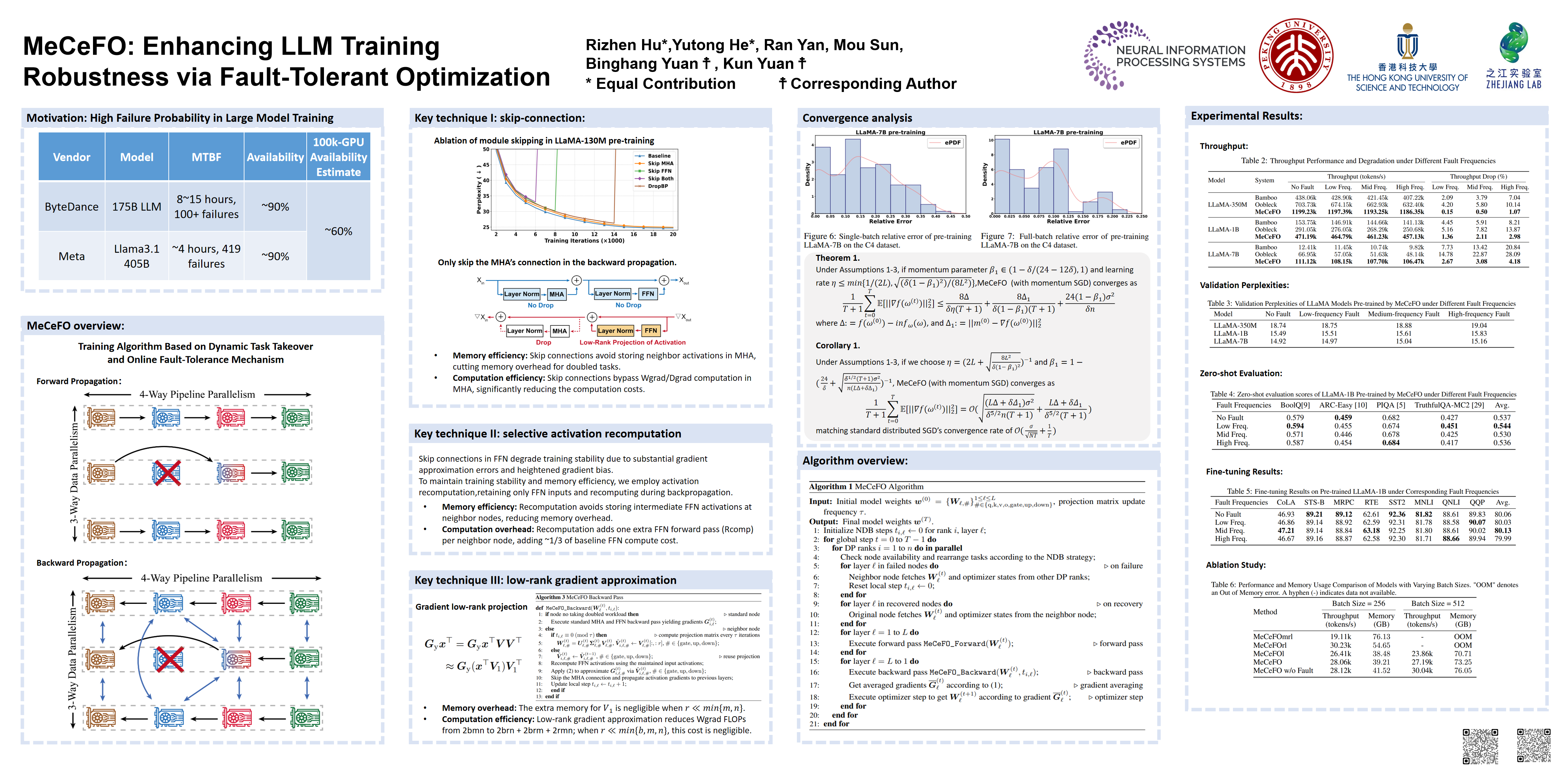

MeCeFO: Enhancing LLM Training Robustness via Fault-Tolerant Optimization

Abstract

As distributed optimization scales to meet the demands of Large Language Model (LLM) training, hardware failures become increasingly non-negligible. Existing fault-tolerant training methods often introduce significant computational or memory overhead, demanding additional resources.

To address this challenge, we propose Memory- and Computation-efficient Fault-tolerant Optimization (MeCeFO), a novel algorithm that ensures robust training with minimal overhead. When a computing node fails, MeCeFO seamlessly transfers its training task to a neighboring node, and it applies memory- and computation-efficient algorithmic optimizations to minimize the extra workload on the neighboring node, which now handles both tasks.
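The task hand-off described above can be pictured with a minimal sketch. The abstract does not say how a "neighboring node" is chosen, so the ring ordering used here (the next alive rank takes over) and the function name `reassign_tasks` are assumptions made purely for illustration, not the scheme used by MeCeFO.

```python
def reassign_tasks(num_nodes, failed):
    """Map each alive node to the list of training tasks it must run.

    Assumption (not from the abstract): nodes form a ring, and the task of a
    failed node moves to the next alive node in rank order.
    """
    failed = set(failed)
    alive = [rank for rank in range(num_nodes) if rank not in failed]
    if not alive:
        raise ValueError("all nodes have failed; no node can take over")

    assignments = {rank: [] for rank in alive}
    for task in range(num_nodes):
        owner = task
        # Walk around the ring until an alive node is found.
        while owner in failed:
            owner = (owner + 1) % num_nodes
        assignments[owner].append(task)
    return assignments


if __name__ == "__main__":
    # Node 1 fails, so node 2 handles its own task plus node 1's task.
    print(reassign_tasks(4, failed=[1]))  # {0: [0], 2: [1, 2], 3: [3]}
```

In this toy setting node 2 ends up with two tasks, which is the doubled workload that the efficiency techniques in the abstract are meant to reduce.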

MeCeFO leverages three key algorithmic designs:

- Skip-connection, which drops the multi-head attention (MHA) module during backpropagation for memory- and computation-efficient approximation;

- Recomputation, which reduces activation memory in feedforward networks (FFNs); and

- Low-rank gradient approximation, enabling efficient estimation of FFN weight matrix gradients.
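To make the third design concrete, here is a minimal NumPy sketch of one generic way to approximate the weight gradient of a linear layer with a low-rank matrix. The abstract does not specify how MeCeFO constructs its approximation, so the random orthonormal projection and the helper names (`orthonormal_basis`, `low_rank_weight_grad`) are assumptions for illustration only.

```python
import numpy as np


def full_weight_grad(x, grad_out):
    """Exact gradient of a linear layer y = x @ W with respect to W."""
    return x.T @ grad_out  # (d_in, d_out)


def orthonormal_basis(d_in, rank, rng):
    """Random (d_in, rank) matrix with orthonormal columns (assumed choice)."""
    q, _ = np.linalg.qr(rng.standard_normal((d_in, rank)))
    return q


def low_rank_weight_grad(x, grad_out, proj):
    """Rank-limited estimate proj @ proj.T @ (x.T @ grad_out).

    The product is evaluated as proj @ ((x @ proj).T @ grad_out), so the
    full (d_in, d_out) gradient is never formed before projection.
    """
    x_small = x @ proj              # (batch, rank)
    coeffs = x_small.T @ grad_out   # (rank, d_out)
    return proj @ coeffs            # (d_in, d_out), rank <= proj.shape[1]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((32, 64))         # layer inputs
    grad_out = rng.standard_normal((32, 16))  # gradient w.r.t. layer outputs
    proj = orthonormal_basis(64, 8, rng)
    approx = low_rank_weight_grad(x, grad_out, proj)
    print(approx.shape, np.linalg.matrix_rank(approx))
```

With a small rank, the two matrix products cost on the order of `batch * rank * (d_in + d_out)` multiplications instead of `batch * d_in * d_out`, which is where the computational saving in this sketch comes from.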

Theoretically, MeCeFO matches the convergence rate of conventional distributed training, with a rate of $\mathcal{O}(1/\sqrt{nT})$, where $n$ is the data parallelism size and $T$ is the number of iterations. Empirically, MeCeFO maintains robust performance under high failure rates, incurring only a 4.18% drop in throughput and demonstrating greater resilience than previous SOTA approaches.