Poster Session 5 · Friday, December 5, 2025 11:00 AM → 2:00 PM

#305

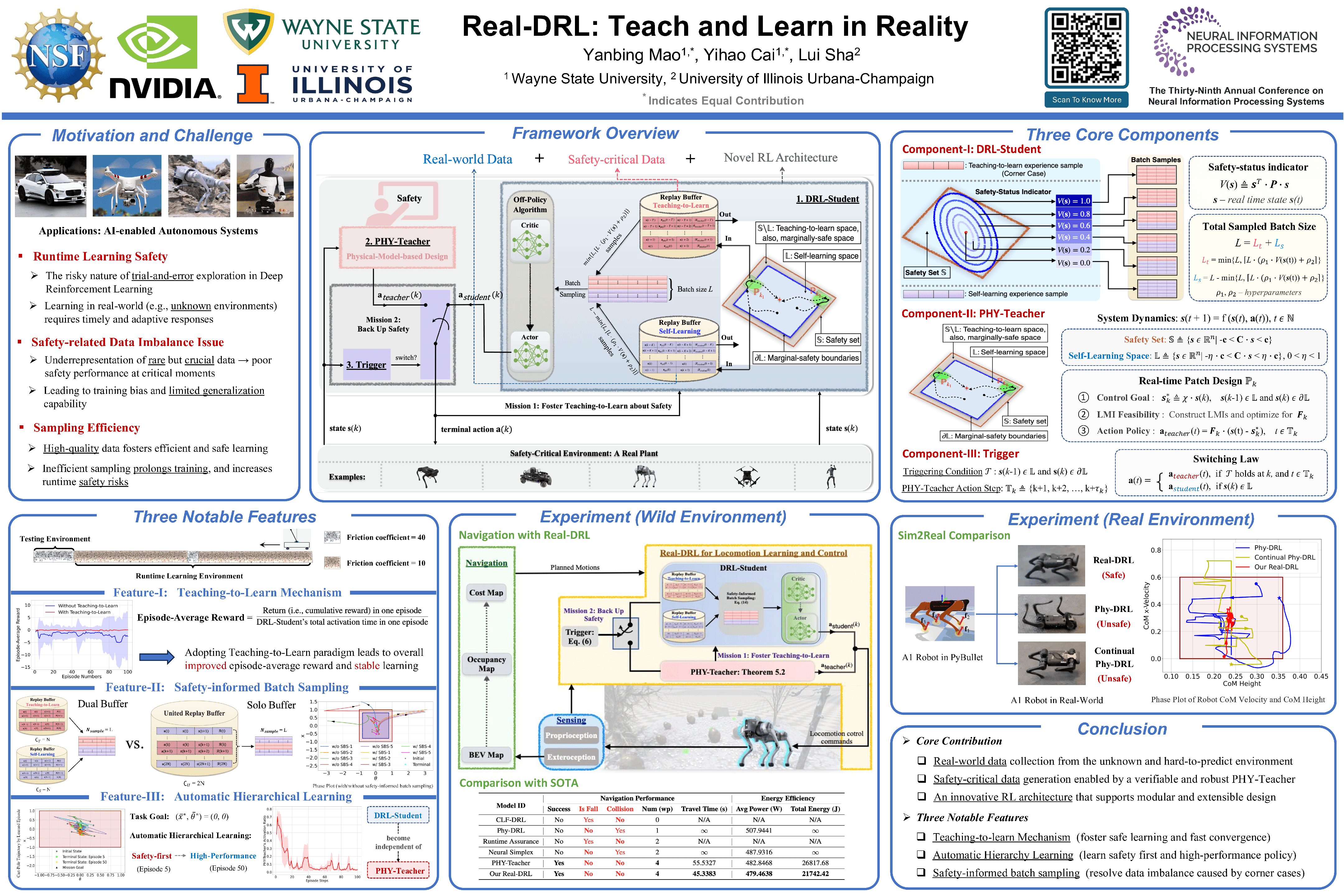

Real-DRL: Teach and Learn in Reality

Abstract

This paper introduces Real-DRL, a framework for safety-critical autonomous systems. It lets a deep reinforcement learning (DRL) agent learn at runtime, developing safe and high-performance action policies in real plants while prioritizing safety.

Real-DRL consists of three interacting components: a DRL-Student, a PHY-Teacher, and a Trigger. The DRL-Student is a DRL agent whose innovations are a dual self-learning and teaching-to-learn paradigm and safety-status-dependent batch sampling. The PHY-Teacher, by contrast, is a physics-model-based design of action policies that focuses solely on safety-critical functions. Its novelty is a real-time patch that serves two key missions:

- fostering the teaching-to-learn paradigm for DRL-Student

- backing up the safety of real plants.
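The interplay of the three components can be sketched as follows. This is a minimal illustration, not the paper's implementation: the abstract does not specify the safety condition or the switching rule, so the box-shaped safety check `is_safe`, the function `trigger_step`, and the tagged experience `buffer` are all hypothetical names and assumptions made here.

```python
from typing import Callable, List, Sequence, Tuple

State = Sequence[float]
Policy = Callable[[State], float]
Record = Tuple[Tuple[float, ...], float, str]


def is_safe(state: State, bounds: Sequence[float]) -> bool:
    # Assumption: the safe set is a box, i.e. each state component
    # must stay within a symmetric bound. The paper may define it differently.
    return all(abs(x) <= b for x, b in zip(state, bounds))


def trigger_step(
    state: State,
    student: Policy,
    teacher: Policy,
    bounds: Sequence[float],
    buffer: List[Record],
) -> Tuple[float, str]:
    # Trigger (assumed rule): the student acts while the state is safe;
    # otherwise the teacher takes over to back up the plant's safety.
    if is_safe(state, bounds):
        action, source = student(state), "student"
    else:
        action, source = teacher(state), "teacher"
    # Every action is stored with its source, so teacher-generated
    # experience is available to the student (teaching-to-learn).
    buffer.append((tuple(state), action, source))
    return action, source
```

Tagging each record with its source is one simple way to let a learner treat teacher-generated experience differently from its own; how the student actually consumes that experience is not described in the abstract.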

Powered by these three components, Real-DRL can effectively address safety challenges that arise from unknown unknowns and the Sim2Real gap. Real-DRL also notably features:

- assured safety

- automatic hierarchy learning (i.e., safety-first learning and then high-performance learning)

- safety-informed batch sampling to address the experience imbalance caused by corner cases.
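To make the idea of safety-informed batch sampling concrete, here is a hedged sketch of one possible scheme. It assumes two experience buffers (ordinary and corner-case) and a scalar `risk` in [0, 1] that sets the fraction of the batch drawn from the corner-case buffer. The function `sample_batch`, the two-buffer split, and the linear mixing rule are assumptions for illustration, not the method described in the paper.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def sample_batch(
    safe_buffer: Sequence[T],
    corner_buffer: Sequence[T],
    batch_size: int,
    risk: float,
    rng: random.Random,
) -> List[T]:
    # Nothing to sample from.
    if not safe_buffer and not corner_buffer:
        return []
    # Assumption: risk in [0, 1] is the share of corner-case samples.
    risk = min(max(risk, 0.0), 1.0)
    n_corner = round(batch_size * risk)
    # Fall back to the non-empty buffer if one of them is empty.
    if not corner_buffer:
        n_corner = 0
    elif not safe_buffer:
        n_corner = batch_size
    n_safe = batch_size - n_corner
    # Sample with replacement so rare corner cases can be over-represented.
    batch = [rng.choice(corner_buffer) for _ in range(n_corner)]
    batch += [rng.choice(safe_buffer) for _ in range(n_safe)]
    rng.shuffle(batch)
    return batch
```

Sampling with replacement lets a small corner-case buffer fill a large share of the batch, which is one way to counter the experience imbalance mentioned above.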

Experiments with a real quadruped robot, a quadruped robot in NVIDIA Isaac Gym, and a cart-pole system, along with comparisons and ablation studies, demonstrate Real-DRL's effectiveness and unique features.