Poster Session 4 · Thursday, December 4, 2025 4:30 PM → 7:30 PM

#2101

S$^2$NN: Sub-bit Spiking Neural Networks

Wenjie Wei, Malu Zhang, Jieyuan Zhang, Ammar Belatreche, Shuai Wang, Yimeng Shan, Hanwen Liu, Honglin Cao, Guoqing Wang, Yang Yang, Haizhou Li

Abstract

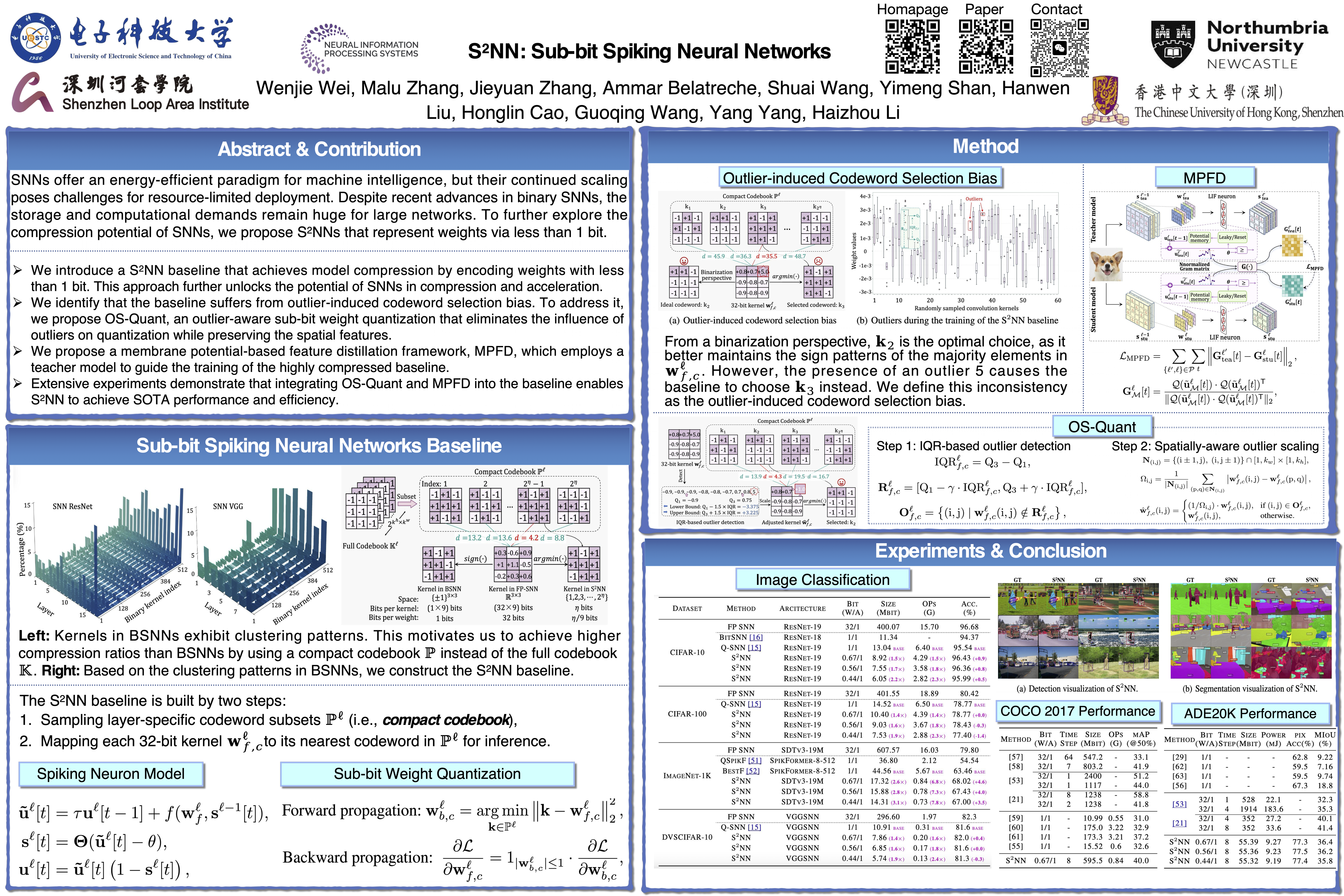

Spiking Neural Networks (SNNs) offer an energy-efficient paradigm for machine intelligence, but their continued scaling poses challenges for resource-limited deployment. Despite recent advances in binary SNNs, the storage and computational demands remain substantial for large-scale networks. To further explore the compression and acceleration potential of SNNs, we propose Sub-bit Spiking Neural Networks (S$^2$NNs), which represent weights with less than one bit.

Specifically, we first establish an S$^2$NN baseline by leveraging the clustering patterns of kernels in well-trained binary SNNs. This baseline is highly efficient but suffers from outlier-induced codeword selection bias during training. To mitigate this issue, we propose an outlier-aware sub-bit weight quantization (OS-Quant) method, which optimizes codeword selection by identifying and adaptively scaling outliers. Furthermore, we propose a membrane potential-based feature distillation (MPFD) method, which improves the performance of the highly compressed S$^2$NN via more precise guidance from a teacher model.
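As a rough illustration of how a weight can cost less than one bit, the sketch below maps each 3×3 kernel to the nearest entry of a small codebook of binary kernels, so that only the codeword index is stored per kernel. This is a minimal sketch under stated assumptions, not the authors' implementation: the function names `assign_codewords` and `bits_per_weight`, the Euclidean nearest-codeword rule, the random codebook, and the codebook size of 32 are all hypothetical choices made for illustration.

```python
import numpy as np


def assign_codewords(kernels, codebook):
    """Map each real-valued kernel to its nearest codeword (assumed Euclidean rule)."""
    flat = kernels.reshape(len(kernels), -1)      # (N, k*k)
    codes = codebook.reshape(len(codebook), -1)   # (K, k*k)
    # Squared distance between every kernel and every codeword: shape (N, K).
    dists = ((flat[:, None, :] - codes[None, :, :]) ** 2).sum(axis=-1)
    idx = dists.argmin(axis=1)                    # index of the closest codeword
    return idx, codebook[idx]


def bits_per_weight(num_codewords, weights_per_kernel):
    """Storage cost per weight when only a codeword index is kept per kernel."""
    return np.log2(num_codewords) / weights_per_kernel


# Hypothetical setup: 64 latent 3x3 kernels and a codebook of 32 binary kernels.
rng = np.random.default_rng(0)
kernels = rng.normal(size=(64, 3, 3))
codebook = rng.choice([-1.0, 1.0], size=(32, 3, 3))

idx, quantized = assign_codewords(kernels, codebook)
cost = bits_per_weight(len(codebook), 9)          # log2(32) / 9 = 5/9 bit per weight
```

With 32 codewords, each 3×3 kernel needs a 5-bit index, i.e. 5/9 of a bit per weight, compared with 1 bit per weight for a fully binary kernel. Under a distance-based rule like the one assumed here, a single large-magnitude entry in a kernel can dominate the distance and steer the choice of codeword, which is one plausible reading of the selection bias that OS-Quant is described as addressing.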

Extensive results on vision tasks show that S$^2$NN outperforms existing quantized SNNs in both performance and efficiency, making it promising for edge computing applications.